
Deploy a Tanzu Kubernetes Cluster


This final section covers the deployment of a Tanzu Kubernetes Cluster (TKG cluster). A Tanzu Kubernetes Cluster is a full distribution of the open-source Kubernetes container orchestration platform. It is deployed on a vSphere with Tanzu Supervisor Cluster by using the Tanzu Kubernetes Grid Service.

Workloads and services can be deployed to Tanzu Kubernetes Clusters using the same tools and methods as with standard Kubernetes clusters. DevOps teams may prefer TKG clusters because they have control over the Kubernetes cluster, including root-level access to the control plane and worker nodes. Among other things, this control allows DevOps teams to create their own namespaces and keep the TKG cluster on current Kubernetes versions without having to manage or upgrade the vSphere Supervisor Cluster.

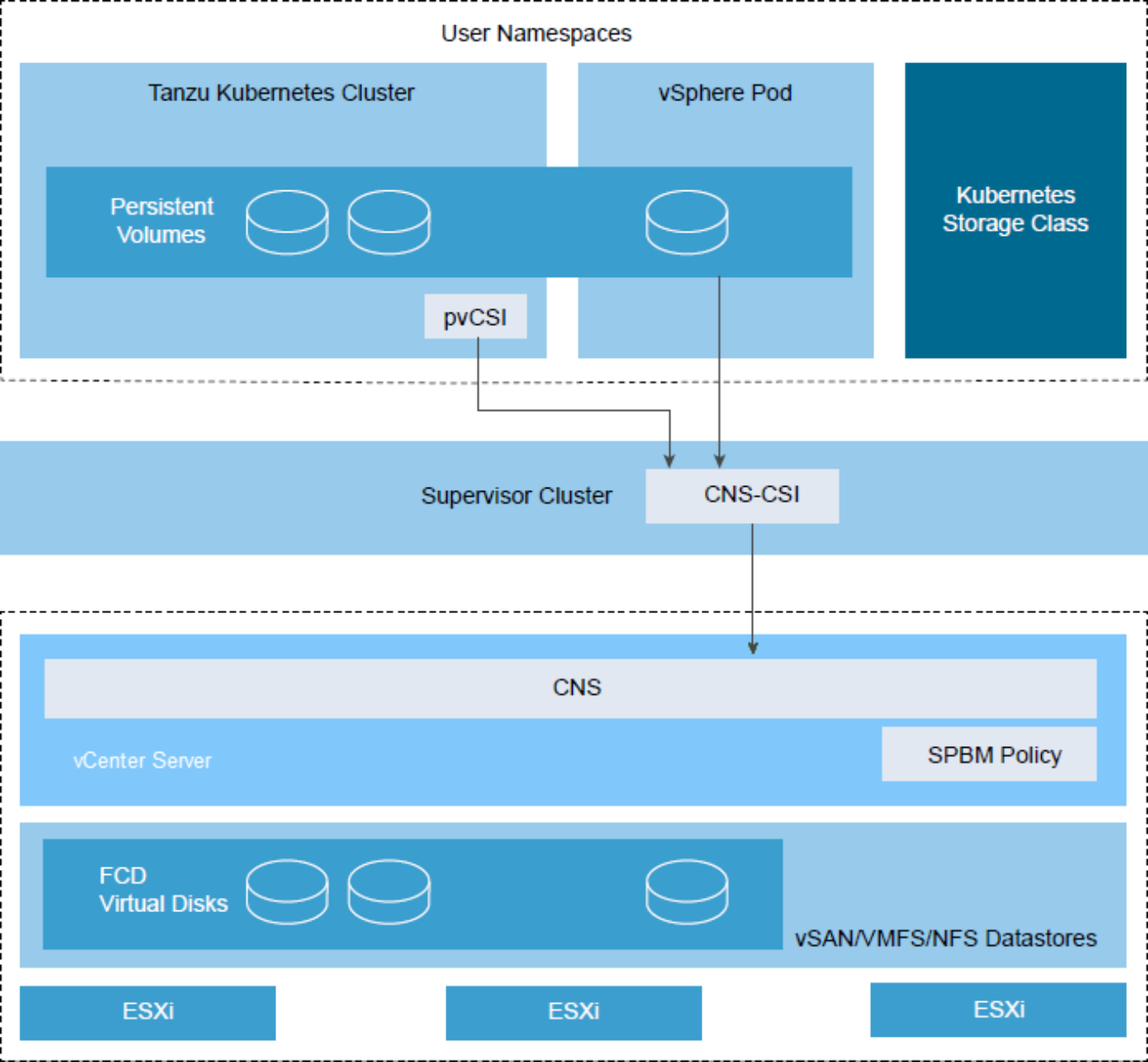

vSphere with Tanzu uses the vSphere CSI driver to consume storage, while TKG clusters use a separate Paravirtual CSI (pvCSI) driver. The pvCSI is a version of the vSphere CNS-CSI driver modified for Tanzu Kubernetes clusters. It resides in the Tanzu Kubernetes cluster and is responsible for all storage-related requests originating from that cluster. The pvCSI does not communicate directly with the CNS component. Instead, it relies on the CNS-CSI for storage provisioning: storage requests are delivered to the CNS-CSI and then propagate to the CNS in vCenter Server.

Figure 18. vSphere CNS-CSI and pvCSI architecture (credit: VMware)
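As an illustration of a storage request that would travel the pvCSI path described above, the following sketch builds a PersistentVolumeClaim manifest for a workload running inside a TKG cluster. It is only a sketch under stated assumptions: the function name `build_pvc`, the claim name `demo-pvc`, the `default` namespace, and the 1Gi size are hypothetical, and it assumes the storage policy is exposed inside the TKG cluster as a storage class with the same name.

```python
import json


def build_pvc(name, storage_class, size="1Gi", namespace="default"):
    """Return a PersistentVolumeClaim manifest as a plain dict.

    All argument values are illustrative. The storage class is assumed to be
    visible inside the TKG cluster under the name passed in.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests as well as YAML.
    print(json.dumps(build_pvc("demo-pvc", "vk8s-vvol-silver"), indent=2))
```

The printed JSON could be piped to `kubectl apply -f -` while the TKG cluster context is active.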

To deploy a Tanzu Kubernetes Cluster, follow these steps:

- Open a text editor and construct the .yaml file. There are many community-driven GitHub repositories with example code and demo applications that can be used instead of creating from scratch. This one is a good example. In this deployment, the TKG cluster named tkc-01 will consist of one control plane node and three worker nodes. In addition, each node will consume vVol storage using the vk8s-vvol-silver storage policy created earlier.

      apiVersion: run.tanzu.vmware.com/v1alpha1 #assumed API version; the group matches the kubectl apply output
      kind: TanzuKubernetesCluster              #required parameter
      metadata:
        name: tkc-01                            #cluster name, user defined
        namespace: devops                       #supervisor namespace
      spec:
        distribution:
          version: v1.16                        #resolved kubernetes version
        topology:
          controlPlane:
            count: 1                            #number of control plane nodes
            class: guaranteed-small             #vmclass for control plane nodes
            storageClass: vk8s-vvol-silver      #storageclass for control plane nodes
          workers:
            count: 3                            #number of worker nodes
            class: guaranteed-small             #vmclass for worker nodes
            storageClass: vk8s-vvol-silver      #storageclass for worker nodes

- Use Kubernetes CLI Tools to log in and switch to the devops vSphere Namespace.

      C:\>kubectl vsphere login --server=https://xxx.xxx.xxx.193 --vsphere-username devops@vsphere.local --insecure-skip-tls-verify

      Password:
      Logged in successfully.

      You have access to the following contexts:
         xxx.xxx.xxx.193
         devops

      If the context you wish to use is not in this list, you may need to try
      logging in again later, or contact your cluster administrator.

      To change context, use `kubectl config use-context <workload name>`

      C:\>kubectl config use-context devops
      Switched to context "devops".

- Apply the .yaml file to deploy the TKG cluster.

      C:\>kubectl apply -f C:\Users\jboche\Downloads\Kubernetes-master\Kubernetes-master\demo-applications\create-tkc-cluster.yaml
      tanzukubernetescluster.run.tanzu.vmware.com/tkc-01 created
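The nesting of the manifest fields used in the steps above can be sketched programmatically. The example below builds the same cluster definition as a Python dictionary and prints it as JSON, which kubectl accepts in place of YAML. The function names `build_tkc_manifest` and `_node_pool` are hypothetical, and the `v1alpha1` API version is an assumption: only the `run.tanzu.vmware.com` group appears in the kubectl output.

```python
import json


def _node_pool(count, vm_class, storage_class):
    """Describe one node pool (control plane or workers)."""
    return {"count": count, "class": vm_class, "storageClass": storage_class}


def build_tkc_manifest(name, namespace, version, control_plane_count,
                       worker_count, vm_class, storage_class):
    """Return a TanzuKubernetesCluster manifest as a plain dict."""
    return {
        # Assumption: v1alpha1 is the API version served for this group.
        "apiVersion": "run.tanzu.vmware.com/v1alpha1",
        "kind": "TanzuKubernetesCluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "distribution": {"version": version},
            "topology": {
                "controlPlane": _node_pool(control_plane_count, vm_class, storage_class),
                "workers": _node_pool(worker_count, vm_class, storage_class),
            },
        },
    }


if __name__ == "__main__":
    manifest = build_tkc_manifest(
        name="tkc-01",
        namespace="devops",
        version="v1.16",
        control_plane_count=1,
        worker_count=3,
        vm_class="guaranteed-small",
        storage_class="vk8s-vvol-silver",
    )
    print(json.dumps(manifest, indent=2))
```

Generating the manifest this way makes it straightforward to vary node counts or storage policies across clusters while keeping the structure fixed.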

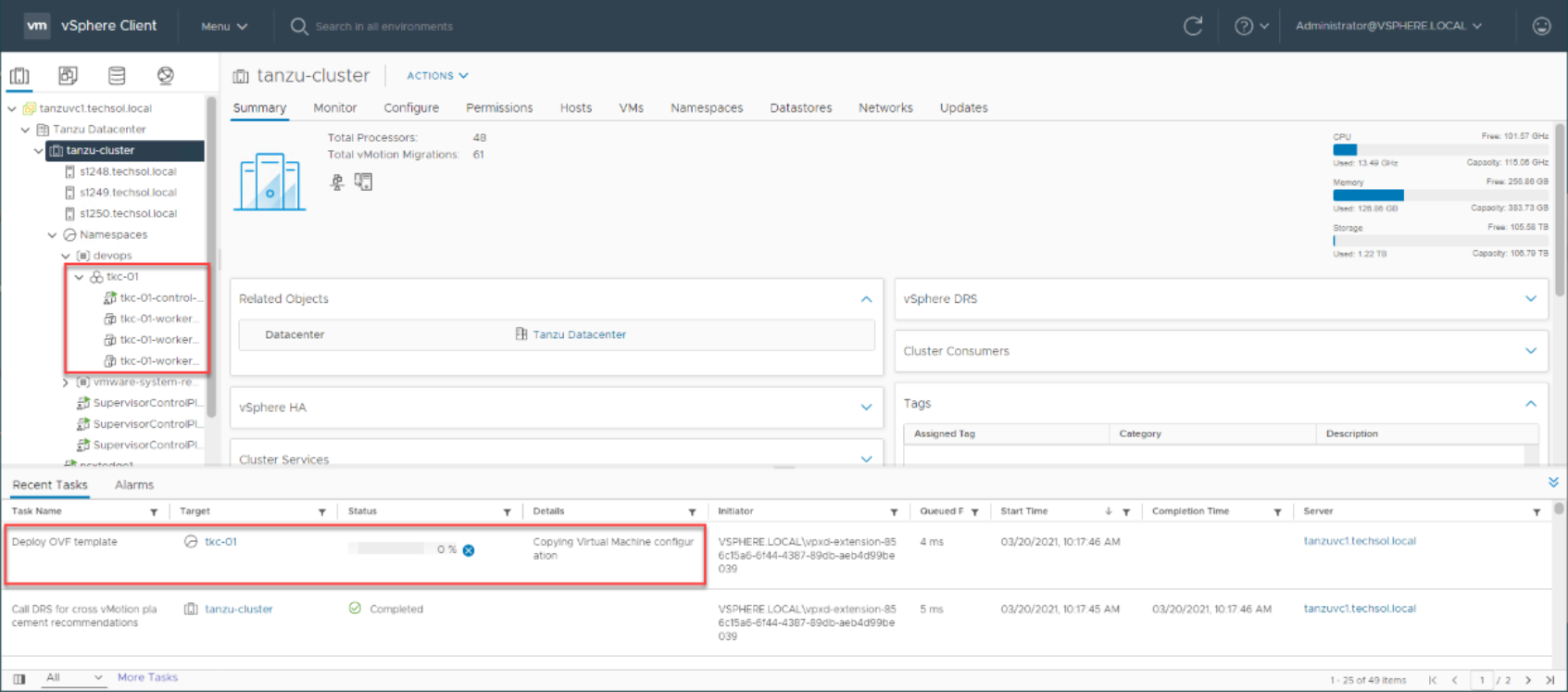

The deployment process will take several minutes. The progress can be monitored in the vSphere UI shown below. Watch as the control plane node is created, followed by the worker nodes.

Figure 19. Monitoring the deployment of a TKG cluster in the vSphere UI

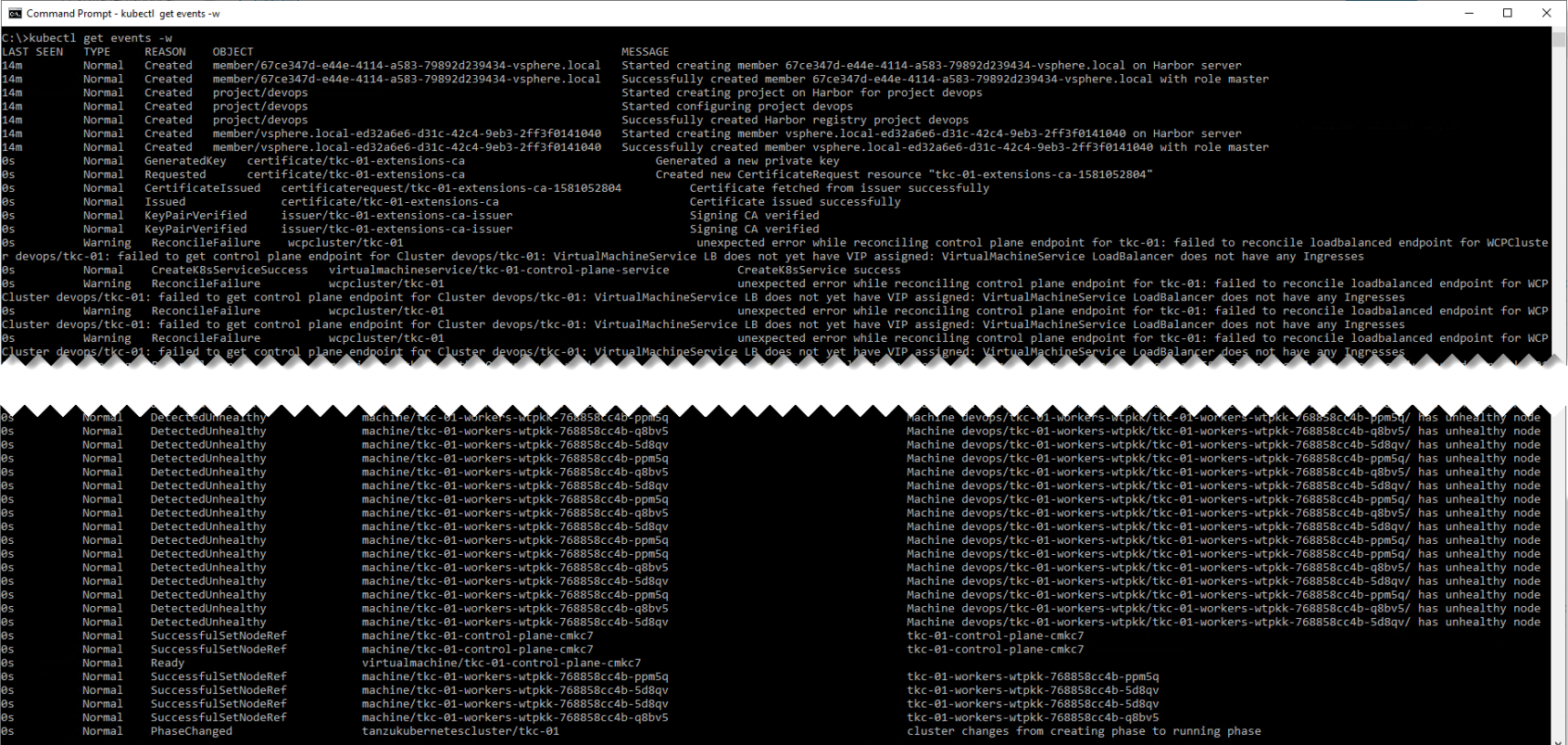

The deployment process can also be monitored using the Kubernetes CLI Tools with the kubectl get events -w command, as shown below. It is not uncommon to see many errors scroll by during the deployment. These errors are typically transient, occurring while dependent resources are still being created, and can usually be ignored.

Figure 20. Monitoring the deployment of a TKG cluster using the Kubernetes CLI Tools
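Because the raw event stream can be noisy, it may help to summarize it. The following sketch counts events by type and reason from the JSON form of the event list (`kubectl get events -o json`). The function name `summarize_events` is hypothetical; the `type` and `reason` fields are standard fields of Kubernetes Event objects.

```python
import json
from collections import Counter


def summarize_events(events_json):
    """Count events by (type, reason) from `kubectl get events -o json` output."""
    items = json.loads(events_json).get("items", [])
    return Counter(
        (event.get("type", "Unknown"), event.get("reason", "Unknown"))
        for event in items
    )


# Example use (requires kubectl and an active login; not run here):
#   import subprocess
#   out = subprocess.run(
#       ["kubectl", "get", "events", "-n", "devops", "-o", "json"],
#       capture_output=True, text=True, check=True,
#   ).stdout
#   for (etype, reason), n in summarize_events(out).most_common():
#       print(n, etype, reason)
```

A Warning count that keeps growing for the same reason after the nodes should be up is a better signal of a real problem than any single error line.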

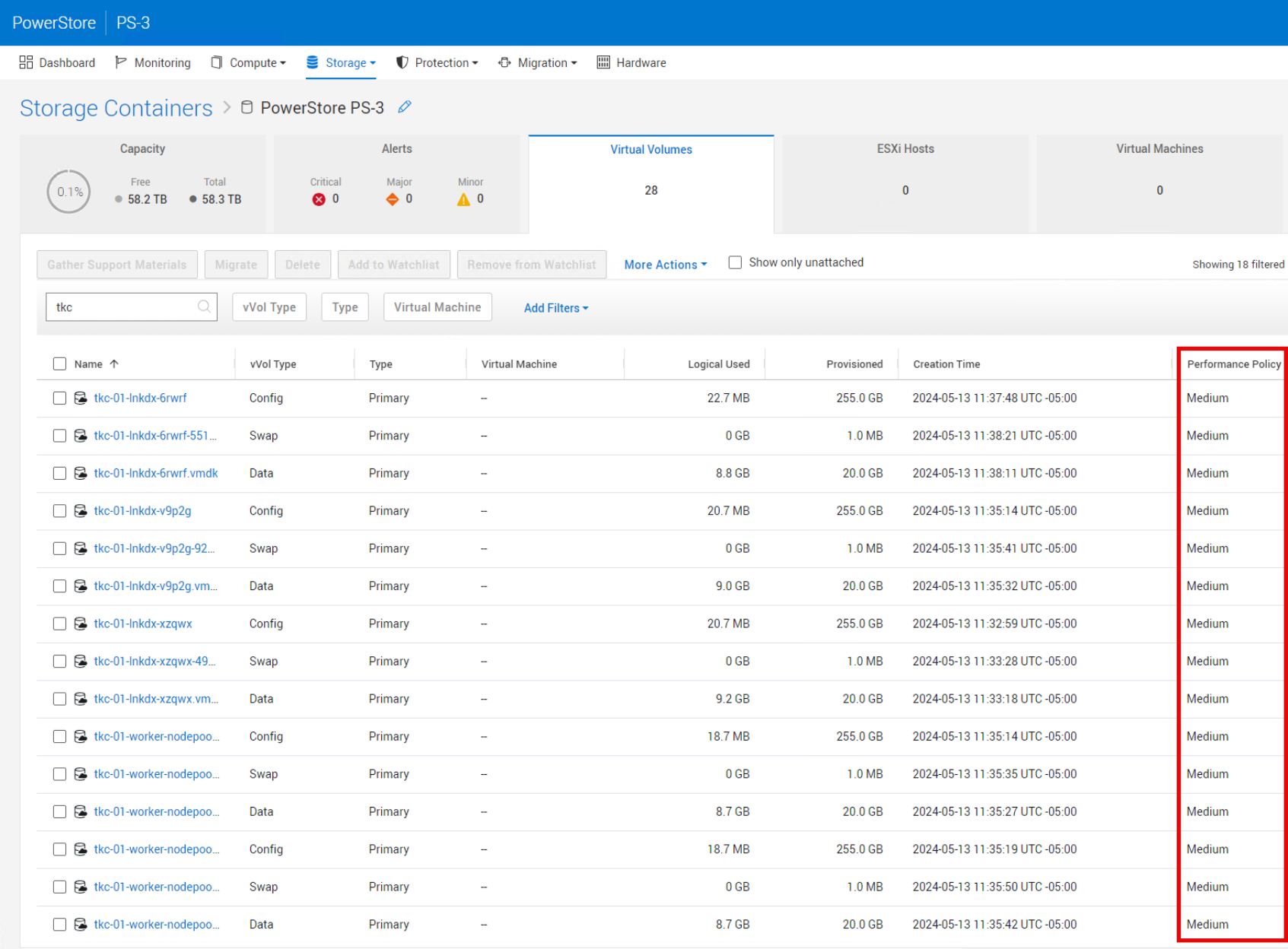

The PowerStore Manager UI reflects the deployment of the TKG control plane and worker nodes on vVol storage with an IO Priority of Medium.

Figure 21. Examining TKG cluster consumption of vVol storage

After the TKG cluster is deployed, the devops user can log in and look around using the Kubernetes CLI Tools.

    C:\>kubectl vsphere login --server=https://xxx.xxx.xxx.193 --vsphere-username devops@vsphere.local --insecure-skip-tls-verify --tanzu-kubernetes-cluster-name tkc-01 --tanzu-kubernetes-cluster-namespace devops

    Password:
    Logged in successfully.

    You have access to the following contexts:
       xxx.xxx.xxx.193
       devops
       tkc-01

    If the context you wish to use is not in this list, you may need to try
    logging in again later, or contact your cluster administrator.

    To change context, use `kubectl config use-context <workload name>`

    C:\>kubectl config use-context tkc-01
    Switched to context "tkc-01".

    C:\>kubectl get nodes
    NAME                                    STATUS   ROLES    AGE   VERSION
    tkc-01-control-plane-cmkc7              Ready    master   76m   v1.16.14+vmware.1
    tkc-01-workers-wtpkk-768858cc4b-5d8qv   Ready    <none>   72m   v1.16.14+vmware.1
    tkc-01-workers-wtpkk-768858cc4b-ppm5q   Ready    <none>   72m   v1.16.14+vmware.1
    tkc-01-workers-wtpkk-768858cc4b-q8bv5   Ready    <none>   72m   v1.16.14+vmware.1

    C:\>kubectl config get-contexts
    CURRENT   NAME              CLUSTER           AUTHINFO                                   NAMESPACE
              xxx.xxx.xxx.193   xxx.xxx.xxx.193   wcp:xxx.xxx.xxx.193:devops@vsphere.local
              devops            xxx.xxx.xxx.193   wcp:xxx.xxx.xxx.193:devops@vsphere.local   devops
    *         tkc-01            xxx.xxx.xxx.195   wcp:xxx.xxx.xxx.195:devops@vsphere.local

    C:\>kubectl cluster-info
    Kubernetes master is running at https://xxx.xxx.xxx.195:6443
    KubeDNS is running at https://xxx.xxx.xxx.195:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

The TKG cluster has been deployed successfully and is ready for management and deployment of containerized applications and services by the DevOps team.
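The readiness check performed by eye on the kubectl get nodes output can also be scripted. The sketch below parses the plain-text table and returns the names of any nodes whose STATUS is not exactly Ready. The function name `not_ready_nodes` is hypothetical, and the sketch assumes the default column layout with NAME first and STATUS second.

```python
def not_ready_nodes(get_nodes_output):
    """Return names of nodes whose STATUS column is not exactly 'Ready'.

    Expects the default text table printed by `kubectl get nodes`.
    Compound states such as 'Ready,SchedulingDisabled' are reported as
    not ready, which is a deliberately strict choice.
    """
    lines = [line for line in get_nodes_output.splitlines() if line.strip()]
    bad = []
    for line in lines[1:]:  # skip the header row
        fields = line.split()
        if len(fields) < 2:
            continue  # ignore malformed rows
        name, status = fields[0], fields[1]
        if status != "Ready":
            bad.append(name)
    return bad


# Example use (requires kubectl and an active login; not run here):
#   import subprocess
#   out = subprocess.run(["kubectl", "get", "nodes"],
#                        capture_output=True, text=True, check=True).stdout
#   print(not_ready_nodes(out) or "all nodes Ready")
```

An empty list corresponds to the state shown in the listing above, where the control plane node and all three worker nodes report Ready.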