
NVMe over TCP (NVMe/TCP)


NVMe over TCP support was introduced with vSphere 7.0 Update 3 and PowerStoreOS 2.1. When planning to implement this new protocol, confirm that the host’s networking hardware is supported in the VMware Compatibility Guide.

This section provides a high-level overview of configuration best practices. For more information, see the PowerStore resources on the Dell Technologies Info Hub.

NVMe/TCP host configurations

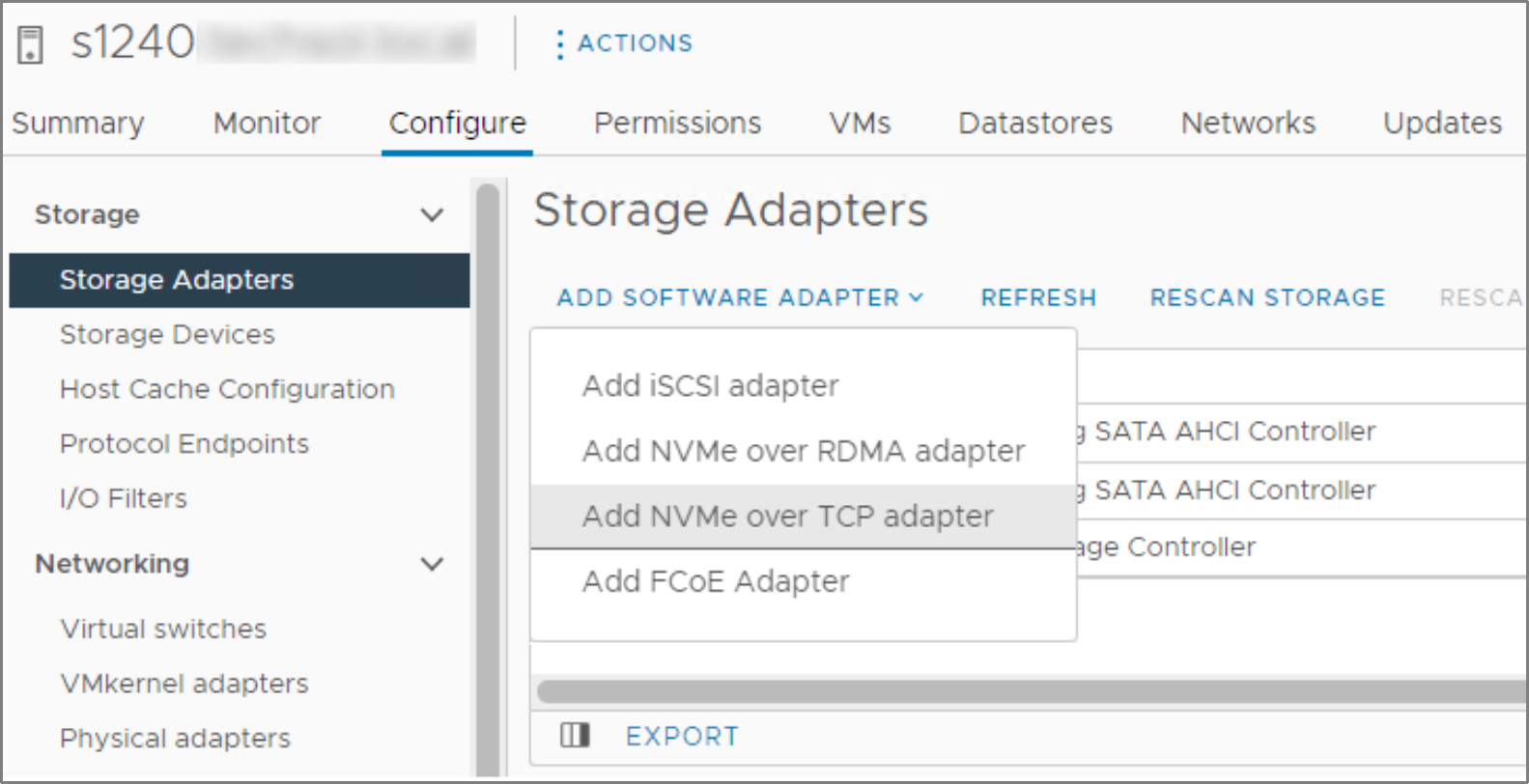

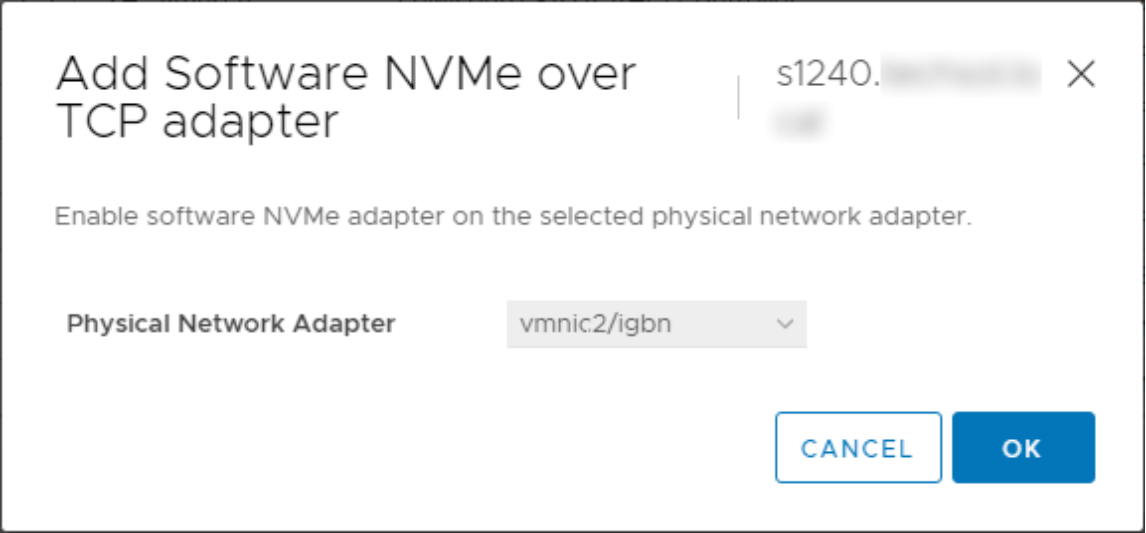

As with iSCSI, ESXi 7.0 U3 adds a software adapter for NVMe over TCP (Figure 6). After the software adapter is added, it becomes associated with a physical network adapter (Figure 7).

Figure 6. Adding an NVMe over TCP software adapter

Figure 7. Associating the adapter with a physical NIC

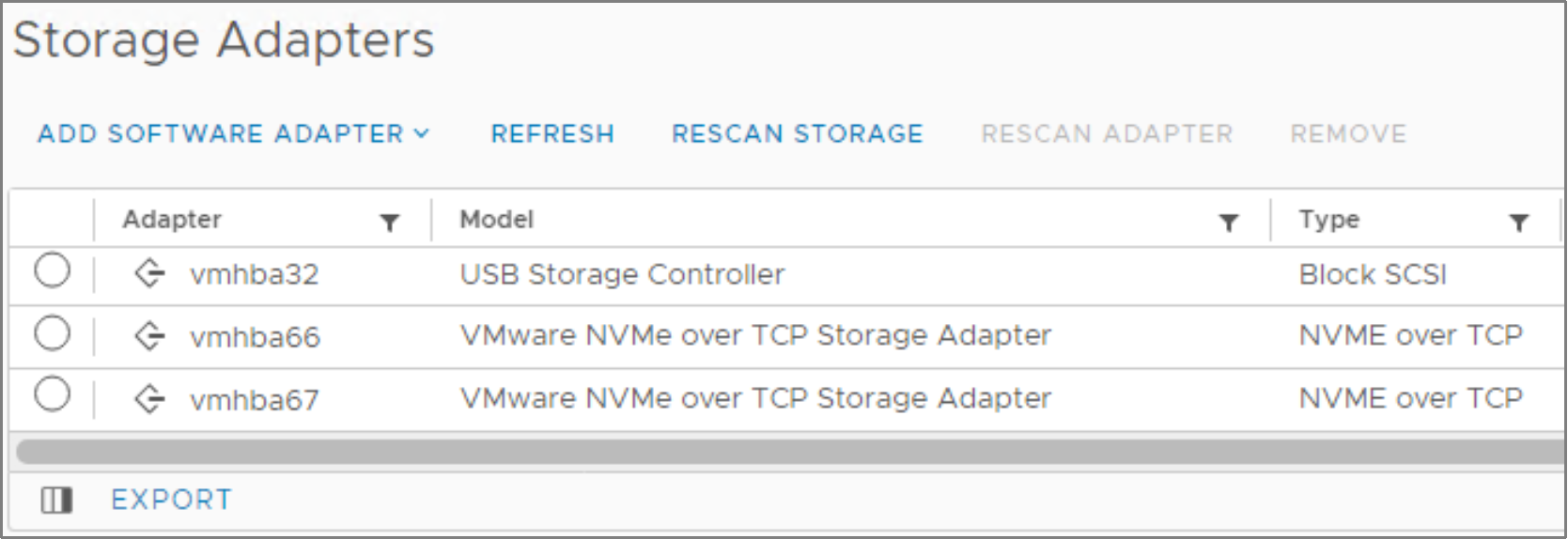

The best practice for storage network redundancy is to add two NVMe over TCP adapters and associate them with their respective storage network’s physical NICs (see the following figure).

Figure 8. NVMe over TCP storage adapters

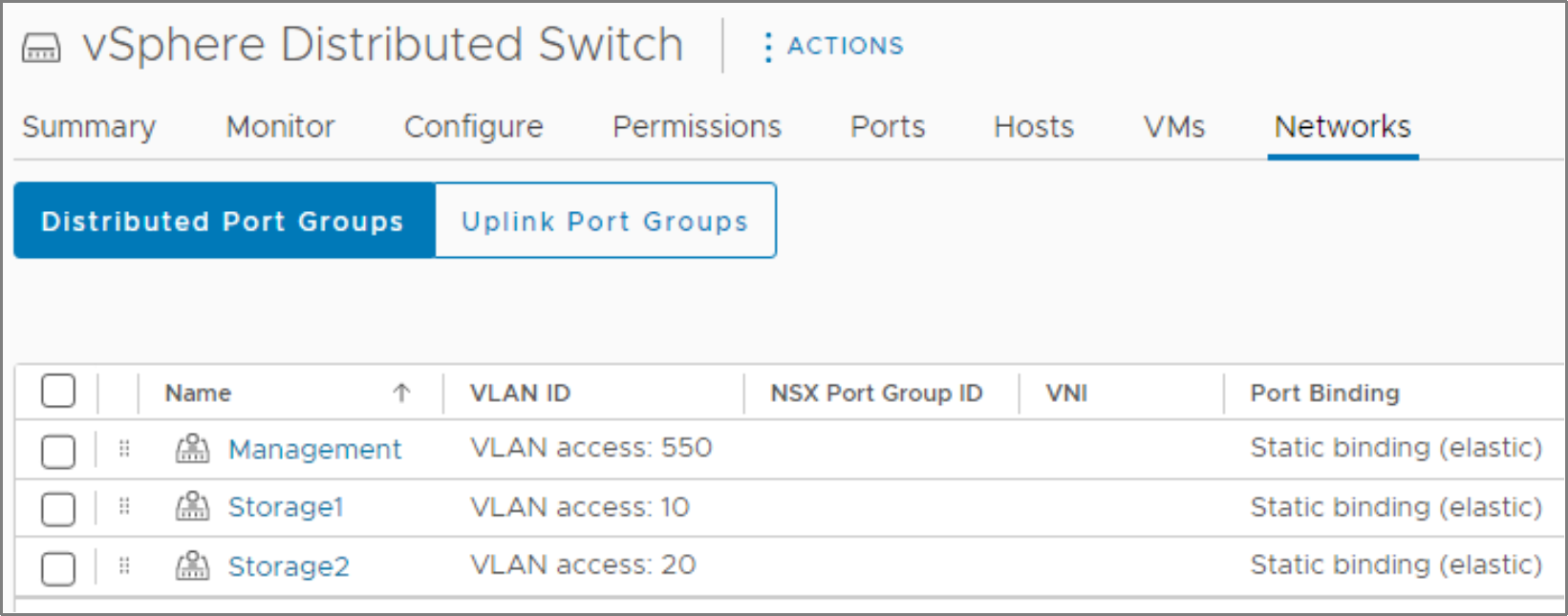

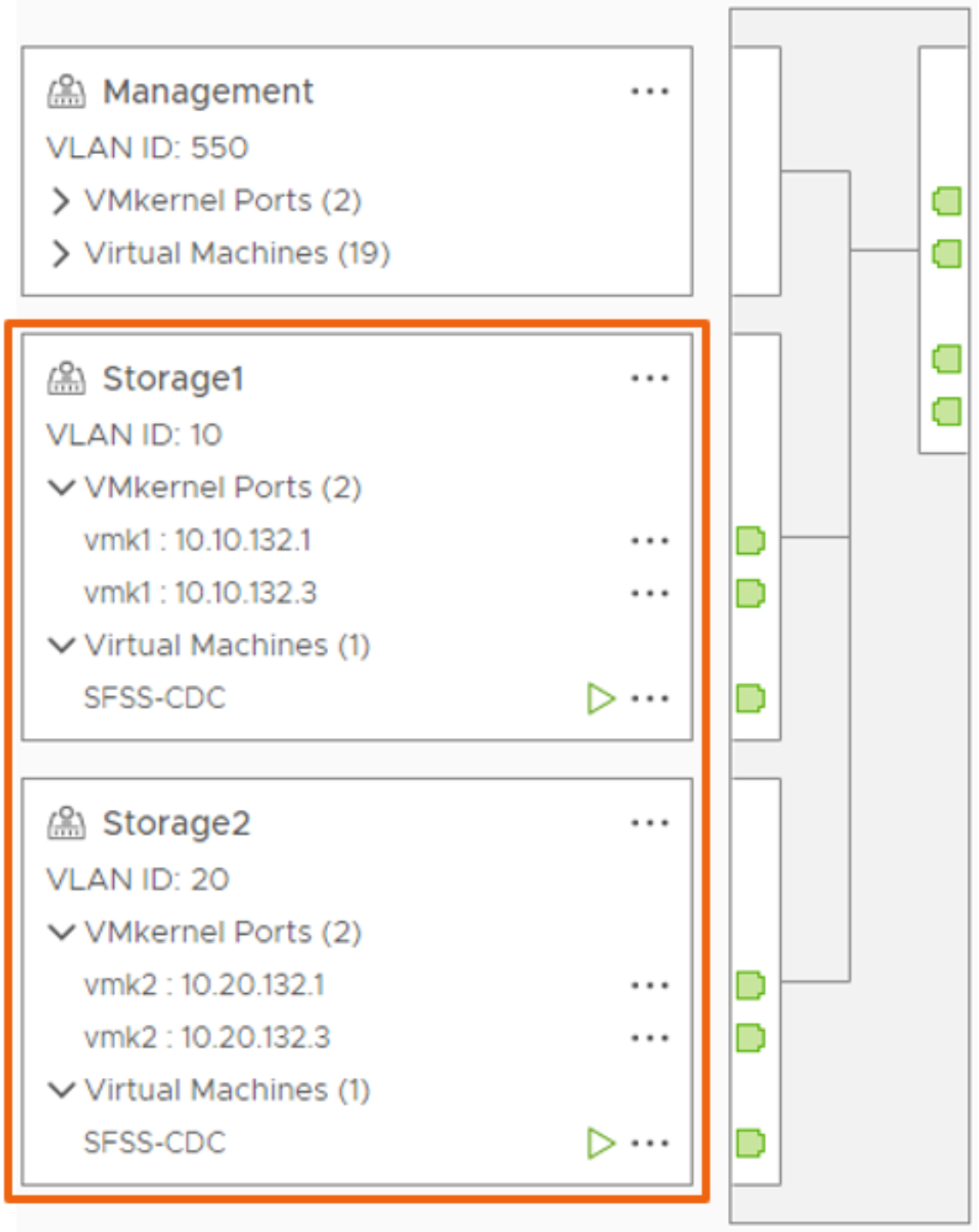

After you add the storage adapters, you can configure the cluster networking. The best practice is to use a vSphere Distributed Switch (VDS) with two distributed port groups, one for each of the redundant storage networks (see the following figure).

Figure 9. Distributed port groups used for storage networks

Note: It is a best practice to use two storage fabrics for redundancy.

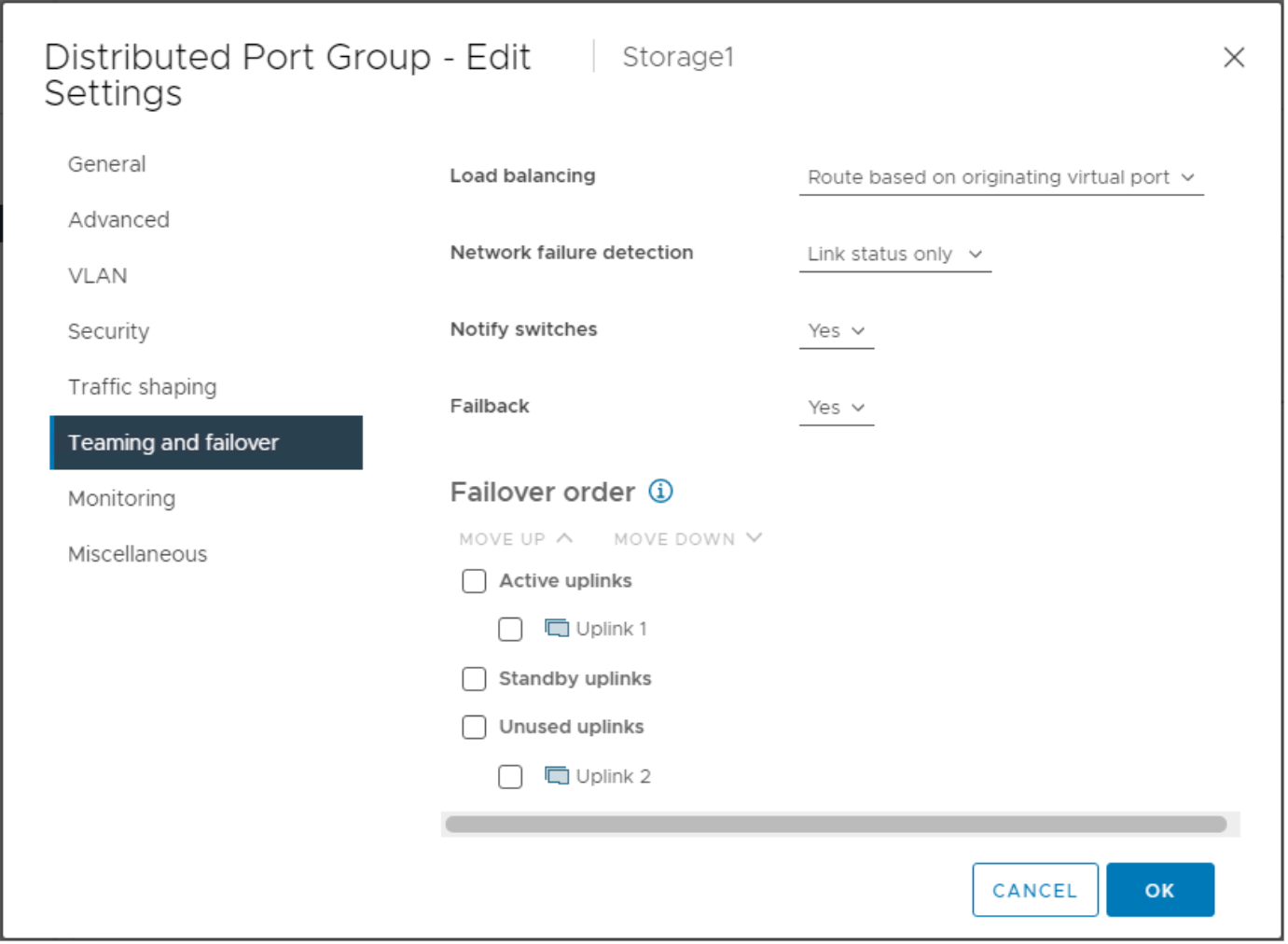

Since each NVMe over TCP storage adapter is bound to a physical NIC, you must adjust the Teaming and Failover for each distributed port group. Set the physical uplink that is bound to the vmhba to Active, and set the other NICs to Unused (see the following figure).

Figure 10. Teaming and failover settings for the distributed port group
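The teaming rule above can be expressed as a small consistency check. The following is a minimal sketch, not a vSphere API call: the port group names, uplink names, and the dictionary layout are hypothetical and would need to be filled in from your own VDS configuration.

```python
def check_teaming(port_groups, bound_uplinks):
    """Return a list of problems; an empty list means the layout follows the guidance.

    port_groups:   {port group name: {uplink name: "active" | "standby" | "unused"}}
    bound_uplinks: {port group name: uplink bound to that network's NVMe/TCP adapter}
    Both structures are hypothetical inputs for this sketch.
    """
    problems = []
    for pg, uplinks in port_groups.items():
        bound = bound_uplinks[pg]
        if bound not in uplinks:
            problems.append(f"{pg}: bound uplink {bound} is not in the team")
        for uplink, state in uplinks.items():
            # Only the bound uplink may be Active; every other uplink must be Unused.
            wanted = "active" if uplink == bound else "unused"
            if state != wanted:
                problems.append(f"{pg}: {uplink} is {state}, expected {wanted}")
    return problems


# Hypothetical example: Storage-2 leaves its second uplink in Standby.
EXAMPLE_PORT_GROUPS = {
    "Storage-1": {"Uplink 1": "active", "Uplink 2": "unused"},
    "Storage-2": {"Uplink 1": "standby", "Uplink 2": "active"},
}
EXAMPLE_BOUND = {"Storage-1": "Uplink 1", "Storage-2": "Uplink 2"}

for problem in check_teaming(EXAMPLE_PORT_GROUPS, EXAMPLE_BOUND):
    print(problem)
```

A Standby uplink is flagged as well as an Active one, because the guidance calls for the non-bound NICs to be Unused.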

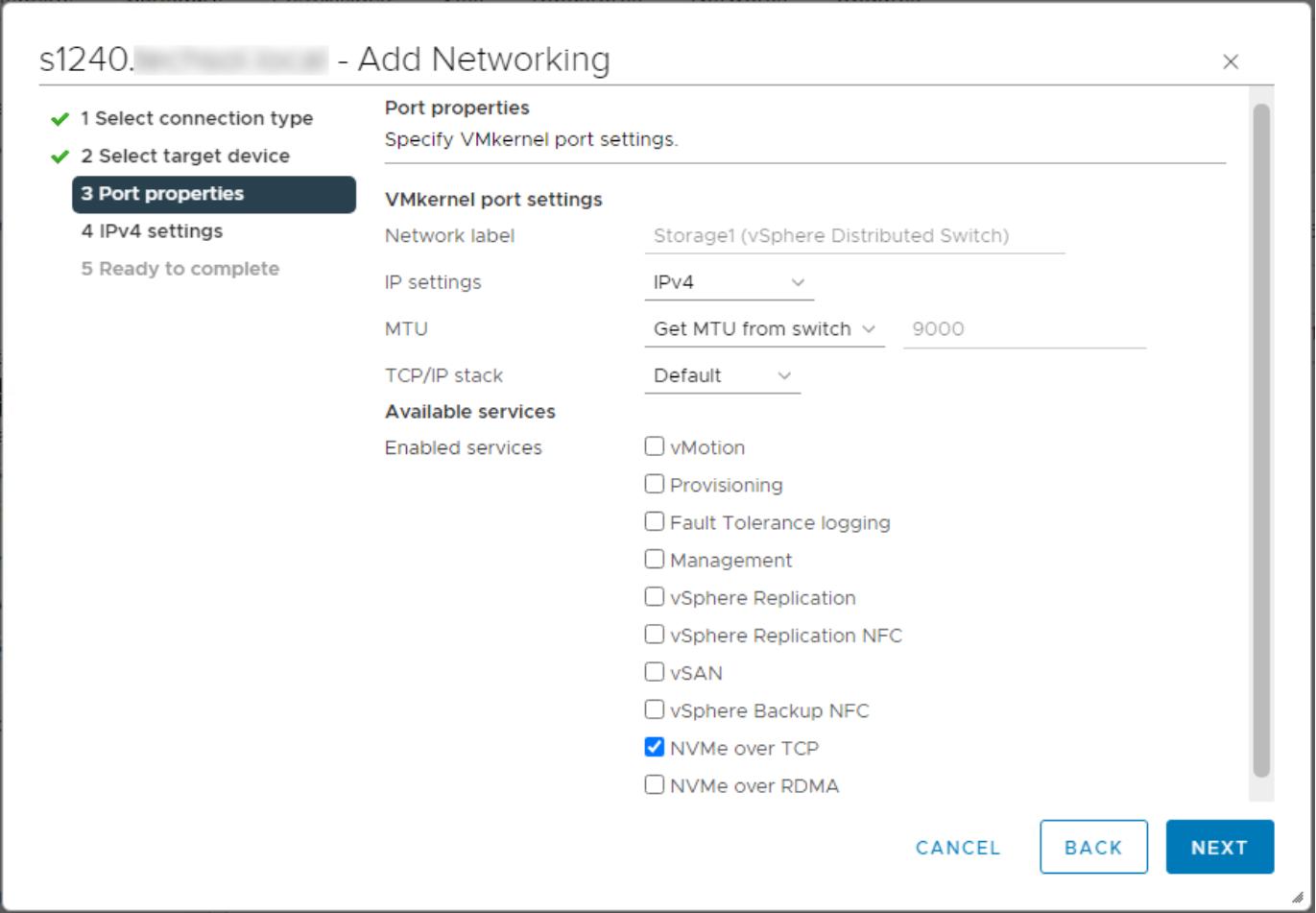

Next, add the VMkernel adapters to their respective distributed port groups, and enable the NVMe over TCP service (see the following figure). These VMkernel adapters supply the IP addresses for each of the storage adapters (for example, vmhba66 or vmhba67 as shown in Figure 8).

Figure 11. VMkernel adapter with NVMe over TCP service enabled
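The host-side steps described so far can also be performed from the ESXi command line. The following sketch only builds and prints the command lines; it does not run them. It assumes the `esxcli nvme fabrics enable` and `esxcli network ip interface tag add` commands available in ESXi 7.0 U3 and later, and the NIC and VMkernel names (`vmnic2`, `vmnic3`, `vmk1`, `vmk2`) are hypothetical placeholders. Verify the syntax against the documentation for your ESXi release before use.

```python
import shlex


def host_setup_commands(fabrics):
    """Build esxcli argument lists for each storage fabric.

    fabrics: list of {"vmnic": physical NIC, "vmk": VMkernel adapter}.
    The names are placeholders supplied by the caller, not discovered values.
    """
    commands = []
    for fabric in fabrics:
        # Create the software NVMe over TCP adapter on the physical NIC.
        commands.append(
            ["esxcli", "nvme", "fabrics", "enable", "-p", "TCP", "-d", fabric["vmnic"]]
        )
        # Enable the NVMe over TCP service on the matching VMkernel adapter.
        commands.append(
            ["esxcli", "network", "ip", "interface", "tag", "add",
             "-i", fabric["vmk"], "-t", "NVMeTCP"]
        )
    return commands


# Hypothetical two-fabric layout, one NIC and one VMkernel adapter per storage network.
EXAMPLE_FABRICS = [
    {"vmnic": "vmnic2", "vmk": "vmk1"},
    {"vmnic": "vmnic3", "vmk": "vmk2"},
]

for cmd in host_setup_commands(EXAMPLE_FABRICS):
    print(shlex.join(cmd))
```

Keeping one NIC and one VMkernel adapter per entry mirrors the one-adapter-per-storage-network layout recommended above.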

After you configure the host and cluster networking pieces, the dual storage networks should look like the example cluster shown in the following figure.

Figure 12. vSphere Distributed Switch topology view

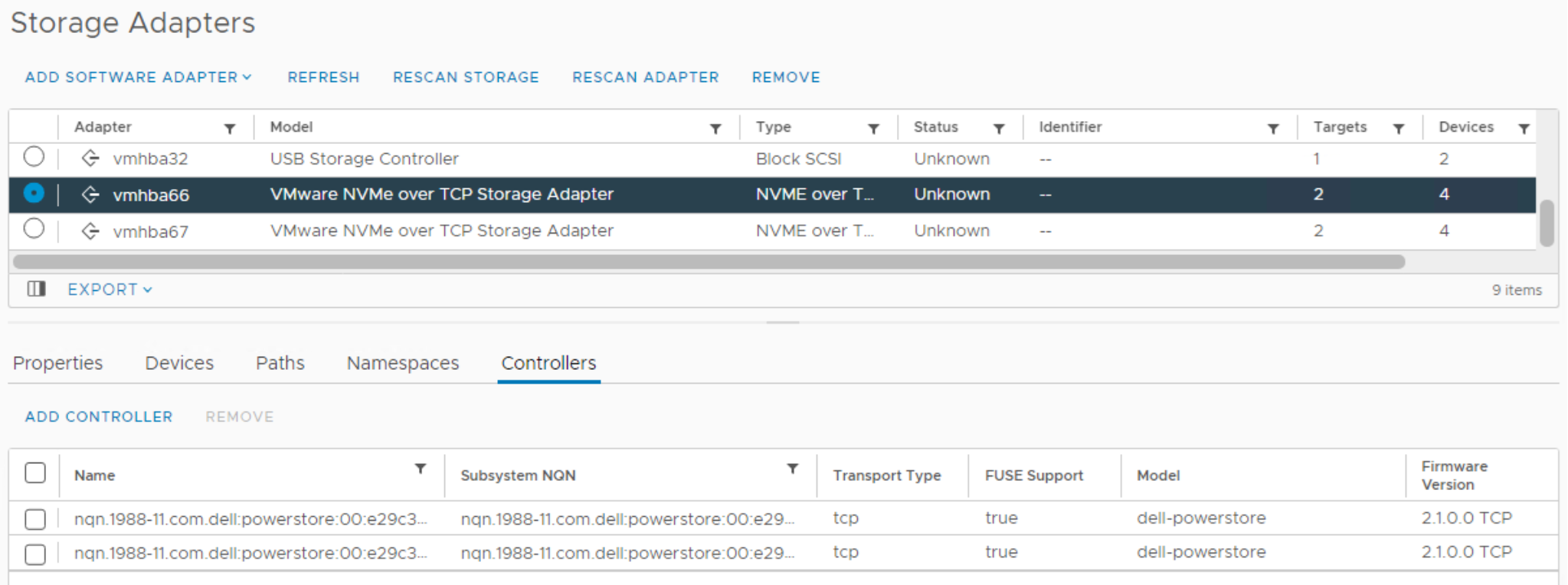

After you complete the prerequisite networking configuration, add the storage controllers to discover the PowerStore array ports and IP addresses. You can add the storage controllers manually by using direct discovery, or automatically by using SmartFabric Storage Software (SFSS) as a Centralized Discovery Controller (CDC). PowerStoreOS 3.0 added enhancements to automate PowerStore registration with the SFSS/CDC. For more information, see the SmartFabric Storage Software (SFSS) for NVMe over TCP – Deployment Guide.
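For direct discovery from the command line, a sketch such as the following can generate the discovery commands. It assumes the `esxcli nvme fabrics discover` command and the registered NVMe/TCP discovery port 8009. The adapter name and target address are hypothetical, so confirm the discovery port and addresses configured on your array.

```python
import ipaddress


def discover_command(adapter, target_ip, port=8009):
    """Build an esxcli direct-discovery command for one array port.

    adapter and target_ip are caller-supplied placeholders; port 8009 is an
    assumption (the registered NVMe/TCP discovery port) and may differ per array.
    """
    # Raises ValueError early if the address is malformed.
    ipaddress.ip_address(target_ip)
    return ["esxcli", "nvme", "fabrics", "discover",
            "-a", adapter, "-i", target_ip, "-p", str(port)]


# Hypothetical example: discover through vmhba66 against one storage network 1 port.
print(" ".join(discover_command("vmhba66", "172.16.1.101")))
```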

After controller discovery, add the respective PowerStore front-end ports to each storage adapter (see the following figure). For example, add storage network 1 ports to vmhba66, and add storage network 2 ports to vmhba67. This process can be streamlined when using zoning capabilities with SFSS.

Figure 13. PowerStore ports added to each NVMe storage adapter
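When many front-end ports are involved, pairing each port with the adapter on the same storage network can be done by subnet. The following is a minimal sketch using Python's standard `ipaddress` module. The adapter-to-VMkernel addressing and the port addresses are hypothetical examples, and it assumes each storage network is a distinct IP subnet.

```python
import ipaddress


def map_ports_to_adapters(adapter_interfaces, target_ports):
    """Group array port IPs under the adapter whose VMkernel subnet contains them.

    adapter_interfaces: {adapter name: "VMkernel IP/prefix"} (hypothetical input)
    target_ports:       list of array front-end port IP strings
    Returns (mapping, unmatched), where unmatched lists ports on no known subnet.
    """
    networks = {
        name: ipaddress.ip_interface(cidr).network
        for name, cidr in adapter_interfaces.items()
    }
    mapping = {name: [] for name in adapter_interfaces}
    unmatched = []
    for port in target_ports:
        addr = ipaddress.ip_address(port)
        for name, network in networks.items():
            if addr in network:
                mapping[name].append(port)
                break
        else:
            # No adapter shares a subnet with this port.
            unmatched.append(port)
    return mapping, unmatched


# Hypothetical addressing for two storage networks.
EXAMPLE_ADAPTERS = {"vmhba66": "172.16.1.11/24", "vmhba67": "172.16.2.11/24"}
EXAMPLE_PORTS = ["172.16.1.101", "172.16.1.102", "172.16.2.101", "172.16.2.102"]

mapping, unmatched = map_ports_to_adapters(EXAMPLE_ADAPTERS, EXAMPLE_PORTS)
print(mapping)
print(unmatched)
```

Ports that land in `unmatched` usually point to an addressing mistake worth checking before adding controllers.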

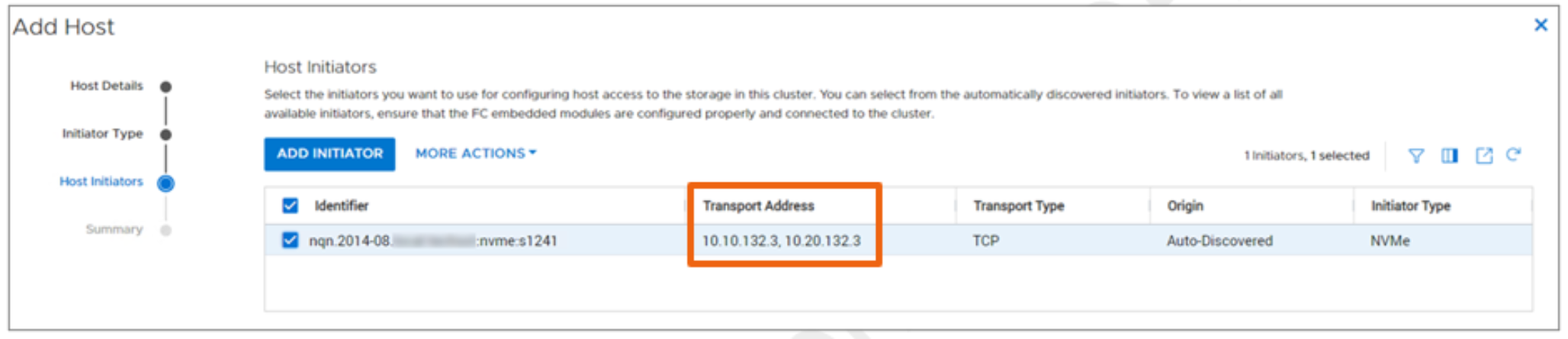

Finally, add the ESXi hosts to PowerStore Manager before provisioning volumes. If everything is configured correctly, the host NQN is associated with both VMkernel (VMK) IP addresses, which are listed in the Transport Address field (see the following figure).

Figure 14. PowerStore Manager: Adding NVMe/TCP host with both VMK IPs
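The check described above amounts to comparing two sets of addresses. As a rough sketch, assuming you have copied the VMkernel IPs from the host and the Transport Address values from PowerStore Manager by hand (the function name and sample addresses are hypothetical):

```python
def missing_transport_addresses(vmk_ips, transport_addresses):
    """Return the VMkernel IPs that are not listed as transport addresses.

    Both arguments are plain lists of IP strings gathered manually; an empty
    result means every storage VMkernel IP is associated with the host NQN.
    """
    return sorted(set(vmk_ips) - set(transport_addresses))


# Hypothetical example: only the storage network 1 address was registered.
print(missing_transport_addresses(
    ["172.16.1.11", "172.16.2.11"],
    ["172.16.1.11"],
))
```

A non-empty result suggests that one storage network's VMkernel adapter, port group, or adapter binding needs to be rechecked.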

Note: If an ESXi host was previously configured for NVMe/FC, set the vmknvme_hostnqn_format parameter back to the hostname option (vmknvme_hostnqn_format=1) before configuring NVMe/TCP. For more information, see the Dell Technologies Host Connectivity Guide.