
PCIe topology

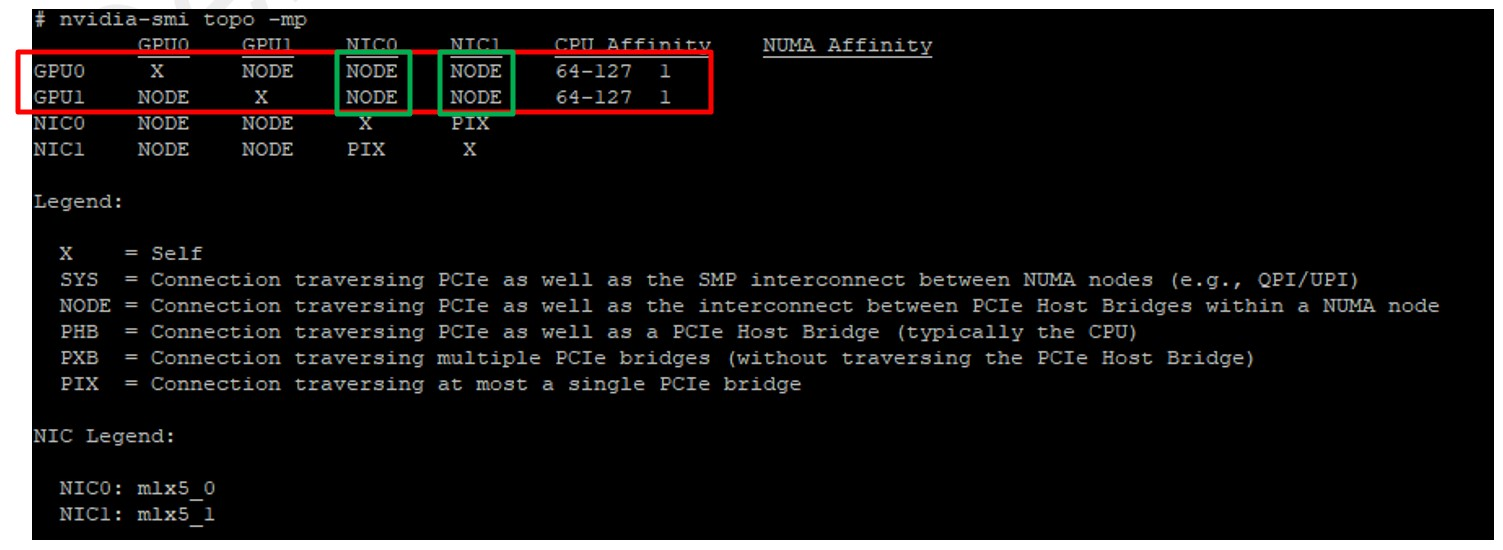

With GPUDirect, the most important things to understand are the PCIe topology, the PCIe root complex, and the physical location of the GPUs, NICs, and storage devices. The key is to limit the number of “hops” between a GPU and the NIC it communicates with, and to group GPUs and NICs by their NUMA node affinity (also called CPU affinity). Linux commands such as lspci or lstopo can retrieve this information; they identify PCIe devices in BDF notation (bus:device.func). However, the nvidia-smi command provided with the NVIDIA CUDA Toolkit package makes this task easier. See Figure 4 for details.
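To make the BDF notation and NUMA affinity lookup concrete, the following is a minimal Python sketch rather than part of the tested configuration. It assumes a Linux host, where each PCIe device exposes a `numa_node` file under `/sys/bus/pci/devices/`. The helper names `parse_bdf`, `format_bdf`, and `numa_node_of` are hypothetical, and the address in the example is a placeholder.

```python
import re
from pathlib import Path

# BDF notation: [domain:]bus:device.function, written in hexadecimal.
# The domain is optional; some tools print it with more than four digits,
# so 4 to 8 hex digits are accepted here (an assumption of this sketch).
_BDF_RE = re.compile(
    r"^(?:(?P<domain>[0-9a-fA-F]{4,8}):)?"
    r"(?P<bus>[0-9a-fA-F]{2}):"
    r"(?P<device>[0-9a-fA-F]{2})\."
    r"(?P<function>[0-7])$"
)


def parse_bdf(text):
    """Split a BDF string into (domain, bus, device, function) integers."""
    match = _BDF_RE.match(text.strip())
    if match is None:
        raise ValueError(f"not a valid BDF address: {text!r}")
    return (
        int(match.group("domain") or "0", 16),
        int(match.group("bus"), 16),
        int(match.group("device"), 16),
        int(match.group("function")),
    )


def format_bdf(domain, bus, device, function):
    """Render a BDF tuple in the lowercase form used for sysfs directory names."""
    return f"{domain:04x}:{bus:02x}:{device:02x}.{function}"


def numa_node_of(bdf, sysfs_root="/sys/bus/pci/devices"):
    """Read the NUMA node of a PCIe device from sysfs.

    Linux reports -1 when the platform exposes no NUMA affinity for the device.
    """
    path = Path(sysfs_root) / format_bdf(*parse_bdf(bdf)) / "numa_node"
    return int(path.read_text().strip())


# Placeholder address for illustration; not taken from the tested servers.
print(parse_bdf("e1:00.0"))
```

On a live system, calling `numa_node_of` with the BDF address of a GPU and of a NIC shows whether the two devices sit on the same NUMA node.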

Figure 4. PCIe topology

Based on the nvidia-smi topo -mp command output (Figure 4), we can determine the following mapping for the R7525s used in this testing.

Table 3. PCIe mapping

| GPU ID | Mellanox card | NUMA affinity |
|--------|---------------|---------------|
| 0      | mlx5_0        | 1             |
| 1      | mlx5_0        | 1             |
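A mapping like this can be derived by choosing, for each GPU, the NIC reached over the fewest PCIe hops. The sketch below illustrates that selection under stated assumptions: it ranks the PCIe link-type labels from the nvidia-smi topo legend (PIX, PXB, PHB, NODE, SYS) from closest to farthest. The function names and the link values in the example are hypothetical and are not read from Figure 4.

```python
# Link-type labels from the `nvidia-smi topo` legend, ordered from the
# closest connection (at most one PCIe bridge) to the farthest (crossing
# the interconnect between NUMA nodes).
LINK_RANK = {"PIX": 0, "PXB": 1, "PHB": 2, "NODE": 3, "SYS": 4}


def closest_nic(links):
    """Return the NIC whose link type to the GPU has the lowest rank.

    `links` maps a NIC name to its link-type label. Ties are broken by
    NIC name so that the result is deterministic.
    """
    if not links:
        raise ValueError("no NICs to choose from")
    return min(sorted(links), key=lambda nic: LINK_RANK[links[nic]])


def map_gpus_to_nics(topology):
    """Map each GPU ID to its closest NIC."""
    return {gpu: closest_nic(links) for gpu, links in topology.items()}


# Hypothetical link types, for illustration only.
example_topology = {
    0: {"mlx5_0": "PHB", "mlx5_1": "SYS"},
    1: {"mlx5_0": "NODE", "mlx5_1": "SYS"},
}
print(map_gpus_to_nics(example_topology))
```

Ranking by label rather than counting bridges keeps the sketch independent of the exact PCIe layout. A SYS link is ranked last because it crosses NUMA nodes, which is the case the grouping by NUMA affinity is meant to avoid.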

Note: You can use the ibdev2netdev command to see which RDMA device names (mlx5_X) map to which network interface names (enpYsZ).

Example:

```
# ibdev2netdev
mlx5_0 port 1 ==> ens6f0 (Up)
mlx5_1 port 1 ==> ens6f1 (Up)
```
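When the mapping is needed in a script, the ibdev2netdev output can be parsed line by line. The following is a minimal Python sketch that assumes every line follows the format shown in the example above. The function name `parse_ibdev2netdev` is hypothetical.

```python
import re

# One line of output looks like: "mlx5_0 port 1 ==> ens6f0 (Up)"
_LINE_RE = re.compile(
    r"^(?P<dev>\S+)\s+port\s+(?P<port>\d+)\s+==>\s+"
    r"(?P<netdev>\S+)\s+\((?P<state>\w+)\)$"
)


def parse_ibdev2netdev(output):
    """Map (RDMA device, port) to (network interface, link state).

    Lines that do not match the expected format are skipped.
    """
    mapping = {}
    for line in output.splitlines():
        match = _LINE_RE.match(line.strip())
        if match is None:
            continue
        key = (match.group("dev"), int(match.group("port")))
        mapping[key] = (match.group("netdev"), match.group("state"))
    return mapping


# Output copied from the example in this section.
sample_output = """\
mlx5_0 port 1 ==> ens6f0 (Up)
mlx5_1 port 1 ==> ens6f1 (Up)
"""

for (dev, port), (netdev, state) in parse_ibdev2netdev(sample_output).items():
    print(f"{dev} port {port} -> {netdev} ({state})")
```

On a live host, the text passed to the parser could come from `subprocess.run(["ibdev2netdev"], capture_output=True, text=True, check=True).stdout`, assuming the command is installed and on the PATH.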