
Channel front-end redundancy


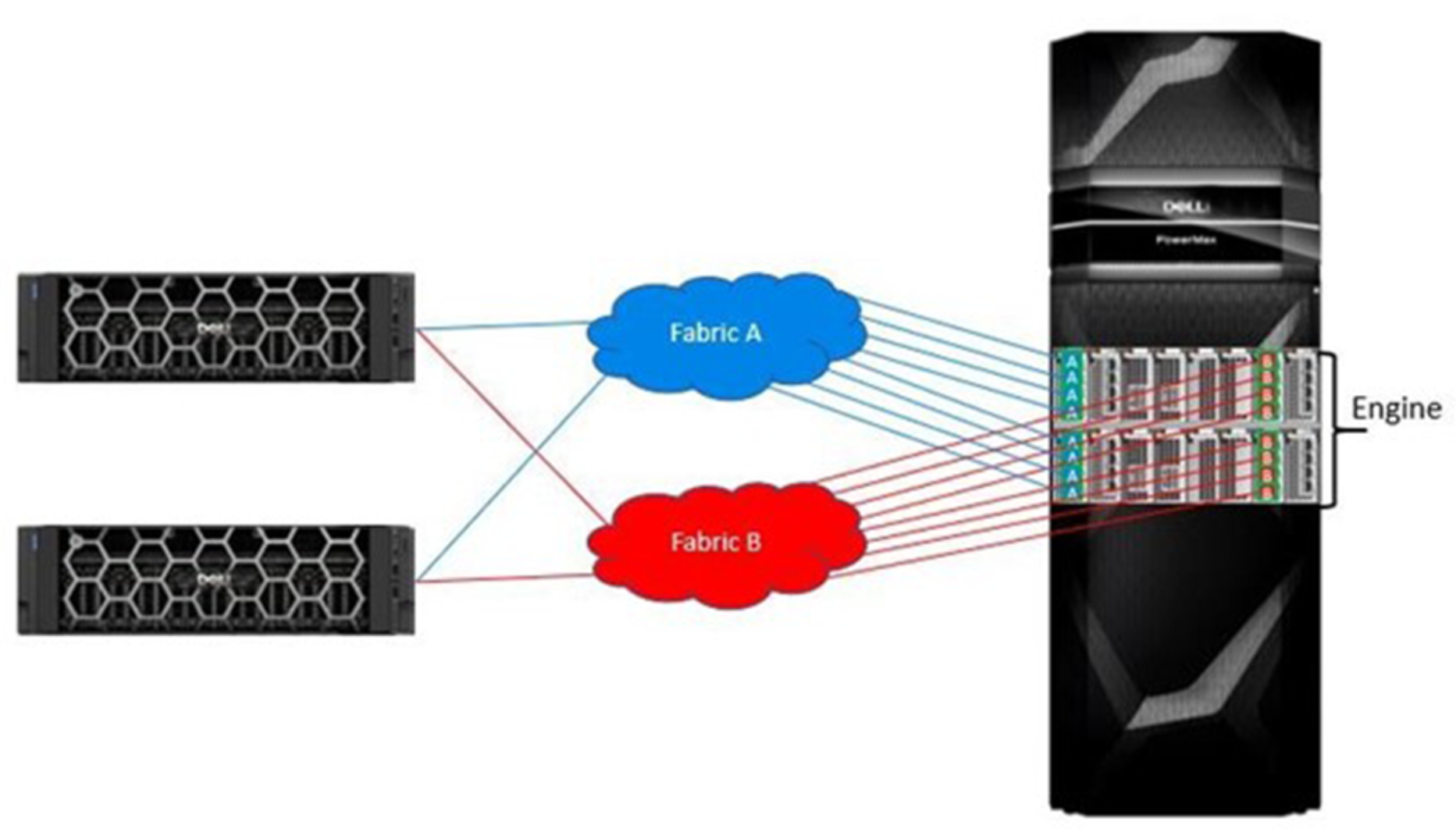

Channel redundancy is provided by configuring multiple connections from the host servers (direct connect) or Fibre Channel switch (SAN connect) to the system. SAN connectivity allows each front-end port on the array to support multiple host attachments, which increases path redundancy and enables storage consolidation across many host platforms. A minimum of two connections per server or SAN to different directors is necessary to provide basic redundancy.

The highest level of protection is delivered by configuring at least two, and ideally four, front-end paths in the port group used for masking, with matching zones to the host. Single-initiator zoning is recommended. Multiple ports from each server should be connected to redundant fabrics.
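The basic redundancy rules above can be expressed as a simple check. The following is a minimal sketch, not a Dell tool: the `Path` record, the fabric labels, and the director and port numbers are all hypothetical and exist only to illustrate the rules (at least two paths, more than one director, more than one fabric).

```python
from typing import NamedTuple


class Path(NamedTuple):
    """One host-to-array path (all field values are illustrative)."""
    fabric: str    # hypothetical fabric label, for example "A" or "B"
    director: int  # hypothetical director number on the array
    port: int      # hypothetical front-end port number


def redundancy_issues(paths: list[Path]) -> list[str]:
    """Return the basic redundancy rules that a set of paths violates."""
    issues = []
    if len(paths) < 2:
        issues.append("fewer than two paths")
    if len({p.director for p in paths}) < 2:
        issues.append("all paths land on one director")
    if len({p.fabric for p in paths}) < 2:
        issues.append("all paths use one fabric")
    return issues


# Two paths, but both on the same fabric and director.
single = [Path("A", 1, 4), Path("A", 1, 5)]
# Two paths spread across both fabrics and both directors.
spread = [Path("A", 1, 4), Path("B", 2, 6)]

print(redundancy_issues(single))
print(redundancy_issues(spread))
```

An empty result means the path set meets the minimum guidance. It does not mean that the configuration reaches the highest level of protection described above.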

On the array, distributing the host paths and fabric connections across the physical array components increases fabric redundancy and spreads the load for performance. The relevant array components include directors, front-end I/O modules, and ports.

The following two examples show the options for selecting the array ports for each fabric path. The fabrics can either share I/O modules or be connected to separate I/O modules. If both redundant fabrics connect to the same I/O module, one fabric should be connected to the high ports and the other fabric should be connected to the low ports.

Both are examples of a pair of servers connected through redundant fabrics to a single-engine PowerMax array with a pair of front-end I/O modules on each director. A multi-engine system has more directors and modules to spread across for redundancy. Each fabric has multiple connections to each director in the PowerMax. If a server loses connection to one fabric, it will have access to the PowerMax through the remaining fabric. Redundancy can be increased further if additional paths are configured from the servers to the fabrics and/or from the fabrics to the PowerMax.

In the first example, each fabric connects to each front-end I/O module. If one of the front-end I/O modules fails, each server still has an equal number of paths remaining to each director and to each of the remaining I/O modules. If a director fails, host connectivity remains available through the other director's front-end I/O modules. Fabric A connects to the two high ports on each I/O module, and Fabric B connects to the two low ports.

Figure 7. Each fabric connected to each I/O module

In the following example, each front-end I/O module is dedicated to one fabric, so a front-end I/O module failure affects only one fabric. As in the previous example, a director failure affects four paths from each fabric.

Figure 8. Each I/O module dedicated to one fabric
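The difference between the two layouts can be seen by counting the array-side paths that survive a failure. The sketch below models the single-engine case described above (two directors, two front-end I/O modules per director) and assumes four ports per module. The director, module, and port indices are hypothetical labels, not actual PowerMax slot or port numbers.

```python
from typing import NamedTuple, Optional


class Port(NamedTuple):
    director: int
    module: int
    port: int
    fabric: str


DIRECTORS = (1, 2)    # hypothetical director labels
MODULES = (0, 1)      # hypothetical I/O module labels per director
PORTS = (0, 1, 2, 3)  # assumed four ports per module; 2 and 3 are "high"


def shared_layout() -> list[Port]:
    """Each fabric on every module: Fabric A on high ports, B on low ports."""
    return [Port(d, m, p, "A" if p >= 2 else "B")
            for d in DIRECTORS for m in MODULES for p in PORTS]


def dedicated_layout() -> list[Port]:
    """Each module dedicated to one fabric: module 0 to A, module 1 to B."""
    return [Port(d, m, p, "A" if m == 0 else "B")
            for d in DIRECTORS for m in MODULES for p in PORTS]


def surviving(layout: list[Port],
              failed_director: Optional[int] = None,
              failed_module: Optional[tuple[int, int]] = None) -> dict[str, int]:
    """Count paths per fabric after a director or (director, module) failure."""
    counts = {"A": 0, "B": 0}
    for port in layout:
        if port.director == failed_director:
            continue
        if (port.director, port.module) == failed_module:
            continue
        counts[port.fabric] += 1
    return counts


for name, layout in (("shared", shared_layout()),
                     ("dedicated", dedicated_layout())):
    print(name, "module failure:", surviving(layout, failed_module=(1, 0)))
    print(name, "director failure:", surviving(layout, failed_director=1))
```

Under these assumptions, a module failure in the shared layout removes two paths from each fabric, while in the dedicated layout it removes four paths from one fabric and none from the other. A director failure removes four paths from each fabric in both layouts.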

FC-NVMe front-end redundancy

NVMe devices are supported with PowerPath 7.0 releases, which provide enhanced multipathing with flaky-path and congestion controls that yield higher IOPS than native operating-system multipathing.

The guidelines outlined for previously supported protocols apply to FC-NVMe connectivity. Redundant fabrics along with a PowerMax array with multiple engines and multiple front-end I/O modules provide the most opportunities for redundancy.

- Redundant connections from each server to each fabric

- Redundant connections from each fabric to each PowerMax director

- If each director has multiple I/O modules, there are two connection options:

  - Connect each fabric to each I/O module

  - Dedicate each I/O module to one fabric

- If the redundant fabrics connect to the same I/O module, one fabric should be connected to the high ports and the other fabric should be connected to the low ports.

  - For example, if Fabric A is connected to port 4 and/or port 5, then Fabric B should be connected to port 6 and/or port 7.
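The high-port and low-port rule for a shared I/O module can be checked mechanically. This is a minimal sketch under two assumptions: a module's ports split evenly into a lower-numbered half and a higher-numbered half, and the port numbers 4 through 7 are taken from the example above. The function names are hypothetical.

```python
def split_low_high(module_ports):
    """Split a module's port numbers into (low half, high half)."""
    ordered = sorted(module_ports)
    half = len(ordered) // 2
    return set(ordered[:half]), set(ordered[half:])


def follows_low_high_rule(module_ports, fabric_ports):
    """True if each fabric stays within one half and no half is shared.

    fabric_ports maps a fabric label to the ports it uses on this module.
    """
    low, high = split_low_high(module_ports)
    halves = []
    for used in fabric_ports.values():
        used = set(used)
        if used and used <= low:
            halves.append("low")
        elif used and used <= high:
            halves.append("high")
        else:
            return False  # fabric straddles both halves or uses no ports
    return len(set(halves)) == len(halves)


module = [4, 5, 6, 7]
print(follows_low_high_rule(module, {"A": [4, 5], "B": [6, 7]}))
print(follows_low_high_rule(module, {"A": [4, 6], "B": [5, 7]}))
```

The first call matches the example above (Fabric A on ports 4 and 5, Fabric B on ports 6 and 7). The second interleaves the fabrics across both halves, which the guideline advises against.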

Dell PowerPath Intelligent Multipathing Software

Dell PowerPath is a family of software products that ensures consistent application availability and performance across I/O paths on physical and virtual platforms.

It provides automated path management and tools that enable you to satisfy aggressive service-level agreements without investing in additional infrastructure. PowerPath includes PowerPath Migration Enabler for non-disruptive data migrations and PowerPath Viewer for monitoring and troubleshooting I/O paths.

Dell PowerPath/VE is compatible with VMware vSphere and Microsoft Hyper-V-based virtual environments. It can be used together with Dell PowerPath to perform the following functions in both physical and virtual environments:

- Standardize Path Management: Optimize I/O paths in physical and virtual environments (PowerPath/VE) and cloud deployments.

- Optimize Load Balancing: Adjust I/O paths to dynamically rebalance your application environment for peak performance.

- Increase Performance: Leverage your investment in physical and virtual environments by increasing headroom and scalability.

- Automate Failover and Recovery: Define failover and recovery rules that route application requests to alternative resources if component failures or user errors occur.

For more information about PowerPath, see the Dell PowerPath Family Product Guide.