
Networking and connectivity


PowerMax FC/NVMeoF/iSCSI connectivity

Each ESXi server in the VMware vSphere environment should have at least two physical HBAs when using FC or FC-NVMe. Each HBA should be connected to at least two different front-end ports on different directors of the PowerMax.

Similarly, when using iSCSI or NVMe/TCP, each ESXi server in the VMware vSphere environment should have at least two physical NICs. Do not use NIC teaming. The NICs should connect to at least two front-end ports on different directors of the PowerMax.
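The redundancy rules above can be expressed as a small planning check. The following is a minimal Python sketch, not a Dell tool: the `FrontEndPort` type, the `dir-1`-style director labels, and the `check_host_connectivity` function are hypothetical. With `per_initiator=True` it applies the FC/FC-NVMe rule (each HBA reaches two ports on different directors); with `per_initiator=False` it applies the iSCSI/NVMe/TCP rule (the host as a whole reaches two ports on different directors).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FrontEndPort:
    director: str  # hypothetical director label, e.g. "dir-1"
    port: int      # front-end port number on that director


def check_host_connectivity(
    plan: dict[str, list[FrontEndPort]], per_initiator: bool = True
) -> list[str]:
    """Return rule violations for one ESXi host; an empty list means none found.

    plan maps each physical HBA or NIC to the array ports it reaches.
    """
    problems: list[str] = []
    # Both FC and iSCSI/NVMe/TCP require at least two physical initiators.
    if len(plan) < 2:
        problems.append(f"host has {len(plan)} initiator(s); at least two are required")

    if per_initiator:
        groups = list(plan.items())
    else:
        # Pool every port the host reaches, regardless of which NIC reaches it.
        groups = [("host", [p for ports in plan.values() for p in ports])]

    for name, ports in groups:
        unique_ports = set(ports)
        directors = {p.director for p in unique_ports}
        if len(unique_ports) < 2:
            problems.append(f"{name}: reaches {len(unique_ports)} front-end port(s); at least two are required")
        if len(directors) < 2:
            problems.append(f"{name}: ports span {len(directors)} director(s); at least two are required")
    return problems


plan = {
    "hba0": [FrontEndPort("dir-1", 4), FrontEndPort("dir-2", 4)],
    "hba1": [FrontEndPort("dir-1", 5), FrontEndPort("dir-2", 5)],
}
print(check_host_connectivity(plan))  # → []
```

A host with a single HBA zoned to two ports on the same director would be reported twice: once for the initiator count and once for the single director.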

The FC examples below omit SAN switches. The diagrams depict the recommended zoning rather than the physical connectivity; however, customers who use direct connectivity would cable their hosts to mirror the examples.

Note: iSCSI connectivity is configured differently from FC zoning. For setup details, see iSCSI Implementation for Dell EMC Storage Arrays Running PowerMaxOS.

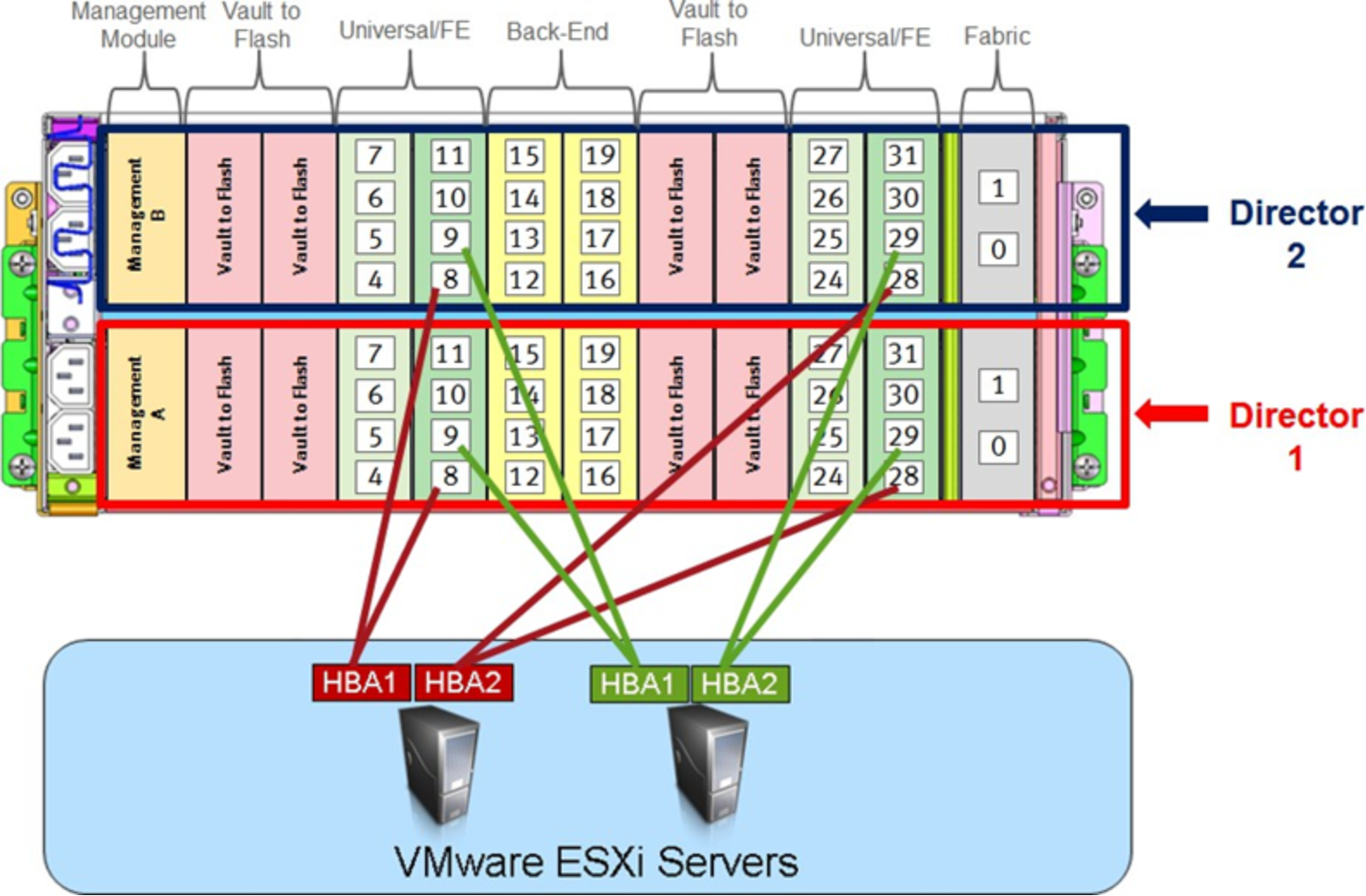

PowerMax arrays

If the PowerMax has only one engine, connect each HBA to both the odd and the even director in that engine. An ESXi host with two HBAs, each connected to two ports, has four paths to the array, as shown in Figure 1. The port numbers in the figure were chosen arbitrarily, and the director emulations are not shown.

Figure 1. Connecting ESXi servers to a single engine PowerMax

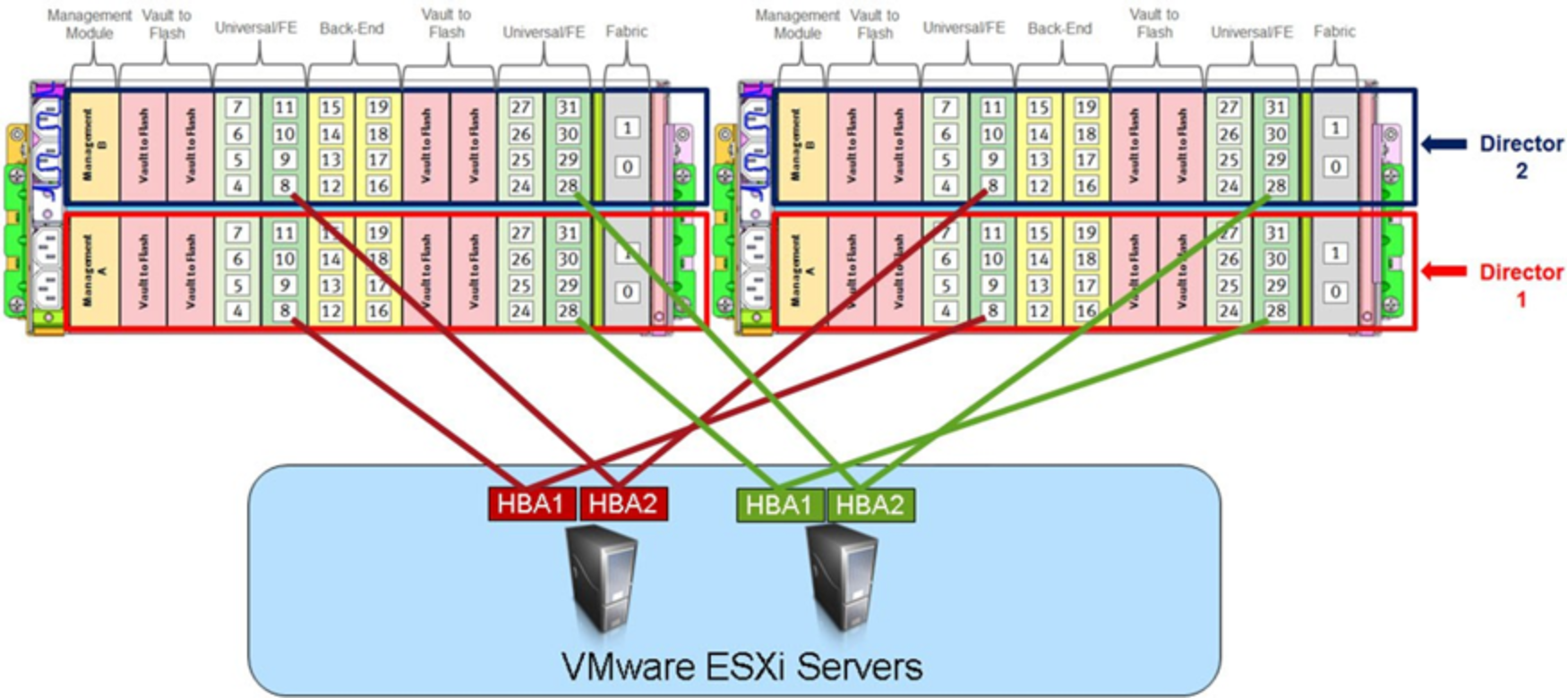
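The path arithmetic for a single-engine array can be sketched in Python: two HBAs, each zoned to one port on the odd director and one on the even director, give 2 × 2 = 4 paths. This is an illustration under stated assumptions: the director numbering (engine n holding directors 2n-1 and 2n), the `dir<N>:port<P>` labels, the reuse of one port number per HBA on both directors, and the helper names are all hypothetical, and `vmhba1`/`vmhba2` are only example ESXi adapter names.

```python
def engine_directors(engine: int) -> tuple[int, int]:
    """Return (odd, even) director numbers for an engine.

    Assumes engine n holds directors 2n-1 and 2n; verify against your array.
    """
    if engine < 1:
        raise ValueError("engine numbers start at 1")
    return 2 * engine - 1, 2 * engine


def single_engine_paths(hba_ports: dict[str, int], engine: int = 1) -> list[tuple[str, str]]:
    """Zone each HBA to one port on the odd director and one on the even director."""
    odd, even = engine_directors(engine)
    paths = []
    for hba, port in hba_ports.items():
        for director in (odd, even):
            # Hypothetical "dir<N>:port<P>" label for the array-side target.
            paths.append((hba, f"dir{director}:port{port}"))
    return paths


paths = single_engine_paths({"vmhba1": 4, "vmhba2": 5})
print(len(paths))  # → 4
for hba, target in paths:
    print(hba, "->", target)
```

Because every HBA reaches both directors, losing either an HBA or a director still leaves the host with two working paths.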

Figure 2 shows the recommended connectivity for a multi-engine PowerMax.

Figure 2. Connecting ESXi servers to a multi-engine PowerMax

Performance

On the PowerMax, front-end ports are not tied to individual CPUs that they must share; instead, every front-end port has access to all CPUs designated for the front-end portion of the director. As a result, a single PowerMax port can handle far more I/O than a comparable port on previous array models. From a performance perspective, two ports per host are sufficient for small-block I/O. For large-block I/O, more ports are recommended to handle the higher throughput in Gb/s.

Note: Dell Technologies recommends using four ports in each port group. When creating a port group in Unisphere for PowerMax, the user receives a warning if the group contains fewer than four ports.
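The sizing reasoning above can be approximated with a rough Python sketch: two ports per host is the floor, and throughput-heavy (typically large-block) hosts add ports until their peak Gb/s fits. The required bandwidth, port speed, and 50% utilization headroom are hypothetical inputs, not Dell guidance, and `port_group_warnings` only imitates the fewer-than-four-ports check; it does not call Unisphere for PowerMax.

```python
import math

MIN_PORTS_PER_HOST = 2           # small-block baseline from the text
RECOMMENDED_PORTS_PER_GROUP = 4  # Dell recommendation in the note above


def estimate_host_ports(required_gbps: float, port_speed_gbps: float,
                        target_utilization: float = 0.5) -> int:
    """Rough port count for a host's peak throughput.

    target_utilization is a hypothetical headroom factor, not Dell guidance.
    """
    if port_speed_gbps <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("port speed must be positive and utilization in (0, 1]")
    needed = math.ceil(required_gbps / (port_speed_gbps * target_utilization))
    # Never go below the two-port redundancy and small-block baseline.
    return max(MIN_PORTS_PER_HOST, needed)


def port_group_warnings(port_group: list[str]) -> list[str]:
    """Flag port groups with fewer distinct ports than recommended."""
    count = len(set(port_group))
    if count < RECOMMENDED_PORTS_PER_GROUP:
        return [f"port group has {count} port(s); {RECOMMENDED_PORTS_PER_GROUP} are recommended"]
    return []


print(estimate_host_ports(5, 32))   # low-throughput host → 2
print(estimate_host_ports(60, 32))  # high-throughput host → 4
print(port_group_warnings(["p1", "p2"]))
```

Raising the headroom factor or the port speed lowers the estimate, but the result never drops below two ports.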