ECS node failure

ECS continuously performs health checks on nodes. To maintain system availability, the ECS distributed architecture allows any node to accept client requests. If one node is down, a client can be redirected, either manually or automatically (for example, using DNS or a load balancer), to another node that can service the request.
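The redirection described above can be illustrated with a minimal client-side sketch. This is not ECS code: `NodeUnavailable`, `send_with_failover`, `demo_send`, and the node names are hypothetical, and a real deployment would more likely rely on DNS or a load balancer than on client logic.

```python
from typing import Callable, Optional, Sequence


class NodeUnavailable(Exception):
    """Hypothetical error raised when a node does not respond."""


def send_with_failover(nodes: Sequence[str], send: Callable[[str], str]) -> str:
    """Try each node in order and return the first successful response."""
    last_error: Optional[Exception] = None
    for node in nodes:
        try:
            return send(node)
        except NodeUnavailable as err:
            last_error = err  # this node is down; try the next one
    raise NodeUnavailable(f"no node could service the request: {last_error}")


def demo_send(node: str) -> str:
    """Stand-in transport for illustration: node1 is down, all others respond."""
    if node == "node1":
        raise NodeUnavailable(node)
    return f"served by {node}"


# node1 is skipped and the request is serviced by the next node in the list.
result = send_with_failover(["node1", "node2", "node3"], demo_send)
```

Because any node can accept the request, the order of the node list only affects which node is tried first.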

To avoid triggering reconstruction for spurious events, ECS does not start a full reconstruction operation until a node has failed a set number of sequential health checks. The default threshold is 60 minutes. If an I/O request arrives for a node that is not responding, but before a full reconstruction is triggered, the following actions occur:

- If the request is for a partition table hosted on a node that is not responding, the requested partition table’s ownership is redistributed across the remaining nodes in the site. Once this redistribution is complete, the request is completed successfully.

- If the request is for data that exists on disks from the unresponsive node, reconstruction occurs through the use of either the remaining erasure coded data and parity fragments or the replica copies. The chunk information is then updated. Once the fragments have been re-created, any outstanding or new read requests use the updated chunk information to request the data fragment or fragments and service the read request.

After a node fails a set number of sequential health checks (the default is 60 minutes), the node is deemed to be down. This action automatically triggers a re-creation operation of the partition tables and the chunk fragments on the disks owned by the failed node.
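As a rough illustration of the threshold described above, the following sketch marks a node as down only after its health checks have failed continuously for 60 minutes. The `NodeHealth` class, its `record` method, and the use of plain timestamps in seconds are assumptions made for illustration; the actual health check interval and internal mechanism are not described here.

```python
from dataclasses import dataclass
from typing import Optional

DOWN_AFTER_SECONDS = 60 * 60  # 60-minute default stated in the text


@dataclass
class NodeHealth:
    """Hypothetical tracker for one node's streak of failed health checks."""

    failing_since: Optional[float] = None  # start time of the current failure streak

    def record(self, now: float, healthy: bool) -> bool:
        """Record one health check result; return True once the node is deemed down."""
        if healthy:
            self.failing_since = None  # any successful check resets the streak
            return False
        if self.failing_since is None:
            self.failing_since = now
        return now - self.failing_since >= DOWN_AFTER_SECONDS
```

A single successful check resets the streak, which is what keeps short, spurious outages from triggering a full reconstruction.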

As part of the re-creation operation, a notification is sent to chunk manager, which starts a parallel recovery of all chunk fragments stored on the failed node’s disks. This recovery can include chunks containing object data, custom client-provided metadata, and ECS metadata. If the failed node comes back online, an updated status is sent to chunk manager, and any incomplete recovery operations are canceled. For more details about chunk fragment recovery, see Disk failure.

Besides monitoring hardware, ECS also monitors all services and data tables on each node.

- If there is a table failure but the node is still up, ECS automatically tries to reinitialize the table on the same node.

- If ECS detects a service failure, it first tries to restart the service.

If these efforts fail, ECS redistributes ownership of the tables owned by the down node or service across all remaining nodes in the VDC/site. The ownership changes involve updating the vnest information and re-creating the memory tables owned by the failed node. The vnest information is updated on the remaining nodes with new partition table owner information.

The memory tables from the failed node are re-created by replaying journal entries that were written after the latest successful journal checkpoint.
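The replay step can be sketched as follows. The journal entry layout (sequence number, key, value, with `None` marking a delete) and the function name `rebuild_memory_table` are assumptions for illustration only; the actual ECS journal format is not described in this section.

```python
from typing import Dict, Iterable, Optional, Tuple

# Hypothetical entry layout: (sequence number, key, value); None marks a delete.
JournalEntry = Tuple[int, str, Optional[str]]


def rebuild_memory_table(
    checkpoint: Dict[str, str],
    checkpoint_seq: int,
    journal: Iterable[JournalEntry],
) -> Dict[str, str]:
    """Start from the checkpointed state and replay only the later journal entries."""
    table = dict(checkpoint)
    for seq, key, value in sorted(journal, key=lambda entry: entry[0]):
        if seq <= checkpoint_seq:
            continue  # already reflected in the checkpoint
        if value is None:
            table.pop(key, None)
        else:
            table[key] = value
    return table
```

Entries at or before the checkpoint are skipped, so the amount of replay work depends on how much was written since the last successful checkpoint.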

Multiple node failures

In some scenarios, multiple nodes within a site can fail, either one by one or concurrently:

- One-by-one failure: One node fails, all recovery operations complete, and then a second node fails. This sequence can repeat multiple times and is analogous to a VDC going from something like 4 nodes to 3 nodes to 2 nodes to 1 node. This process requires that the remaining nodes have sufficient space to complete recovery operations.

- Concurrent failure: The nodes fail at almost the same time, or a node fails before recovery from a previously failed node is complete.
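The distinction between the two failure patterns can be expressed as a small classifier. This is only a sketch under assumed inputs: each event is a hypothetical pair of failure time and recovery completion time (or `None` if recovery has not finished), and the function name is illustrative.

```python
from typing import List, Optional, Tuple

# Hypothetical event: (failure_time, recovery_complete_time or None if unfinished).
FailureEvent = Tuple[float, Optional[float]]


def classify_failures(events: List[FailureEvent]) -> List[str]:
    """Label each failure, assuming events are sorted by failure time."""
    labels = []
    for i, (failed_at, _) in enumerate(events):
        # A failure is concurrent if any earlier failure was still recovering.
        overlapping = any(
            done is None or done > failed_at for _, done in events[:i]
        )
        labels.append("concurrent" if overlapping else "one-by-one")
    return labels
```

For example, a node that fails while an earlier node's recovery is still running is labeled concurrent, even if the two failures are hours apart.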

The impact of the failure depends on which nodes go down. The following tables describe the best-case scenarios for failure tolerances of a single site based on the erasure coding scheme and number of nodes in a VDC.

Table 8. Best-case scenario of multiple node failures within a site based on the default 12+4 erasure coding scheme

| Number of nodes in VDC at creation | Total number of failed nodes since VDC creation | Status after concurrent failures | Status after most recent one-by-one failure | Current VDC state after previous one-by-one failures |
|---|---|---|---|---|
| 5 | 1 | | | 5-node VDC with 1 node failing |
| 5 | 2 | | | VDC previously went from 5 to 4 nodes; now 1 additional node is failing |
| 5 | 3–4 | | | VDC previously went from 5 to 4 to 3 nodes or 5 to 4 to 3 to 2 nodes; now 1 additional node is failing |
| 6 | 1 | | | 6-node VDC with 1 node failing |
| 6 | 2 | | | VDC previously went from 6 to 5 nodes; now 1 additional node is failing |
| 6 | 3 | | | VDC previously went from 6 to 5 to 4 nodes; now 1 additional node is failing |
| 6 | 4–5 | | | VDC previously went from 6 to 5 to 4 to 3 nodes or 6 to 5 to 4 to 3 to 2 nodes; now 1 additional node is failing |
| 8 | 1–2 | | | 8-node VDC or VDC that went from 8 to 7 nodes; now 1 node failing |
| 8 | 3–4 | | | VDC previously went from 8 to 7 to 6 nodes or 8 to 7 to 6 to 5 nodes; now 1 additional node is failing |
| 8 | 5 | | | VDC previously went from 8 to 7 to 6 to 5 to 4 nodes; now 1 additional node is failing |
| 8 | 6–7 | | | VDC previously went from 8 to 7 to 6 to 5 to 4 to 3 nodes or 8 to 7 to 6 to 5 to 4 to 3 to 2 nodes; now 1 additional node is failing |

| Number of nodes in VDC at creation | Total number of failed nodes since VDC creation | Status after concurrent failures | Status after most recent one-by-one failure | Current VDC state after previous one-by-one failures |
|---|---|---|---|---|
| 6 | 1 | | | 6-node VDC with 1 node failing |
| 6 | 2 | | | VDC previously went from 6 to 5 nodes; now 1 additional node is failing |
| 6 | 3 | | | VDC previously went from 6 to 5 to 4 nodes; now 1 additional node is failing |
| 6 | 4–5 | | | VDC previously went from 6 to 5 to 4 to 3 nodes or 6 to 5 to 4 to 3 to 2 nodes; now 1 additional node is failing |
| 8 | 1 | | | 8-node VDC with 1 node failing |
| 8 | 2 | | | VDC previously went from 8 to 7 nodes; now 1 additional node is failing |
| 8 | 3–5 | | | VDC previously went from 8 to 7 to 6 nodes or 8 to 7 to 6 to 5 nodes or 8 to 7 to 6 to 5 to 4 nodes; now 1 additional node is failing |
| 8 | 6–7 | | | VDC previously went from 8 to 7 to 6 to 5 to 4 to 3 nodes or 8 to 7 to 6 to 5 to 4 to 3 to 2 nodes; now 1 additional node is failing |
| 12 | 1–2 | | | 12-node VDC or 12-node VDC that went from 12 to 11 nodes; now 1 additional node is failing |
| 12 | 3–6 | | | VDC previously went from 12 to 11 to 10 nodes or 12 to 11 to 10 to 9 nodes or 12 to 11 to 10 to 9 to 8 nodes or 12 to 11 to 10 to 9 to 8 to 7 nodes; now 1 additional node is failing |
| 12 | 7–9 | | | VDC previously went from 12 to 11 to 10 to 9 to 8 to 7 to 6 nodes or 12 to 11 to 10 to 9 to 8 to 7 to 6 to 5 nodes or 12 to 11 to 10 to 9 to 8 to 7 to 6 to 5 to 4 nodes; now 1 additional node is failing |
| 12 | 10–11 | | | VDC previously went from 12 to 11 to 10 to 9 to 8 to 7 to 6 to 5 to 4 to 3 nodes or 12 to 11 to 10 to 9 to 8 to 7 to 6 to 5 to 4 to 3 to 2 nodes; now 1 additional node is failing |

The following basic rules determine what operations fail in a single site with multiple node failures:

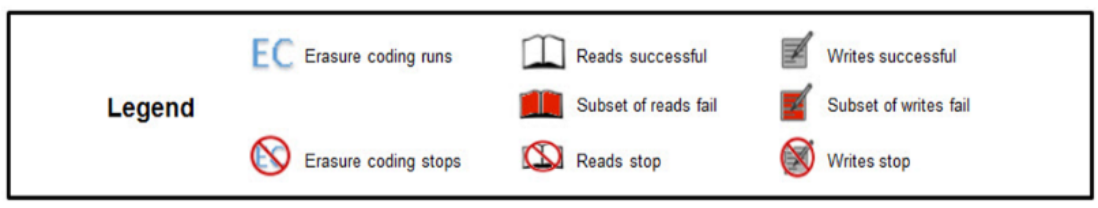

- If three or more nodes fail concurrently, some reads and writes will fail because all three replica copies of the associated triple-mirrored metadata chunks may be lost.

- Writes require a minimum of three nodes.

- Erasure coding will stop and erasure coded chunks will be converted to triple mirror protection if the number of nodes is fewer than the minimum required for each erasure coding scheme. Because the default erasure coding scheme of 12+4 requires 4 nodes, erasure coding will stop if there are fewer than 4 nodes. For cold storage erasure coding of 10+2, erasure coding will stop if there are fewer than 6 nodes.

- If the node count goes below the minimum required for the erasure coding scheme, erasure coded chunks will be converted to triple mirror protection. As an example, in a VDC with default erasure coding and 4 nodes, after a node failure, the following events occur:

  - 4 fragments are lost.

  - Missing fragments are rebuilt.

  - Chunk creates 3 replica copies, one on each node.

  - EC copy is deleted.

- One-by-one failures act like single-node failures. As an example, if you lose two nodes one by one, each failure involves recovering data only from that single node outage.

As an example, with 6 nodes and default erasure coding:

- First failure: Each of the 6 nodes has up to 3 fragments (16 fragments/6 nodes, rounded up). The missing 3 fragments are re-created on the remaining nodes. After recovery is complete, the VDC ends up with 5 nodes.

- Second failure: Each of the 5 nodes has up to 4 fragments (16 fragments/5 nodes, rounded up). The missing 4 fragments are re-created on the remaining nodes. After recovery is complete, the VDC ends up with 4 nodes.

- Third failure: Each of the 4 nodes has up to 4 fragments (16 fragments/4 nodes). The missing 4 fragments are re-created on the remaining nodes. After recovery is complete, the VDC ends up with 3 nodes. Because this number is below the minimum for erasure coding, the erasure coded chunk is replaced by three replica copies distributed across the remaining nodes.

- Fourth failure: Each of the three nodes has one replica copy. The missing replica copy is re-created on one of the remaining nodes. After recovery is complete, the VDC ends up with 2 nodes.

- Fifth failure: There are three replica copies, two on one node and one on the other node. The missing replica copy or copies are re-created on the remaining node. After recovery is complete, the VDC ends up with 1 node.
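The arithmetic in the one-by-one example can be reproduced with a short sketch. The function names are illustrative, and the minimum node counts are taken from the rules above (4 nodes for 12+4, 6 nodes for 10+2) and passed in as parameters rather than derived.

```python
import math
from typing import List, Optional, Tuple


def max_fragments_per_node(total_fragments: int, nodes: int) -> int:
    """Upper bound on fragments of one chunk held by a single node."""
    return math.ceil(total_fragments / nodes)


def protection_after_failures(
    start_nodes: int, data: int = 12, parity: int = 4, min_nodes: int = 4
) -> List[Tuple[int, str, Optional[int]]]:
    """Return (nodes, protection, fragments per node) as nodes fail one by one."""
    total = data + parity
    states: List[Tuple[int, str, Optional[int]]] = []
    for nodes in range(start_nodes, 0, -1):
        if nodes >= min_nodes:
            per_node = max_fragments_per_node(total, nodes)
            states.append((nodes, "erasure coded", per_node))
        else:
            # Below the scheme's minimum, chunks use triple mirror protection.
            states.append((nodes, "triple mirror", None))
    return states


states = protection_after_failures(6)
```

With 6 starting nodes and the default 12+4 scheme, the first three states report 3, 4, and 4 fragments per node, and the state at 3 nodes switches to triple mirror protection, matching the walkthrough above.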