Test environment

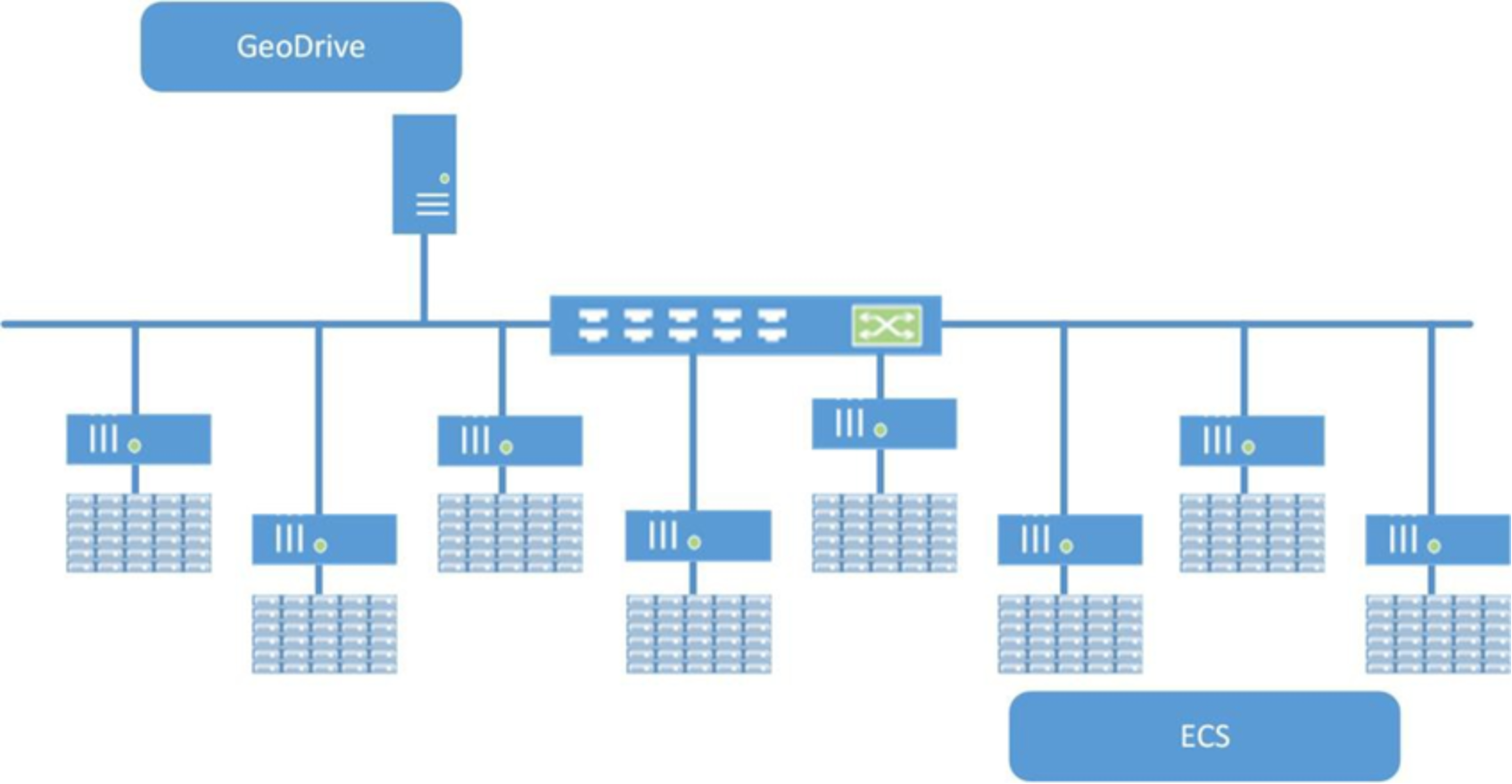

Table 2 describes the specifications of the hardware and software used for this performance study, and Figure 10 illustrates the hardware and network connectivity setup.

Table 2. Hardware and software used for testing

| Hardware | Specifications | Software |
| --- | --- | --- |
| Server | CPU: 2x Intel® Xeon® E5-2650 v3 processors (2.3 GHz, 10 cores, 10x 256KB L2 and 25MB L3 cache); Memory: 256 GB; Disks: 12 600GB 10K RPM SAS drives; 4 disks: single virtual drive, RAID 0, NTFS, used as the GeoDrive local cache drive; 1 disk: RAID 0, where all datasets were stored | Windows Server 2012 R2 Standard; CIFS-ECS version 1.2 (x64); Python 3.5.1 |
| ECS Appliance | U2000 Gen 2 Appliance; 8 nodes, each with dual six-core 2.4 GHz Intel® Xeon® (E5-2620) processors; Memory: 64 GB; Disks: each node has direct access to 30 8TB 7200 RPM SAS drives | ECS v3.1; single-site VDC with a single bucket; pre-populated with a minimum of 1.72 million objects |
| Network | 10GbE | |
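As a rough illustration of the scale implied by Table 2, the raw disk figures can be totaled with a few lines of Python. This is only a sketch: the variable and function names are hypothetical, decimal units (1 TB = 1000 GB) are assumed, and the results are raw capacities that ignore file system overhead and any ECS data protection overhead.

```python
# Disk counts and sizes are taken from Table 2.
# All names below are illustrative; decimal units are an assumption.
SERVER_DISKS = 12          # 600GB 10K RPM SAS drives in the GeoDrive server
SERVER_DISK_GB = 600
CACHE_DISKS = 4            # drives in the RAID 0 local cache volume
ECS_NODES = 8
DISKS_PER_NODE = 30
ECS_DISK_TB = 8


def raid0_capacity_gb(disks, disk_gb):
    """RAID 0 stripes data with no parity, so capacity is the sum of members."""
    return disks * disk_gb


cache_gb = raid0_capacity_gb(CACHE_DISKS, SERVER_DISK_GB)
server_raw_gb = SERVER_DISKS * SERVER_DISK_GB
ecs_drive_count = ECS_NODES * DISKS_PER_NODE
ecs_raw_tb = ecs_drive_count * ECS_DISK_TB

print("GeoDrive cache volume (raw): {} GB".format(cache_gb))
print("Server disks (raw): {} GB".format(server_raw_gb))
print("ECS drives: {}, raw capacity: {} TB".format(ecs_drive_count, ecs_raw_tb))
```

Under these assumptions the cache volume works out to 2400 GB raw and the ECS appliance to 240 drives totaling 1920 TB raw.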

The ECS Appliance was pre-populated with at least 1.72 million objects. One GeoDrive drive was mapped to a single site, a single namespace, and a single bucket. The local disk cache for the GeoDrive drive was a RAID 0 virtual drive built from four 600GB SAS drives (10K RPM) and formatted with NTFS. The IP addresses of all ECS nodes were specified as the hosts, and GeoDrive was responsible for load balancing across the nodes. GeoDrive was set up to connect to ECS over HTTP. The GeoDrive server used one 10GbE port as its uplink to the 10GbE switch. ECS had two 10GbE uplinks to the switch, one per ECS top-of-rack 10GbE switch.
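The uplink counts described above put an upper bound on end-to-end throughput, which can be sketched in Python as follows. The names are hypothetical, the calculation uses the nominal 10 Gb/s line rate with decimal megabytes, and it ignores Ethernet, TCP, and HTTP overhead, so it is a theoretical ceiling rather than an expected result.

```python
# Uplink counts come from the setup description; names are illustrative.
LINK_GBPS = 10         # nominal line rate of one 10GbE port
GEODRIVE_UPLINKS = 1   # GeoDrive server to the 10GbE switch
ECS_UPLINKS = 2        # one per ECS top-of-rack switch


def line_rate_mb_per_s(gbps):
    """Convert gigabits per second to decimal megabytes per second."""
    return gbps * 1000 / 8


client_side = line_rate_mb_per_s(LINK_GBPS * GEODRIVE_UPLINKS)
ecs_side = line_rate_mb_per_s(LINK_GBPS * ECS_UPLINKS)

# Traffic must cross both sides, so the slower side sets the ceiling.
ceiling = min(client_side, ecs_side)

print("GeoDrive server side: {:.0f} MB/s".format(client_side))
print("ECS side: {:.0f} MB/s".format(ecs_side))
print("Theoretical ceiling: {:.0f} MB/s".format(ceiling))
```

With these inputs the single GeoDrive server uplink is the narrower side, giving a nominal ceiling of 1250 MB/s.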

Figure 10. 10GbE test network topology