
Modified data flow using a server-based HiL Rig


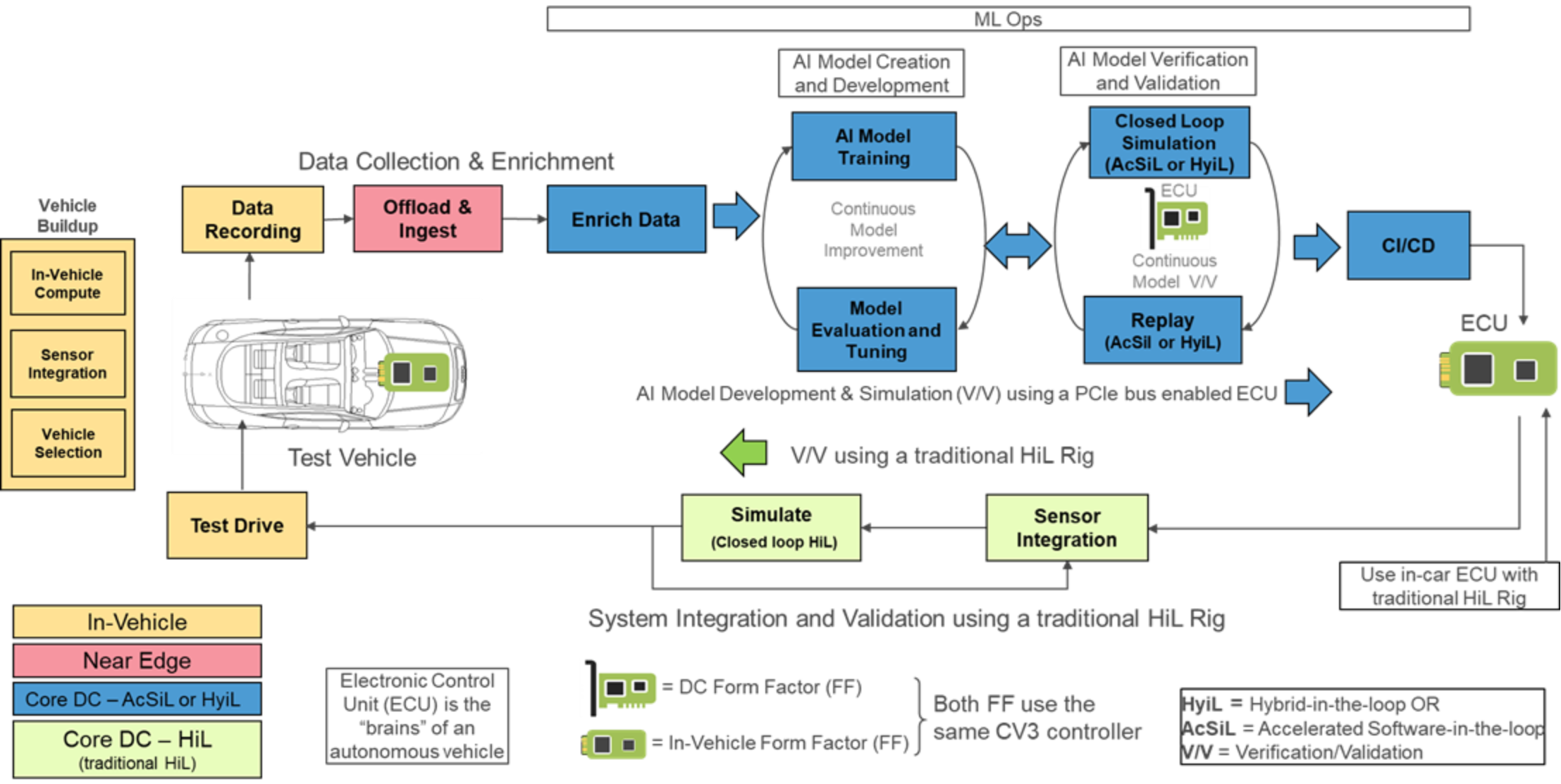

Figure 2 illustrates a typical end-to-end AI automotive pipeline in which the forward path is used exclusively for AI model development and software V/V. The following figure also shows a typical automotive pipeline, slightly modified:

Figure 7. Modified data flow using a server-based HiL Rig and the Ambarella CV3 controller

In this case, the forward path includes one or more PCIe bus-enabled ECUs inside a server-based HiL Rig. V/V of the AI-developed models can now be performed by transferring all information through the PCIe bus to the ECU controller. Because the ECU is PCIe-enabled, it can run at software speeds, which negates the need for traditional SiL development and allows direct access to the ECU controller.

The return path in this figure is also different. Because the AI model V/V has already been completed in the forward path, the return path must use the in-vehicle ECU form factor with the PCIe bus disabled and the traditional sensor input paths enabled. This method ensures that the AI models respond as they did on the PCIe bus-enabled paths. This new architecture allows for cheaper and easier data center scaling while dramatically reducing development cycle times.
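The requirement that the models respond identically on both paths can be pictured as a frame-by-frame comparison of recorded outputs. The following is a minimal sketch only. It assumes that model outputs from the PCIe-enabled forward path and from the sensor-input return path have each been recorded as one list of floating-point values per frame. The function name `find_mismatches`, the tolerance value, and the sample data are hypothetical and are not part of DARA or any Ambarella tooling.

```python
from typing import List, Sequence


def find_mismatches(
    pcie_outputs: Sequence[Sequence[float]],
    sensor_outputs: Sequence[Sequence[float]],
    tolerance: float = 1e-6,
) -> List[int]:
    """Return the indices of frames whose outputs differ by more than tolerance."""
    if len(pcie_outputs) != len(sensor_outputs):
        raise ValueError("both runs must cover the same number of frames")
    mismatched = []
    for index, (a, b) in enumerate(zip(pcie_outputs, sensor_outputs)):
        # A frame mismatches if the vectors differ in length or in any element.
        if len(a) != len(b) or any(abs(x - y) > tolerance for x, y in zip(a, b)):
            mismatched.append(index)
    return mismatched


# Hypothetical recorded outputs: three frames, two values per frame.
forward_path = [[0.10, 0.90], [0.75, 0.25], [0.50, 0.50]]
return_path = [[0.10, 0.90], [0.70, 0.30], [0.50, 0.50]]

print(find_mismatches(forward_path, return_path))  # [1]
```

An empty result would indicate that, for the recorded frames and the chosen tolerance, the two paths produced matching outputs.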

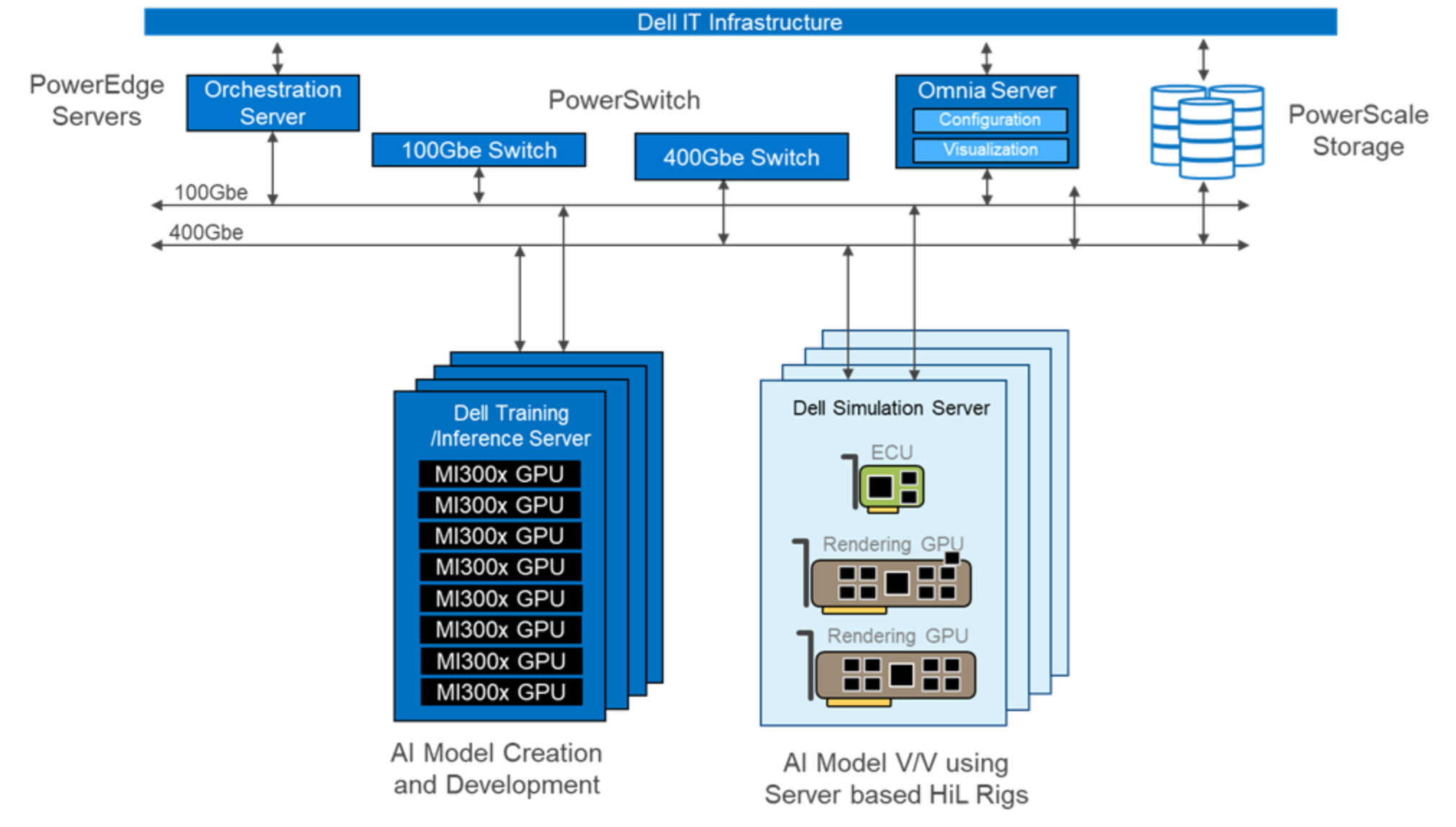

The following figure is an architectural block diagram of DARA using server-based HiL Rigs:

Figure 8. Architectural block diagram of DARA using a server-based HiL Rig

The Training and Inference server is the eight-way PowerEdge XE9680 GPU server platform using AMD’s MI300x GPUs. The simulation servers act as server-based HiL Rigs. The architecture is simplified when the HiL Rig functionality is contained in a standard PowerEdge server. Depending on which PowerEdge server with AMD GPUs is used, several combinations of ECU PCIe controller cards and GPU rendering cards are possible. In this figure, three PCIe cards are shown: one Ambarella CV3 ECU controller card and two GPU cards used for rendering. (This figure assumes that the PowerEdge R7625 server with AMD GPUs has three PCIe slots.)
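The possible splits between ECU controller cards and GPU rendering cards for a given slot count can be enumerated with a few lines of code. This is a rough sketch that assumes every card occupies exactly one slot, that all slots are filled, and that at least one card of each type is required. It ignores slot width, power, and cooling limits, which a real configuration must respect. The function name `card_combinations` is hypothetical.

```python
from typing import List, Tuple


def card_combinations(
    total_slots: int, min_ecu: int = 1, min_gpu: int = 1
) -> List[Tuple[int, int]]:
    """Return (ecu_cards, gpu_cards) pairs that exactly fill total_slots."""
    combos = []
    # Leave at least min_gpu slots free for rendering cards.
    for ecu in range(min_ecu, total_slots - min_gpu + 1):
        combos.append((ecu, total_slots - ecu))
    return combos


# Three slots, as assumed in the figure.
print(card_combinations(3))  # [(1, 2), (2, 1)]
```

The pair `(1, 2)` corresponds to the layout in the figure: one ECU controller card and two GPU rendering cards.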

The other components are necessary for high-speed network, storage, and platform manageability. The following table lists the basic components and a brief description of the DARA architecture.

Table 4. Summary of the DARA architectural components

| Component | Hardware | Description |
| --- | --- | --- |
| Orchestration server | Dell PowerEdge R660 | Used for coordinating and automating various tasks, processes, and workflows across the architecture. |
| 100 GbE switch | Dell PowerSwitch Z9432 | Network for testing, troubleshooting, deploying operating system patches, operating system and driver updates, security assessments, and other management tasks. |
| 400 Gb PowerSwitch | Dell PowerSwitch Z9664 | Forms the backbone that connects all high-speed components for high-bandwidth, low-latency communication. |
| Control PC or Omnia server | Dell PowerEdge R660 | Used to manage and monitor network devices and switches, and for server management, system maintenance, remote access, virtualization management, backup, disaster recovery, monitoring, alerts, and visualization using Grafana panels to view telemetry data. |
| Dell training and inference servers | PowerEdge XE9680 server with AMD MI300x GPUs | The PowerEdge XE9680 server with 8 AMD MI300x GPUs serves as the primary compute engine for AI model creation and development. |
| Dell simulation servers | PowerEdge R7625 server with integrated NVIDIA L40S GPU, Ambarella CV3 | The Dell simulation servers act as the server-based HiL Rig. The PowerEdge R7625 is a 2U AMD server. NVIDIA L40S GPUs for rendering and Ambarella’s CV3 controller are integrated into the server as PCIe plug-in cards. |
| PowerScale storage | Dell PowerScale F710, H700, or H7000 | Depending on the use case, Dell PowerScale F710 all-flash storage can be used for training and Dell PowerScale H700 or Dell PowerScale H7000 for large simulation environments. |

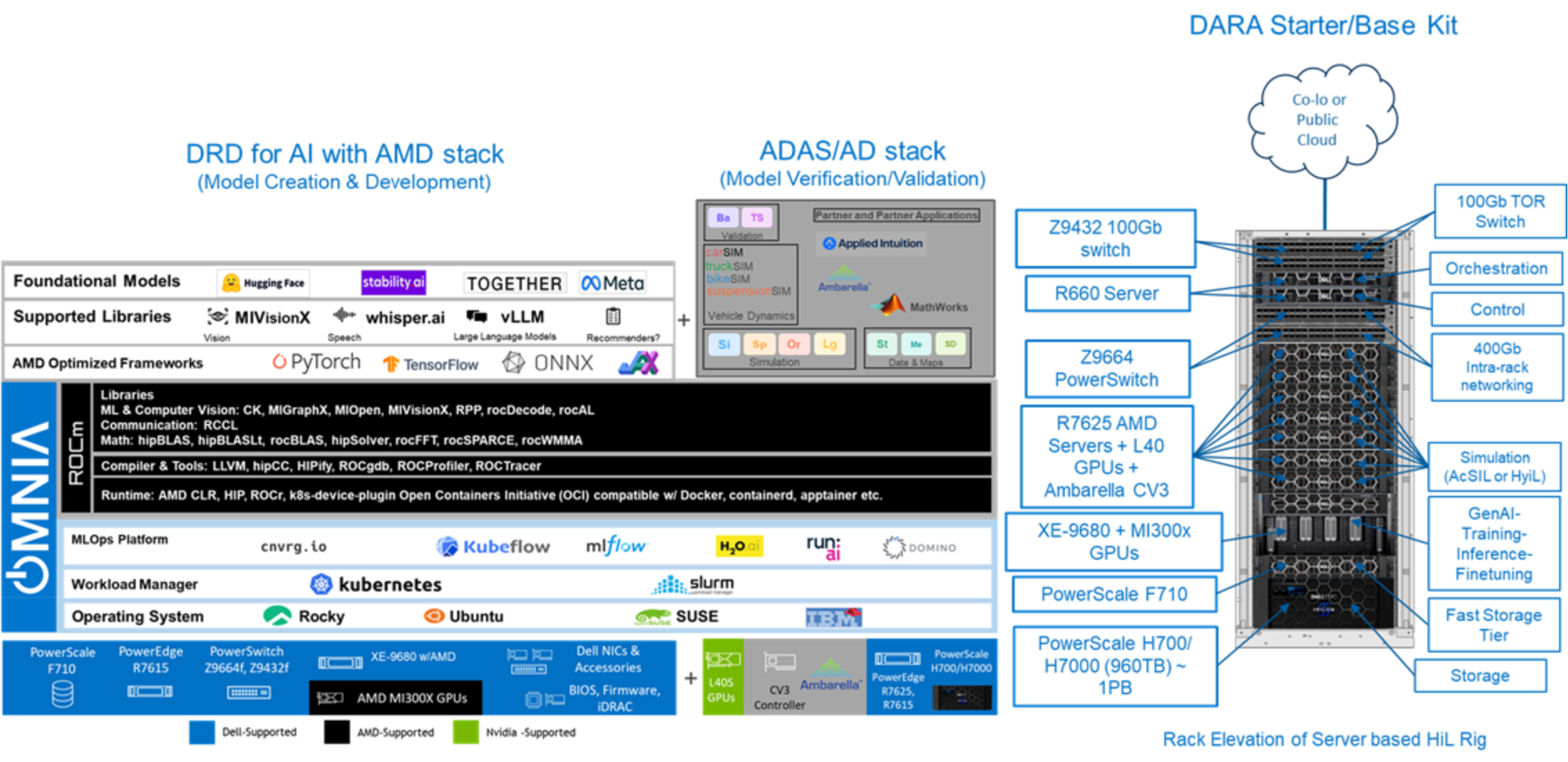

The following figure shows the full DARA software stack and rack elevation that includes the server-based HiL Rig.

Figure 9. The DARA starter kit with the full software stack and rack elevation