
Solution sizing guidance


DL workloads vary significantly in their compute, memory, disk, and I/O demands, often by orders of magnitude. Sizing guidance for the number of GPUs and the configuration of F900 nodes can therefore be given only when these resource requirements are known in advance. That said, it is useful to have a few data points on the ratio of GPUs to PowerScale F900 nodes for common image classification benchmarks.

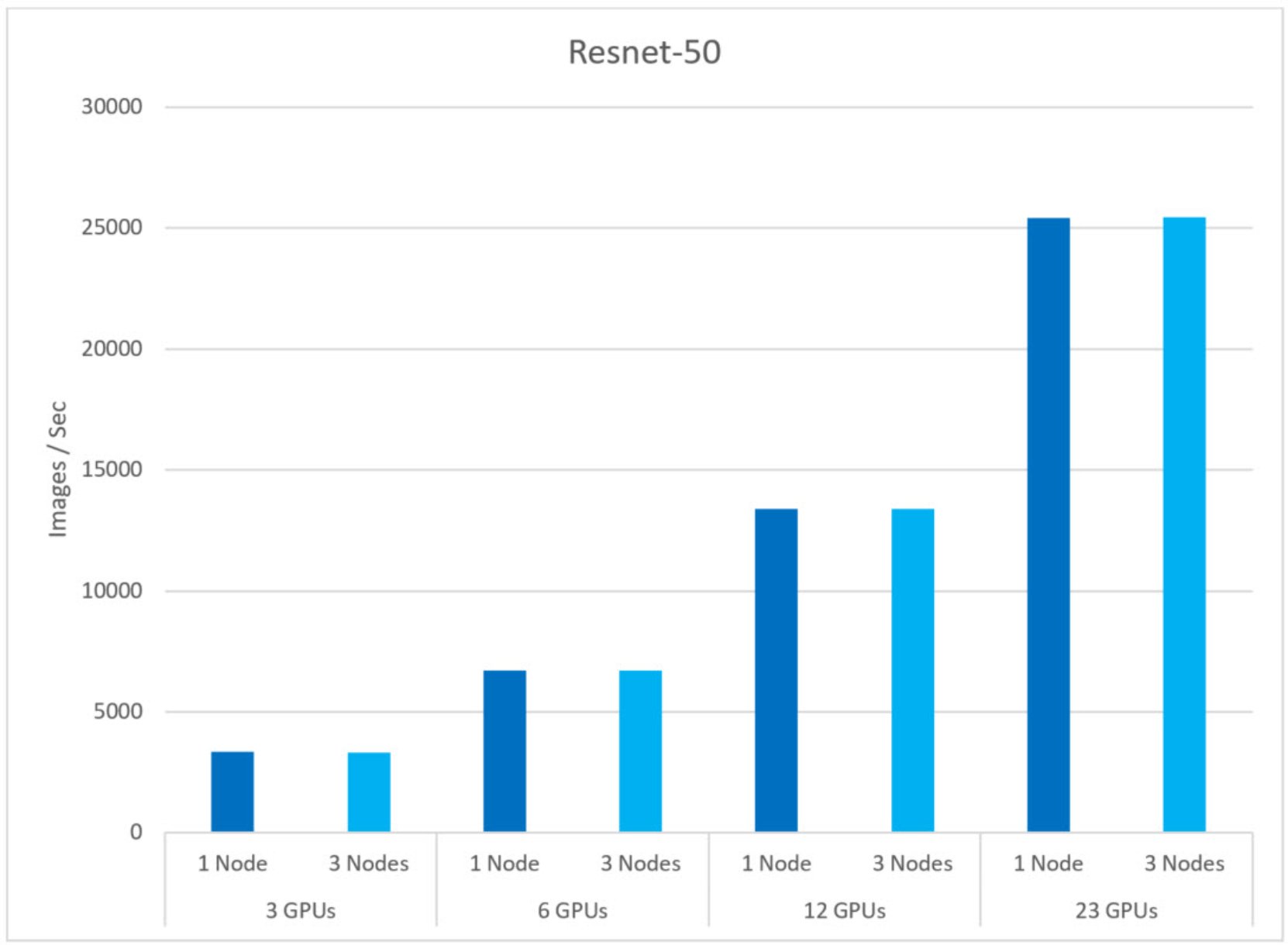

Based on the results from the TensorFlow benchmark, Figure 3 compares the image throughput of tests in which the same number of GPUs was assigned to either one or three F900 nodes. For example, with three GPUs we ran two tests: one using a single F900 node and one using three F900 nodes. The goal of this test is to identify the number of GPUs that a single F900 node can support without performance degradation.

During the test, we saw similar image throughput across the range of one to 23 GPUs, whether the GPUs were assigned to one F900 node or to three. We did not observe any performance degradation with 23 GPUs assigned to a single F900 node.
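The comparison described above can be sketched in a few lines of Python. This is only an illustration, not the procedure used in the test: the function names, the dictionary layout (GPU count mapped to images per second), and the 5% tolerance used to decide what counts as "similar" throughput are all assumptions, since the guide does not state a numeric threshold.

```python
def relative_drop(baseline: float, candidate: float) -> float:
    """Fractional throughput loss of candidate versus baseline.

    Negative values mean the candidate was faster than the baseline.
    """
    if baseline <= 0:
        raise ValueError("baseline throughput must be positive")
    return (baseline - candidate) / baseline


def max_gpus_without_degradation(three_node: dict, one_node: dict,
                                 tolerance: float = 0.05) -> int:
    """Largest GPU count, scanning upward, at which the one-node
    throughput stays within `tolerance` of the three-node throughput.

    Both arguments map GPU count -> measured images/sec.
    The 5% default tolerance is an assumed value, not one from the guide.
    """
    supported = 0
    for gpus in sorted(three_node):
        if gpus not in one_node:
            break  # no matching one-node measurement to compare against
        if relative_drop(three_node[gpus], one_node[gpus]) > tolerance:
            break  # one-node result fell too far behind: degradation
        supported = gpus
    return supported
```

Fed with measured throughput for each GPU count, the function returns the highest GPU count at which a single node still keeps pace with three nodes. If no degradation appears anywhere in the measured range, the result is simply the largest count tested, which is a lower bound rather than a hard limit.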

From this test, we can conclude that a single PowerScale F900 node can support at least 23 AMD Instinct MI100 GPUs for this benchmark without performance degradation.
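As a rough illustration, the ratio from this conclusion can be turned into a first-pass node count. The sketch below assumes a workload whose I/O profile resembles the benchmark; the function name and constant are hypothetical. Because 23 GPUs per node is a tested lower bound ("at least"), the result is a conservative estimate for such workloads, and workloads with heavier I/O demands may need more nodes.

```python
import math

# Tested lower bound from this guide: one F900 node sustained
# 23 AMD Instinct MI100 GPUs without performance degradation.
GPUS_PER_F900_TESTED = 23


def f900_nodes_for(gpu_count: int,
                   gpus_per_node: int = GPUS_PER_F900_TESTED) -> int:
    """First-pass F900 node count for `gpu_count` GPUs.

    Rounds up so that no node serves more GPUs than the tested ratio.
    Valid only for workloads similar to the benchmark (an assumption).
    """
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    if gpus_per_node <= 0:
        raise ValueError("gpus_per_node must be positive")
    return math.ceil(gpu_count / gpus_per_node)


if __name__ == "__main__":
    for gpus in (8, 23, 24, 64):
        print(f"{gpus} GPUs -> {f900_nodes_for(gpus)} F900 node(s)")
```

The `gpus_per_node` parameter is left adjustable so that the ratio can be replaced with one measured for a different workload or GPU model.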

Figure 3. Sizing guidance