Isilon

The following Isilon hardware was used for the benchmarking in this document.

Table 4. Specification of tested Isilon F800

| Item | Description |
| --- | --- |
| Model | Isilon F800-4U-Single-256GB-1x1GE-2x40GE SFP+-48TB SSD |
| Number of Nodes | 4 |
| Number of Chassis | 1 |
| Processors Per Chassis | 4 x Intel Xeon Processor E5-2697A v4 |
| Raw Capacity Per Chassis | 192 TB (60 x 3.2 TB SSD) |
| Throughput Per Chassis | Up to 15 GB/s |
| Front-End Networking | 2 x 40GbE (QSFP+) per node |
| Infrastructure Networking | 2 x InfiniBand QDR per node |
| Storage Type | SSD |
| Number of Chassis Possible | 36 |
| OneFS version | 8.1.0.2 |

Note: The Isilon cluster used to validate this solution used InfiniBand for the back-end (infrastructure) networking. However, it is recommended that new Isilon deployments use 40GbE for the back-end networking, even for deep learning workloads.

Isilon configuration

The default and minimal configuration of Isilon is sufficient for excellent performance of the deep learning workloads tested in this document.

A minimal configuration consists of a single Isilon chassis containing four Isilon F800 nodes. Each Isilon node should have at least one 40 Gigabit Ethernet port connected to a front-end switch. In most situations, this provides as much bandwidth as the F800 can deliver. However, for protection from switch failures, the second 40 Gigabit Ethernet port should also be connected.
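As a rough check of the bandwidth statement above, the following sketch compares the raw line rate of the front-end ports with the "up to 15 GB/s" chassis throughput listed in Table 4. It ignores Ethernet, TCP, and NFS overhead, which is a simplifying assumption of this example, so usable bandwidth will be somewhat lower than the figures it prints.

```python
# Values from Table 4; protocol overhead is ignored (an assumption of this sketch).
NODES_PER_CHASSIS = 4
PORT_SPEED_GBIT_S = 40            # one 40GbE front-end port
CHASSIS_THROUGHPUT_GBYTE_S = 15   # "up to 15 GB/s" per chassis


def front_end_line_rate_gbyte_s(nodes, ports_per_node, port_speed_gbit_s):
    """Aggregate raw front-end line rate in GB/s (8 bits per byte)."""
    return nodes * ports_per_node * port_speed_gbit_s / 8


one_port = front_end_line_rate_gbyte_s(NODES_PER_CHASSIS, 1, PORT_SPEED_GBIT_S)
two_ports = front_end_line_rate_gbyte_s(NODES_PER_CHASSIS, 2, PORT_SPEED_GBIT_S)

print(f"one port per node:  {one_port:.0f} GB/s")   # 20 GB/s
print(f"two ports per node: {two_ports:.0f} GB/s")  # 40 GB/s
print(one_port >= CHASSIS_THROUGHPUT_GBYTE_S)       # True
```

With one port per node the raw line rate already exceeds the chassis throughput figure, which is consistent with the second port being mainly a redundancy measure.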

Mounting to Isilon

When NFS clients establish a mount to an Isilon cluster, the recommended best practice is to mount to a DNS name which the Isilon cluster will resolve to one of its IP addresses. These IP addresses are automatically assigned and reassigned to individual Isilon nodes to handle load balancing and node failures. This method of mounting is simple to administer since the mount host is the same for all nodes (e.g. isilon.example.com). However, when there are just a few high-throughput clients, one Isilon node may have significantly more client connections than others, leading to an imbalance and reducing performance. To prevent this problem, each client can be mounted to a different fixed IP address to force a good balance. For instance, compute node 1 connects to Isilon node 1, compute node 2 connects to Isilon node 2, etc. Note that although a specific client node connects to only a single Isilon node, an I/O request will typically involve the disks and caches in all nodes of the Isilon cluster.
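The fixed-IP approach can be sketched as a simple round-robin assignment. The example below is illustrative only: the client names, the IP addresses (taken from the 192.0.2.0/24 documentation range), the export path, and the mount point are all hypothetical, and the generated commands use only the basic `mount -t nfs` form without any tuning options.

```python
def assign_mounts(clients, isilon_ips, export="/ifs/data", mount_point="/mnt/isilon"):
    """Pair each client with one fixed Isilon front-end IP.

    Clients are assigned in order; when there are more clients than
    IP addresses, the assignment wraps around to the first address.
    Returns a dict mapping client name to the mount command to run on it.
    """
    if not isilon_ips:
        raise ValueError("at least one Isilon front-end IP is required")
    plan = {}
    for index, client in enumerate(clients):
        ip = isilon_ips[index % len(isilon_ips)]
        plan[client] = f"mount -t nfs {ip}:{export} {mount_point}"
    return plan


# Hypothetical compute nodes and Isilon node addresses.
clients = ["compute-1", "compute-2", "compute-3", "compute-4", "compute-5"]
isilon_ips = ["192.0.2.11", "192.0.2.12", "192.0.2.13", "192.0.2.14"]

for client, command in assign_mounts(clients, isilon_ips).items():
    print(f"{client}: {command}")
```

In this sketch the fifth client wraps around to the first Isilon address, so with few clients it is worth checking that the resulting distribution is actually even.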

If archive-class Isilon nodes are part of the Isilon cluster, it is recommended that NFS clients do not connect to them as their slower CPUs will reduce client performance. Even if the dataset is known to be stored on the archive-class nodes, it is recommended to access them via the F800 all-flash nodes with faster CPU and more RAM. In general, archive-class nodes do not need to be assigned front-end IP addresses as all I/O to them will go through the back-end network.

Configuring automatic storage tiering

This section explains one method of configuring storage tiering for a typical DL workload. The various parameters should be changed according to the expected workload.

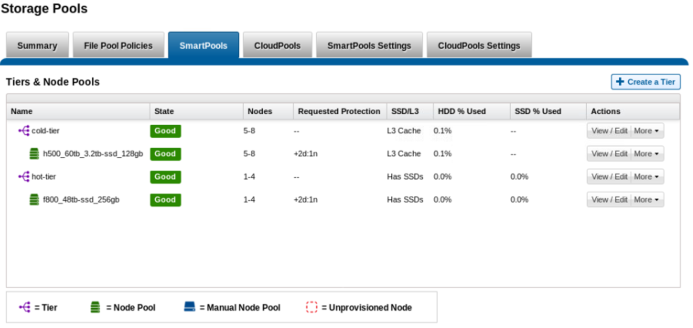

- Build an Isilon cluster with two or more node types. For example, F800 all-flash nodes for a hot tier and H500 hybrid nodes for a cold tier.

- Obtain and install the Isilon SmartPools license.

- Enable Isilon access time tracking with a precision of 1 day. In the web administration interface, click File System -> File System Settings. Alternatively, enter the following in the Isilon CLI:

isilon-1# sysctl efs.bam.atime_enabled=1

isilon-1# sysctl efs.bam.atime_grace_period=86400000

- Create a tier named hot-tier that includes the F800 node pool. Create a tier named cold-tier that includes the H500 node pool.

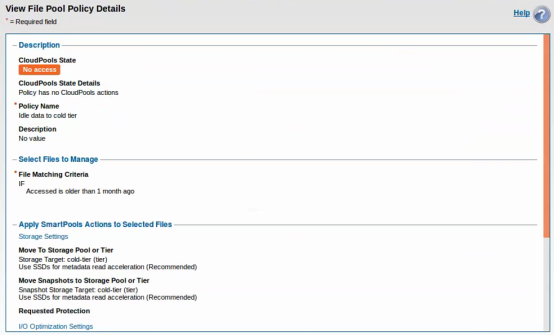

- Edit the default file pool policy to change the storage target to hot-tier. This will ensure that all files are placed on the F800 nodes unless another file pool policy applies that overrides the storage target.

- Create a new file pool policy to move all files that have not been accessed for 30 days to the H500 (cold) tier.
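The two numeric parameters in these steps can be checked with a short sketch. It assumes that the grace period value is expressed in milliseconds (86400000 equals one day in milliseconds, which matches the stated precision of 1 day) and that "not accessed for 30 days" means a last-access time strictly more than 30 days in the past. The function names are hypothetical and are not part of OneFS.

```python
from datetime import datetime, timedelta


def atime_grace_period_ms(precision=timedelta(days=1)):
    """Convert an access-time precision to milliseconds (unit is an assumption)."""
    return int(precision.total_seconds() * 1000)


def is_cold(last_access, now, threshold=timedelta(days=30)):
    """Return True if the file would match a 'not accessed for 30 days' rule.

    Uses a strict comparison; whether the real policy treats exactly
    30 days as a match is not stated in the text.
    """
    return now - last_access > threshold


print(atime_grace_period_ms())                                 # 86400000
print(is_cold(datetime(2018, 1, 1), datetime(2018, 1, 17)))    # False
print(is_cold(datetime(2018, 1, 1), datetime(2018, 2, 15)))    # True
```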

Configuration is now complete. To test this configuration, follow these steps:

- Start the Isilon SmartPools job and wait for it to complete. This will apply the above file pool policy and move all files to the F800 nodes (assuming that all files have been accessed within the last 30 days).

isilon-1# isi job jobs start SmartPools

isilon-1# isi status

- Use the isi get command to confirm that a file is stored in the hot tier. Note that the first number in each element of the inode list (1, 2, and 3 below) is the number of the Isilon node that contains blocks of the file. For example:

isilon-1# isi get -D /ifs/data/imagenet-scratch/tfrecords/train-00000-of-01024

* IFS inode: [ 1,0,1606656:512, 2,2,1372672:512, 3,0,1338880:512 ]

* Disk pools: policy hot-tier(5) -> data target f800_48tb-ssd_256gb:2(2), metadata target f800_48tb-ssd_256gb:2(2)

- Use the ls command to view the access time.

isilon-1# ls -lu /ifs/data/imagenet-scratch/tfrecords/train-00000-of-01024

-rwx------ 1 1000 1000 762460160 Jan 16 19:32 train-00000-of-01024

- Instead of waiting for 30 days, you may manually update the access time of the files using the touch command.

isilon-1# touch -a -d '2018-01-01T00:00:00' /ifs/data/imagenet-scratch/tfrecords/*

isilon-1# ls -lu /ifs/data/imagenet-scratch/tfrecords/train-00000-of-01024

-rwx------ 1 1000 1000 762460160 Jan 1 2018 train-00000-of-01024

- Start the Isilon SmartPools job and wait for it to complete. This will apply the above file pool policy and move the files to the H500 nodes. Record the number of bytes moved and the duration.

isilon-1# isi job jobs start SmartPools

isilon-1# isi status

- Use the isi get command to confirm that the file is stored in the cold tier.

isilon-1# isi get -D /ifs/data/imagenet-scratch/tfrecords/train-00000-of-01024

* IFS inode: [ 4,0,1061376:512, 5,1,1145344:512, 6,3,914944:512 ]

* Disk pools: policy cold-tier(9) -> data target h500_60tb_3.2tb-ssd_128gb:15(15), metadata target h500_60tb_3.2tb-ssd_128gb:15(15)

- Run the training benchmark as described in the previous section. Be sure to run at least 4 epochs so that all TFRecords are accessed. Monitor the Isilon cluster to ensure that disk activity occurs only on the H500 nodes. Note that because the files are read, their access time is updated to the current time. The next time the SmartPools job runs, these files will be moved back to the F800 tier. By default, the SmartPools job runs daily at 22:00.

- Start the Isilon SmartPools job and wait for it to complete. This will apply the above file pool policy and move the files to the F800 nodes.

isilon-1# isi job jobs start SmartPools

isilon-1# isi status

- Use the isi get command to confirm that the file is stored in the hot tier.

isilon-1# isi get -D /ifs/data/imagenet-scratch/tfrecords/train-00000-of-01024

* IFS inode: [ 1,0,1606656:512, 2,2,1372672:512, 3,0,1338880:512 ]

* Disk pools: policy hot-tier(5) -> data target f800_48tb-ssd_256gb:2(2), metadata target f800_48tb-ssd_256gb:2(2)
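When many files need to be checked, the isi get output can be inspected with a script rather than by eye. The following is a minimal sketch that assumes the output format is exactly as shown in the examples above; the function name and regular expressions are hypothetical and have only been written against those two sample outputs.

```python
import re

# One inode list element looks like "1,0,1606656:512"; the first field is the node number.
_INODE_ELEMENT = re.compile(r"(\d+),(\d+),(\d+):(\d+)")
# The policy name precedes a parenthesized ID, e.g. "policy hot-tier(5)".
_POLICY = re.compile(r"Disk pools:\s*policy\s+(\S+?)\(\d+\)")


def parse_isi_get(output):
    """Return (set of node numbers holding the inode, file pool policy name)."""
    nodes = set()
    policy = None
    for line in output.splitlines():
        if "IFS inode:" in line:
            nodes = {int(m.group(1)) for m in _INODE_ELEMENT.finditer(line)}
        elif "Disk pools:" in line:
            match = _POLICY.search(line)
            if match:
                policy = match.group(1)
    return nodes, policy


# Sample text copied from the hot-tier example above.
sample = """\
* IFS inode: [ 1,0,1606656:512, 2,2,1372672:512, 3,0,1338880:512 ]
* Disk pools: policy hot-tier(5) -> data target f800_48tb-ssd_256gb:2(2), metadata target f800_48tb-ssd_256gb:2(2)
"""

nodes, policy = parse_isi_get(sample)
print(sorted(nodes), policy)  # [1, 2, 3] hot-tier
```

The returned node numbers can then be compared with the node numbers that belong to each tier in the cluster being tested.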