
Use Case 2: Large TimesTen cache on PMem


The goal of this use case was to study the performance and resource utilization impact of a large TimesTen cache when caching a relatively large subset of the backend RAC database. We also wanted to study how using Intel Optane PMem as the main memory device affects the TimesTen cache that runs on it.

Configuration overview

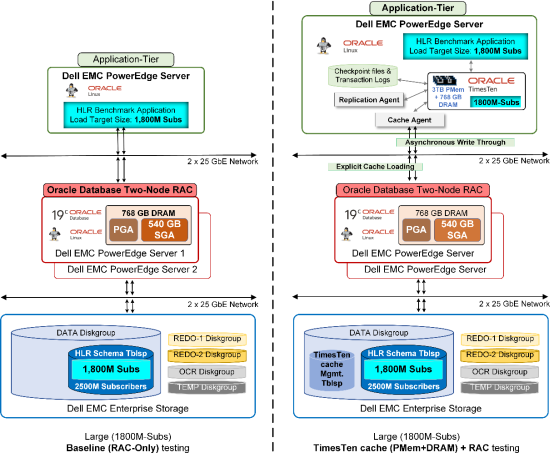

The following figure shows the two configurations—1800M subscribers Baseline and 1800M subscribers TimesTen cache + RAC—that we tested in Use Case 2.

Figure 7. Use Case 2: Large (1800M subscribers) dataset configuration overview

In this use case, we added 3 TB of PMem, running in Memory Mode, to the 768 GB of DRAM already installed in the application (TimesTen) server. The operating system and the application (TimesTen) used the 3 TB of PMem as main memory, while the 768 GB of DRAM served as the front-end cache for the PMem and was transparent to both. Given this 3 TB main memory size, we could explicitly load a maximum of 1,800 million SUBSCRIBER rows (1,800M Subs) into the TimesTen cache. Therefore, we set the target workload data size for both test configurations in this use case to 1,800M subscribers. This is 72 percent of the total HLR schema size of 2,500 million SUBSCRIBER rows in the backend RAC database, so this use case represents the scenario in which a relatively large subset of the RAC database is cached in TimesTen.
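The 72 percent figure follows directly from the two row counts given above. As a minimal sketch (the function and constant names are illustrative, not part of the benchmark tooling), the arithmetic is:

```python
# Row counts taken from the text above, in millions of SUBSCRIBER rows.
TOTAL_SUBSCRIBER_ROWS_M = 2_500   # full HLR schema in the backend RAC database
CACHED_SUBSCRIBER_ROWS_M = 1_800  # rows explicitly loaded into the TimesTen cache


def cached_fraction(cached: int, total: int) -> float:
    """Return the share of backend rows held in the cache, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * cached / total


pct = cached_fraction(CACHED_SUBSCRIBER_ROWS_M, TOTAL_SUBSCRIBER_ROWS_M)
print(f"{pct:.0f} percent of SUBSCRIBER rows cached")
```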

All hardware and software configurations used for the HLR benchmark application, TimesTen, and the backend RAC database in Use Case 1 remained virtually identical for Use Case 2, with two exceptions: we increased the RAC instance SGA to 540 GB, and we increased the HugePages size to accommodate the larger SGA. For details on the RAC and TimesTen database configuration, refer to Appendix: Configuration details.

Test methodology

During the baseline tests, we ran the HLR benchmarking workload directly against the backend two-node RAC database setup with a target workload size of 1,800M subscribers. During each of the baseline tests, we ran seven workload iterations with increasing HLR application thread counts of 2, 4, 8, 16, 32, 48, and 64. We repeated the baseline tests three times and reported the averages of the three runs.
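The reporting step described above (three repetitions, averaged per thread count) can be sketched as follows. This is an illustration only: the `average_runs` helper is hypothetical, and the sample values are placeholders rather than measurements from this study.

```python
from statistics import mean

# Thread counts for the seven workload iterations, from the text above.
THREAD_COUNTS = [2, 4, 8, 16, 32, 48, 64]


def average_runs(runs: list[dict[int, float]]) -> dict[int, float]:
    """Average one metric (for example TPS) per thread count across repeated runs."""
    return {t: mean(run[t] for run in runs) for t in THREAD_COUNTS}


# Placeholder values only, NOT results from the guide: three synthetic runs.
runs = [
    {t: 100.0 * t for t in THREAD_COUNTS},
    {t: 110.0 * t for t in THREAD_COUNTS},
    {t: 90.0 * t for t in THREAD_COUNTS},
]
averaged = average_runs(runs)
```

Averaging per thread count, rather than across the whole test, keeps each of the seven iterations comparable between the baseline and TimesTen configurations.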

Note: During the baseline tests, the TimesTen database and cache were disabled on the application server.

During the TimesTen tests, we first explicitly loaded 1,800M subscribers into the TimesTen cache, which ran on 3 TB of PMem and was configured in Asynchronous Writethrough (AWT) caching mode. We then ran the HLR benchmarking workload against the TimesTen cache, limiting the target throughput to the maximum TPS rate achieved against the RAC database.

Note: As described in the section Test methodology overview, the limited target TPS rate for the TimesTen tests was nearly the same as the highest TPS achieved by the baseline tests.

Performance results

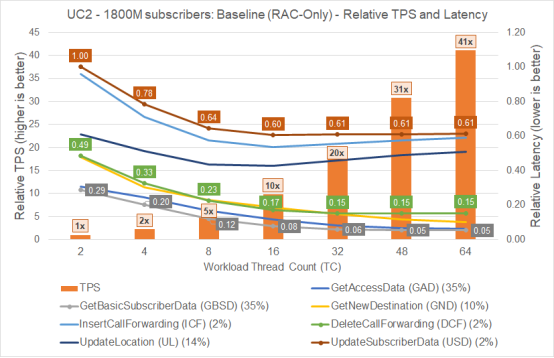

The figure below shows the results of the baseline test generated by the HLR application for the workload target size of 1,800M subscribers that we ran directly against the RAC database. The TPS values are plotted relative to the TPS throughput achieved in the iteration with a workload thread count of 2. All latency values are plotted relative to the UpdateSubscriberData (USD) transaction latency observed in that same iteration.

Figure 8. Use Case 2 - 1800M subscribers - Baseline (RAC-only) - Relative TPS and Latency performance graph

As shown in the figure above, the RAC database and backend Dell EMC infrastructure scaled well in terms of TPS, delivering 41 times the TPS at the peak workload thread count of 64 compared to the TPS at the starting workload thread count of 2. However, the HLR transaction latencies began at a high level during the initial iterations and decreased as the test progressed. Latency levels stabilized beginning with the iteration running with a workload thread count of 16 and remained at the same level until the end of the test. The latency variation occurred because it took a few initial iterations for the RAC buffer cache to warm up. During this time, more read and write I/O operations to the backend storage caused higher I/O latencies while processing the larger target dataset size of 1,800M subscribers.
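The relative values plotted in Figure 8 are each measurement divided by the corresponding value from the thread-count-2 iteration. A minimal sketch of that normalization is shown here; the `relative_to_baseline` helper is hypothetical, and the absolute TPS numbers are placeholders chosen only so that the 64-thread ratio matches the 41 times reported above.

```python
def relative_to_baseline(values: dict[int, float], baseline_threads: int = 2) -> dict[int, float]:
    """Express each per-thread-count value as a multiple of the baseline iteration."""
    base = values[baseline_threads]
    if base == 0:
        raise ValueError("baseline value must be non-zero")
    return {threads: value / base for threads, value in values.items()}


# Placeholder absolute TPS values, NOT measurements from the guide.
tps = {2: 500.0, 16: 6000.0, 64: 20500.0}
relative_tps = relative_to_baseline(tps)
print(relative_tps[64])  # ratio of peak TPS to TPS at thread count 2
```

Plotting ratios instead of absolute numbers lets the guide show scaling behavior without disclosing raw throughput or latency figures.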

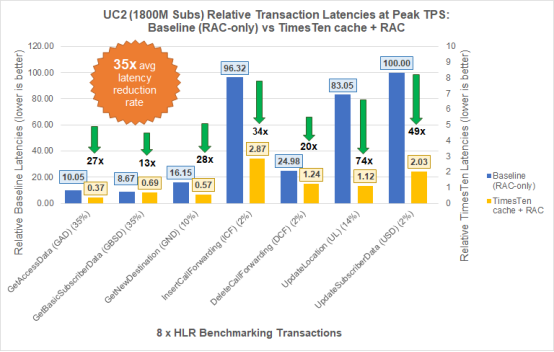

The figure below compares the application latencies for each of the seven HLR transactions during the baseline tests and the TimesTen cache tests.

Figure 9. Use Case 2 (1800M subscribers) - Average Latencies: Baseline (RAC-only) vs TimesTen cache with RAC

The relative transaction latencies reported in the figure above were captured for both the baseline and the TimesTen tests when they delivered nearly the same peak TPS throughput. All latency values in the figure are relative to the baseline UpdateSubscriberData (USD) transaction latency. In Use Case 2, in which we tested with a relatively large dataset size (1,800M subscribers), the TimesTen cache delivered the same throughput as the RAC database but with transaction response times that were 35 times better on average across the seven query and DML transaction types.

Note: As mentioned in Use Case 1, the latencies reported for the TimesTen tests in Use Case 2 are for transaction commits to the local TimesTen cache running on 3 TB of PMem in Memory Mode (with 768 GB of DRAM as the front-end cache to PMem).

An additional purpose of this use case was to determine how a TimesTen cache impacts the resource utilization of the backend RAC database and its infrastructure.

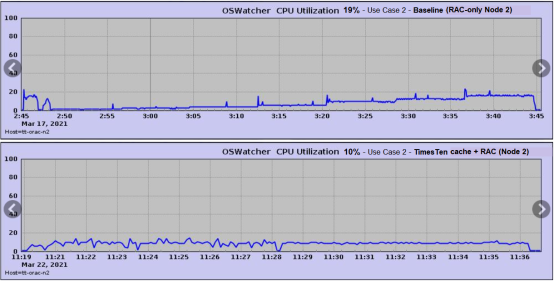

The following figure shows the CPU utilization of RAC node2, captured using the Oracle OSWatcher utility for the duration of both the baseline and the TimesTen tests.

Figure 10. RAC node2 CPU utilization - Use Case 2 - Baseline vs TimesTen cache tests

As shown in the figure, RAC node2’s CPU utilization was around 10 percent (bottom graph) throughout the TimesTen tests, compared to roughly 19 percent for the baseline tests (top graph) at the peak workload thread count of 64. Therefore, in our particular test setup, the CPU utilization of the RAC node was about 9 percentage points lower during the TimesTen tests than during the baseline tests at their peak TPS performance.

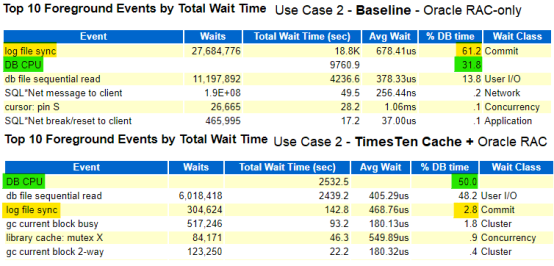

Similarly, when we compared the ‘Top 10 Foreground Events by Total Wait Time’ from the AWR reports of the two test cases—baseline vs TimesTen—we observed that during the TimesTen cache tests the ‘log file sync’ wait events that were observed during the baseline tests were nearly eliminated (61.2% DB Time in baseline vs 2.8% DB Time in TimesTen tests), as shown in the comparative figure below.

Figure 11. Foreground Wait Events - Use Case 2 - Baseline vs TimesTen cache tests

Also, the share of DB time that the RAC database spent on CPU (DB CPU) rose from 31.8% during the baseline tests to 50.0% during the TimesTen tests, a delta of about 18 percentage points. This indicates that the database spent a larger share of its time processing queries rather than waiting on other resources or events. This finding shows that deploying a TimesTen cache helps to increase the backend RAC database’s infrastructure usage efficiency.
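Because the resource figures above are all percentages, it helps to distinguish an absolute change in percentage points from a relative change. The sketch below recomputes both from the values quoted in this section; the helper names are illustrative, and the CPU utilization inputs are the approximate readings ("around 10" and "roughly 19") given in the text.

```python
def point_delta(before: float, after: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change expressed as a percentage of the starting value."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return 100.0 * (after - before) / before


# (baseline, TimesTen) pairs quoted in this section.
metrics = {
    "RAC node2 CPU utilization (%)": (19.0, 10.0),  # approximate readings
    "log file sync (% DB time)": (61.2, 2.8),
    "DB CPU (% DB time)": (31.8, 50.0),
}

for name, (before, after) in metrics.items():
    print(
        f"{name}: {point_delta(before, after):+.1f} points, "
        f"{relative_change(before, after):+.1f}% relative"
    )
```

Note that a rise in DB CPU as a share of DB time reflects a shift in where time is spent, not higher overall CPU load; the OSWatcher data shows node-level CPU utilization falling over the same tests.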