
OneFS enables an administrator to modify the protection policy in real time, while clients are attached and are reading and writing data.

Be aware that increasing a cluster’s protection level may increase the amount of space consumed by the data on the cluster.

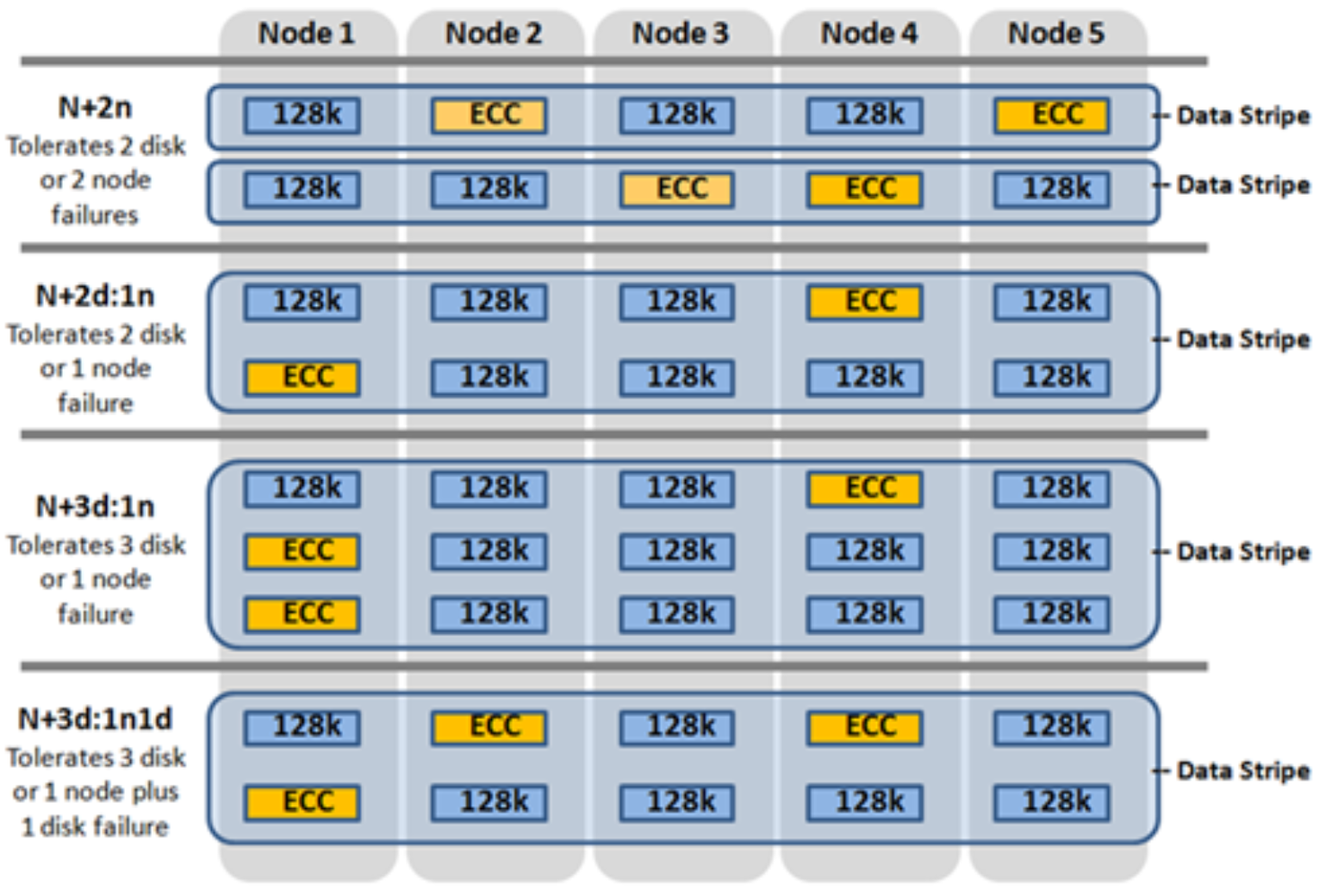

Figure 15. OneFS hybrid erasure code protection schemes

OneFS also provides under-protection alerting for new cluster installations. If the cluster is under-protected, the cluster event logging system (CELOG) will generate alerts, warning the administrator of the protection deficiency and recommending a change to the appropriate protection level for that particular cluster’s configuration.

Further information is available in the OneFS high availability and data protection white paper.

Automatic partitioning

Data tiering and management in OneFS are handled by the SmartPools framework. From a data protection and layout efficiency point of view, SmartPools facilitates the subdivision of large numbers of high-capacity, homogeneous nodes into smaller, more ‘Mean Time to Data Loss’ (MTTDL) friendly disk pools. For example, an 80-node H500 cluster would typically run at a +3d:1n1d protection level. However, partitioning it into four 20-node disk pools would allow each pool to run at +2d:1n, thereby lowering the protection overhead and improving space utilization, without any net increase in management overhead.
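The capacity effect of a lower protection level can be illustrated with generic N+M erasure-code arithmetic. The sketch below is not taken from OneFS: the function name and the stripe shapes (16+2 and 15+3) are assumptions chosen only to show that a stripe with fewer parity units spends a smaller share of raw capacity on protection.

```python
from fractions import Fraction


def parity_overhead(data_units: int, parity_units: int) -> Fraction:
    """Fraction of a stripe's raw capacity that holds parity rather than data."""
    if data_units <= 0 or parity_units < 0:
        raise ValueError("need at least one data unit and no negative parity")
    return Fraction(parity_units, data_units + parity_units)


# Illustrative stripe shapes only; actual OneFS stripe widths depend on
# pool size and file layout and are not specified in this section.
two_parity = parity_overhead(16, 2)
three_parity = parity_overhead(15, 3)

print(f"two parity units:   {float(two_parity):.1%} of raw capacity")
print(f"three parity units: {float(three_parity):.1%} of raw capacity")
```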

In keeping with the goal of storage management simplicity, OneFS will automatically calculate and partition the cluster into pools of disks, or ‘node pools’, which are optimized for both MTTDL and efficient space utilization. This means that protection level decisions, such as the eighty-node cluster example above, are not left to the customer.

With Automatic Provisioning, every set of compatible node hardware is automatically divided into disk pools consisting of up to forty nodes and six drives per node. These node pools are protected by default at +2d:1n, and multiple pools can then be combined into logical tiers and managed with SmartPools file pool policies. By subdividing a node’s disks into multiple, separately protected pools, nodes are significantly more resilient to multiple disk failures than previously possible.

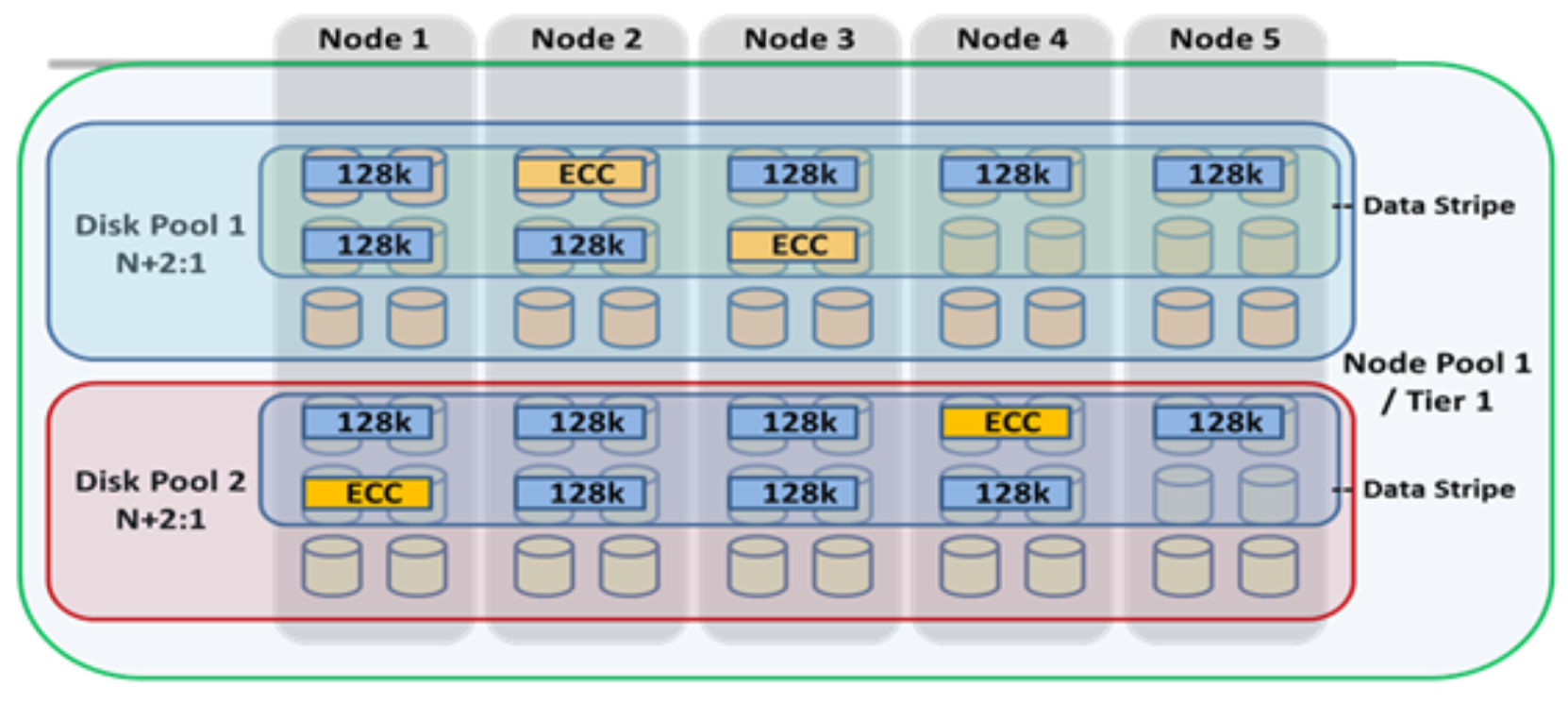

Figure 16. Automatic partitioning with SmartPools

More information is available in the SmartPools white paper.

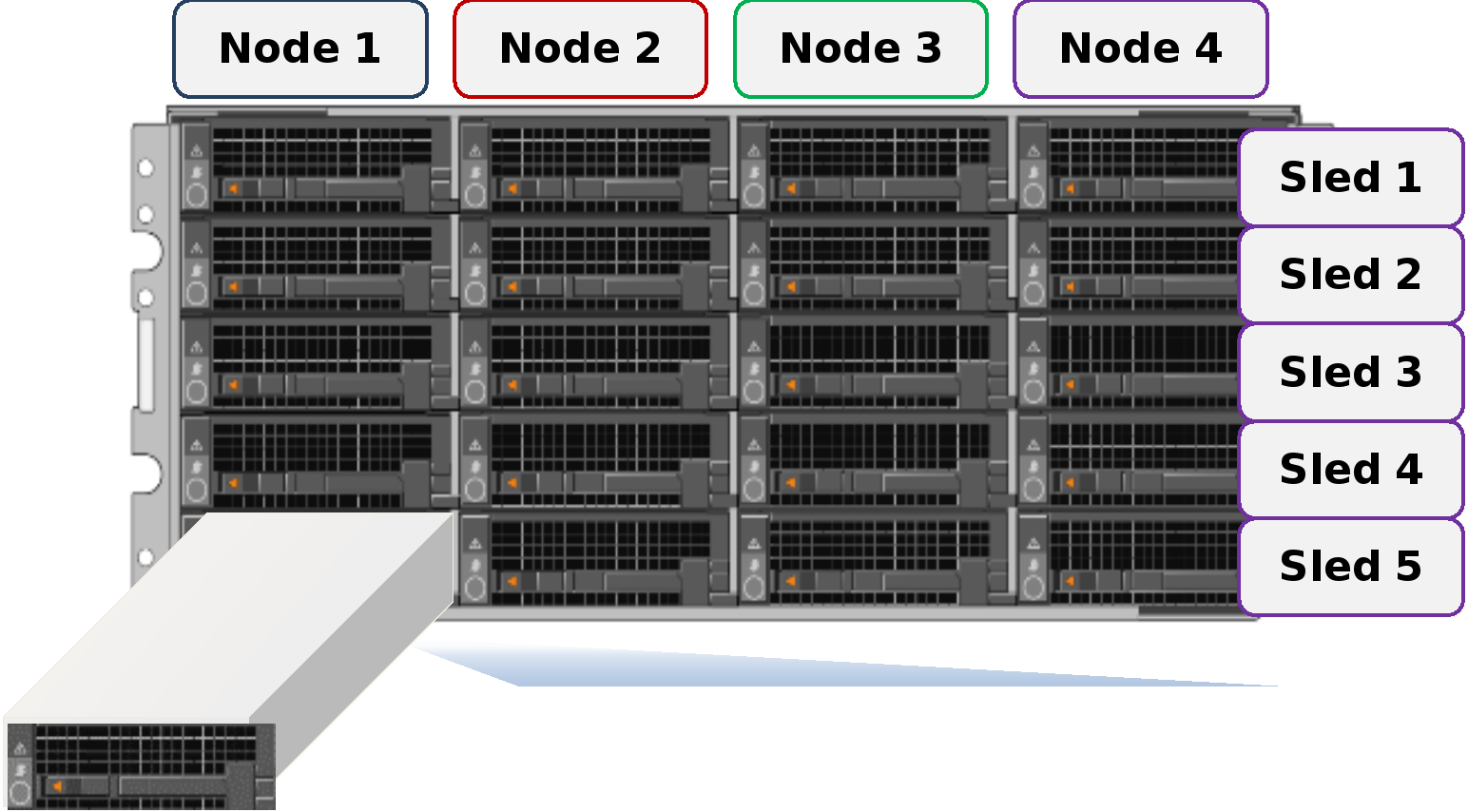

PowerScale Gen6 modular hardware platforms feature a highly dense, modular design in which four nodes are contained in a single 4RU chassis. This approach enhances the concept of disk pools, node pools, and ‘neighborhoods’, adding another level of resilience to the OneFS failure domain concept. Each Gen6 chassis contains four compute modules (one per node), and five drive containers, or sleds, per node.

Figure 17. Gen6 platform chassis front view showing drive sleds

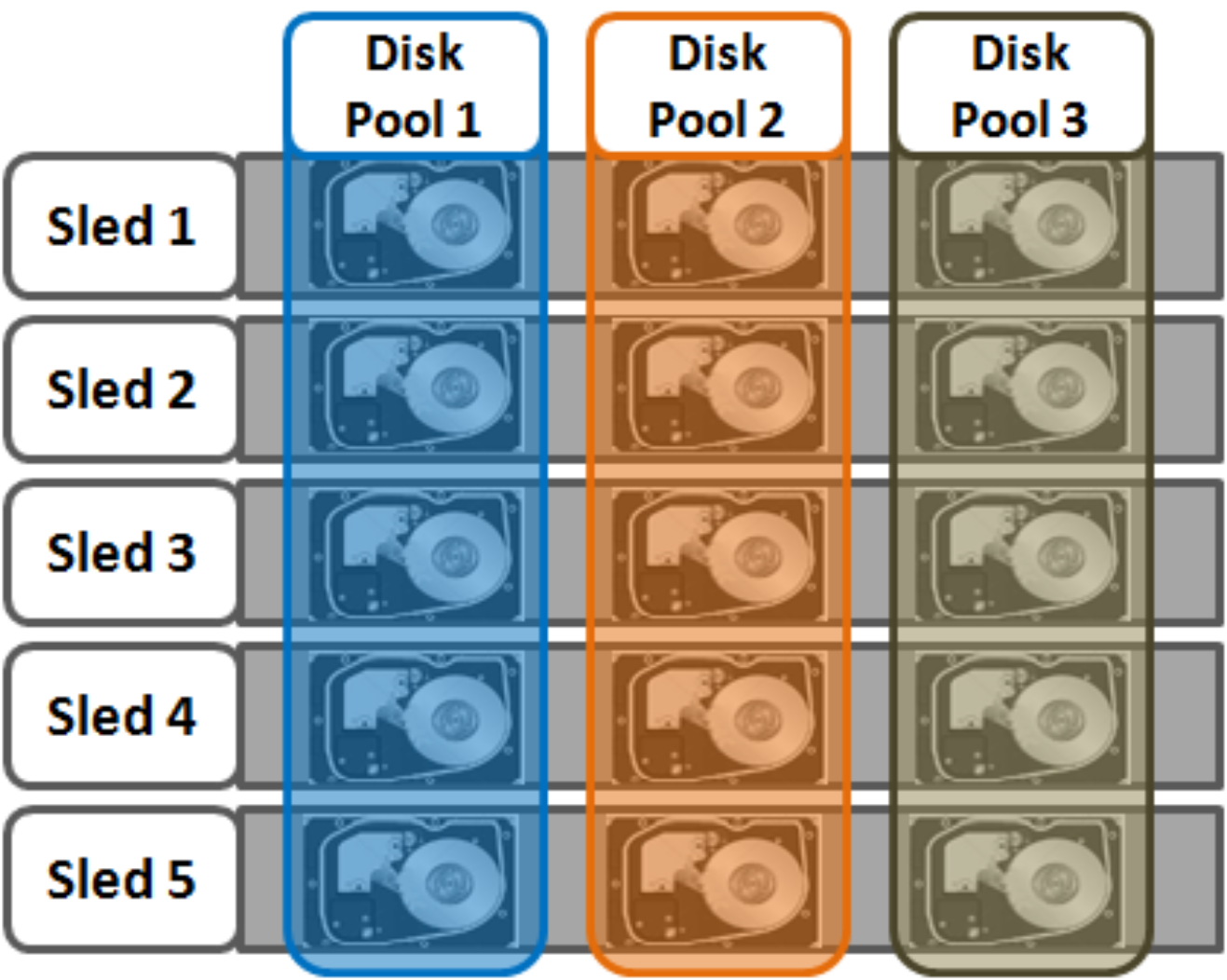

Each sled is a tray which slides into the front of the chassis and contains between three and six drives, depending on the configuration of a particular chassis. Disk pools are the smallest unit within the Storage Pools hierarchy. OneFS provisioning works on the premise of dividing similar nodes’ drives into sets, or disk pools, with each pool representing a separate failure domain. These disk pools are protected by default at +2d:1n, meaning each pool can withstand the failure of two drives or of one entire node.

Disk pools are laid out across all five sleds in each Gen6 node. For example, a node with three drives per sled will have the following disk pool configuration:

Figure 18. OneFS disk pools
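As a rough illustration of laying disk pools across sleds, the sketch below builds a per-node layout for a given number of drives per sled. It assumes that each disk pool takes the drive in the same slot position from every one of the five sleds; that mapping, along with the function and constant names, is an assumption for illustration and is not stated in the text.

```python
from __future__ import annotations

SLEDS_PER_NODE = 5  # stated in the text for Gen6 nodes


def disk_pool_layout(drives_per_sled: int) -> dict[int, list[tuple[int, int]]]:
    """Map disk pool index -> list of (sled, slot) positions within one node.

    Assumption: pool k is made up of slot k from every sled.
    """
    if not 3 <= drives_per_sled <= 6:
        raise ValueError("the text describes sleds holding three to six drives")
    return {
        pool: [(sled, pool) for sled in range(SLEDS_PER_NODE)]
        for pool in range(drives_per_sled)
    }


layout = disk_pool_layout(3)
for pool, drives in layout.items():
    print(f"disk pool {pool}: {drives}")
```

Under this assumed mapping, pulling a single sled removes exactly one drive from each disk pool on that node, rather than several drives from one pool.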

Node pools are groups of disk pools, spread across similar storage nodes (compatibility classes). This is illustrated in Figure 19. Multiple groups of different node types can work together in a single, heterogeneous cluster. For example: one node pool of F-Series nodes for IOPS-intensive applications, one node pool of H-Series nodes, primarily used for high-concurrency and sequential workloads, and one node pool of A-Series nodes, primarily used for nearline and/or deep archive workloads.

This allows OneFS to present a single storage resource pool consisting of multiple drive media types—SSD, high-speed SAS, or large-capacity SATA—providing a range of different performance, protection, and capacity characteristics. This heterogeneous storage pool in turn can support a diverse range of applications and workload requirements with a single, unified point of management. It also facilitates the mixing of older and newer hardware, allowing for simple investment protection even across product generations, and seamless hardware refreshes.

Each node pool contains only disk pools from the same type of storage nodes, and a disk pool can belong to exactly one node pool. For example, F-Series nodes with 1.6 TB SSD drives would be in one node pool, whereas A-Series nodes with 10 TB SATA drives would be in another. Today, a minimum of four nodes (one chassis) is required per node pool for Gen6 hardware, such as the PowerScale H700, or three nodes per pool for self-contained nodes like the PowerScale F900.

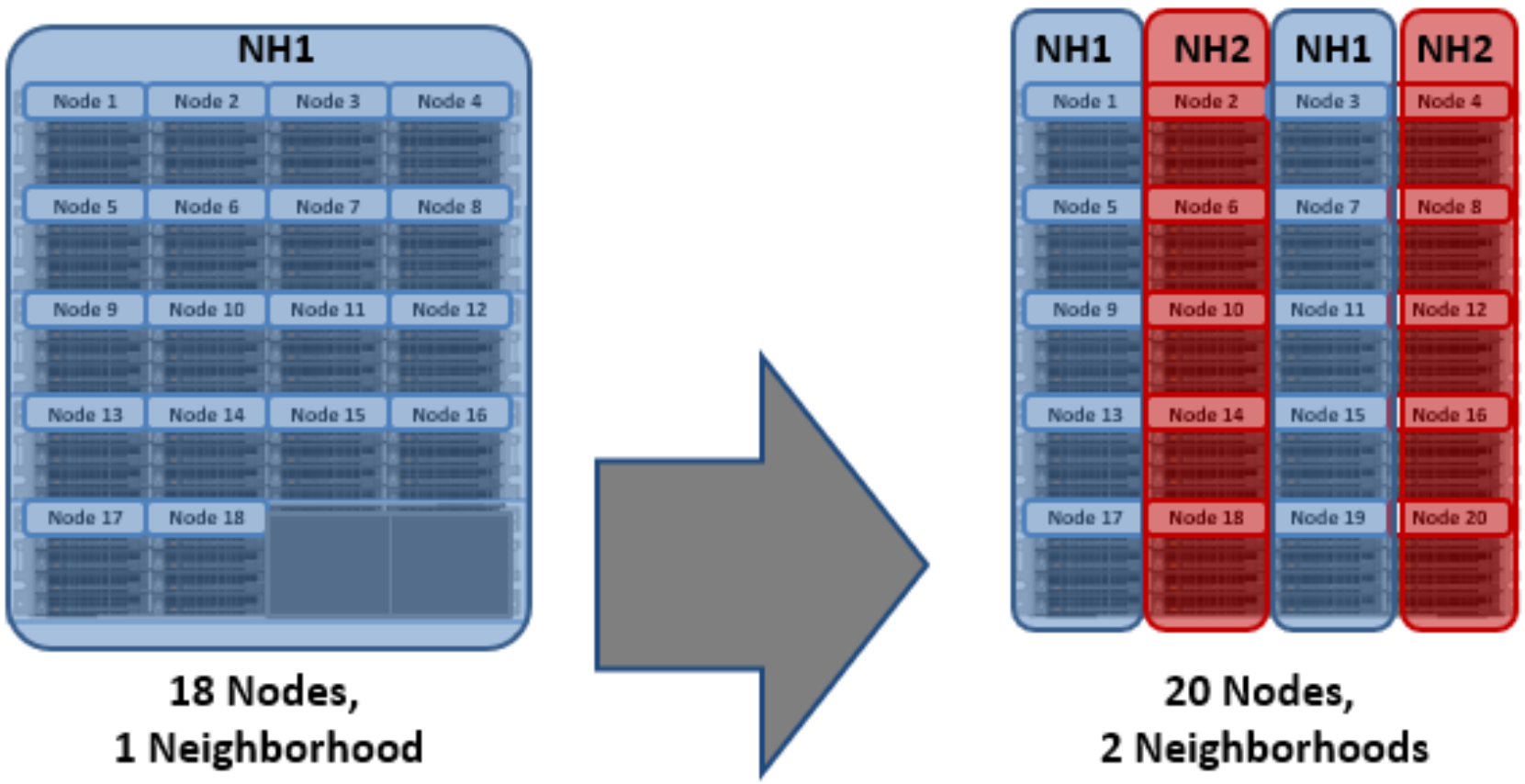

OneFS ‘neighborhoods’ are fault domains within a node pool, and their purpose is to improve reliability in general, and guard against data unavailability from the accidental removal of drive sleds. For self-contained nodes like the PowerScale F200, OneFS has an ideal size of 20 nodes per node pool, and a maximum size of 39 nodes. On the addition of the 40th node, the nodes split into two neighborhoods of twenty nodes. With the Gen6 platform, the ideal size of a neighborhood changes from 20 to 10 nodes. This protects against simultaneous node-pair journal failures and full chassis failures.

Partner nodes are nodes whose journals are mirrored. With the Gen6 platform, rather than each node storing its journal in NVRAM as in previous platforms, the nodes’ journals are stored on SSDs, and every journal has a mirror copy on another node. The node that contains the mirrored journal is referred to as the partner node. There are several reliability benefits gained from the changes to the journal. For example, SSDs are more persistent and reliable than NVRAM, which requires a charged battery to retain state. Also, with the mirrored journal, both journal drives must fail before a journal is considered lost. As such, unless both of the mirrored journal drives fail, both of the partner nodes can function as normal.

With partner node protection, where possible, nodes will be placed in different neighborhoods - and hence different failure domains. Partner node protection is possible once the cluster reaches five full chassis (20 nodes) when, after the first neighborhood split, OneFS places partner nodes in different neighborhoods:

Figure 19. Split to two neighborhoods at twenty nodes

Partner node protection increases reliability because the two partner nodes sit in different failure domains: if both nodes go down, each failure domain suffers the loss of only a single node.

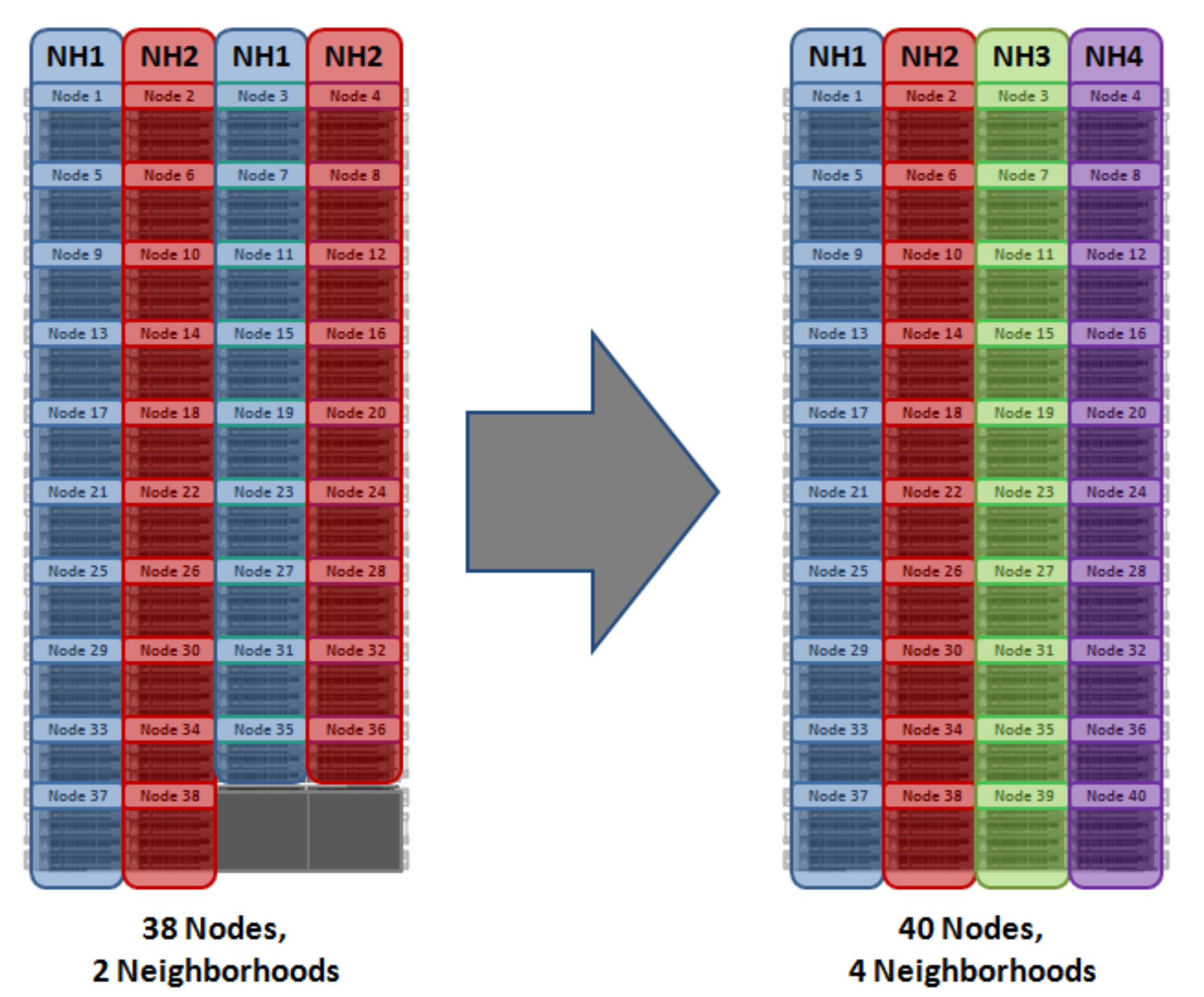

With chassis protection, when possible, each of the four nodes within a chassis will be placed in a separate neighborhood. Chassis protection becomes possible at 40 nodes, as the neighborhood split at 40 nodes enables every node in a chassis to be placed in a different neighborhood. As such, when a 38 node Gen6 cluster is expanded to 40 nodes, the two existing neighborhoods will be split into four 10-node neighborhoods:

Chassis protection ensures that if an entire chassis failed, each failure domain would only lose one node.

Figure 20. OneFS neighborhoods—Four neighborhood split

A 40 node or larger cluster with four neighborhoods, protected at the default level of +2d:1n can sustain a single node failure per neighborhood. This protects the cluster against a single Gen6 chassis failure.
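The effect of the neighborhood splits on a chassis failure can be sketched as follows. The thresholds (two neighborhoods at 20 nodes, four at 40) come from the text; the rule that places chassis slot `s` into neighborhood `s % count` is a hypothetical assignment used only for illustration, as are all function names.

```python
from collections import Counter

NODES_PER_CHASSIS = 4  # stated in the text for Gen6


def neighborhood_count(node_count: int) -> int:
    """Neighborhoods in a Gen6 node pool, using only the thresholds in the text."""
    if node_count >= 40:
        return 4
    if node_count >= 20:
        return 2
    return 1


def assign_neighborhoods(chassis_count: int) -> dict:
    """Map (chassis, slot) -> neighborhood.

    Assumption: slot s of every chassis is placed in neighborhood s % count.
    """
    count = neighborhood_count(chassis_count * NODES_PER_CHASSIS)
    return {
        (chassis, slot): slot % count
        for chassis in range(chassis_count)
        for slot in range(NODES_PER_CHASSIS)
    }


def nodes_lost_per_neighborhood(assignment: dict, failed_chassis: int) -> Counter:
    """Count how many nodes each neighborhood loses when one chassis fails."""
    return Counter(
        hood
        for (chassis, _slot), hood in assignment.items()
        if chassis == failed_chassis
    )


forty_nodes = assign_neighborhoods(10)   # 10 chassis = 40 nodes
twenty_nodes = assign_neighborhoods(5)   # 5 chassis = 20 nodes

print(nodes_lost_per_neighborhood(forty_nodes, failed_chassis=0))
print(nodes_lost_per_neighborhood(twenty_nodes, failed_chassis=0))
```

In this sketch the 40-node pool loses one node in each of its four neighborhoods, which the default +2d:1n level tolerates, while the 20-node pool loses two nodes in each of its two neighborhoods. That matches the statement that chassis protection only becomes possible at 40 nodes.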

Overall, a Gen6 platform cluster will have a reliability of at least one order of magnitude greater than previous generation clusters of a similar capacity as a direct result of the following enhancements:

- Mirrored Journals

- Smaller Neighborhoods

- Mirrored Boot Drives

Compatibility

Certain similar, but non-identical, node types can be provisioned to an existing node pool by node compatibility. OneFS requires that a node pool must contain a minimum of three nodes.

Due to significant architectural differences, there are no node compatibilities between the Gen6 platform, previous hardware generations, or the PowerScale nodes.

OneFS also contains an SSD compatibility option, which allows nodes with dissimilar capacity SSDs to be provisioned to a single node pool.

The SSD compatibility is created and described in the OneFS WebUI SmartPools Compatibilities list and is also displayed in the Tiers & Node Pools list.

When creating this SSD compatibility, OneFS automatically checks that the two pools to be merged have the same number of SSDs, tier, requested protection, and L3 cache settings. If these settings differ, the OneFS WebUI will prompt for consolidation and alignment of these settings.

More information is available in the SmartPools white paper.

Supported protocols

Clients with adequate credentials and privileges can create, modify, and read data using one of the standard supported methods for communicating with the cluster:

- NFS (Network File System)

- SMB/CIFS (Server Message Block/Common Internet File System)

- FTP (File Transfer Protocol)

- HTTP (Hypertext Transfer Protocol)

- HDFS (Hadoop Distributed File System)

- REST API (Representational State Transfer Application Programming Interface)

- S3 (Object Storage API)

For the NFS protocol, OneFS supports both NFSv3 and NFSv4, plus NFSv4.1 in OneFS 9.3. Also, OneFS 9.2 and later include support for NFSv3 over RDMA.

On the Microsoft Windows side, the SMB protocol is supported up to version 3. As part of the SMB3 dialect, OneFS supports the following features:

- SMB3 Multi-path

- SMB3 Continuous Availability and Witness

- SMB3 Encryption

SMB3 encryption can be configured on a per-share, per-zone, or cluster-wide basis. Only operating systems that support SMB3 encryption can work with encrypted shares. These operating systems can also work with unencrypted shares if the cluster is configured to allow non-encrypted connections. Other operating systems can access non-encrypted shares only if the cluster is configured to allow non-encrypted connections.
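The access rules in the preceding paragraph can be summarized as a small decision function. This is a sketch of the stated rules only; the function and parameter names are hypothetical and do not correspond to any OneFS setting or API.

```python
from itertools import product


def can_access_share(
    client_supports_encryption: bool,
    share_encrypted: bool,
    cluster_allows_unencrypted: bool,
) -> bool:
    """Return True if the client can work with the share under the stated rules."""
    if share_encrypted:
        # Encrypted shares require a client that supports SMB3 encryption.
        return client_supports_encryption
    # Unencrypted shares are reachable by any client, but only when the
    # cluster is configured to allow non-encrypted connections.
    return cluster_allows_unencrypted


# Print the full truth table for the three inputs.
for client, share, cluster in product([True, False], repeat=3):
    print(client, share, cluster, "->", can_access_share(client, share, cluster))
```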

The file system root for all data in the cluster is /ifs (the OneFS file system). This can be presented through the SMB protocol as an ‘ifs’ share (\\<cluster_name>\ifs), through the NFS protocol as a ‘/ifs’ export (<cluster_name>:/ifs), and through the S3 protocol as an object interface.

Data is common between all protocols, so changes made to file content through one access protocol are instantly viewable from all others.

SmartQoS, introduced in OneFS 9.5, provides the ability to limit NFSv3, NFSv4, NFSoRDMA, S3, or SMB protocol operations per second (Protocol Ops), including mixed traffic to the same workload.

OneFS also provides full support for both IPv4 and IPv6 environments across the front-end Ethernet network(s), SmartConnect, and the complete array of storage protocols and management tools.

Additionally, OneFS CloudPools supports the storage APIs of the following cloud providers, allowing files to be stubbed out to a number of storage targets:

- Amazon Web Services S3

- Microsoft Azure

- Google Cloud Service

- Alibaba Cloud

- Dell ECS

- OneFS RAN (RESTful Access to Namespace)

More information is available in the CloudPools administration guide.

Non-disruptive operations - protocol support

OneFS contributes to data availability by supporting dynamic NFSv3 and NFSv4 failover and failback for Linux and UNIX clients, and SMB3 continuous availability for Windows clients. This ensures that when a node failure occurs, or preventative maintenance is performed, all in-flight reads and writes are handed off to another node in the cluster to complete, without any user or application interruption.

During failover, clients are evenly redistributed across all remaining nodes in the cluster, ensuring minimal performance impact. If a node is brought down for any reason, including a failure, the virtual IP addresses on that node are seamlessly migrated to other nodes in the cluster.
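A minimal sketch of this kind of redistribution is shown below. It hands a failed node's virtual IP addresses to the surviving nodes in round-robin order. The round-robin choice, the function name, the node names, and the addresses (taken from the 192.0.2.0/24 documentation range) are all assumptions for illustration and are not a description of how SmartConnect is implemented.

```python
from __future__ import annotations

from itertools import cycle


def fail_over(ip_map: dict[str, list[str]], failed_node: str) -> dict[str, list[str]]:
    """Return a new node -> IP list map with the failed node's IPs reassigned."""
    survivors = sorted(node for node in ip_map if node != failed_node)
    if not survivors:
        raise ValueError("no surviving nodes to take over the addresses")
    # Copy the survivors' existing addresses so the input map is not mutated.
    result = {node: list(ip_map[node]) for node in survivors}
    # Hand out the orphaned addresses one at a time across the survivors.
    for ip, node in zip(ip_map[failed_node], cycle(survivors)):
        result[node].append(ip)
    return result


before = {
    "node1": ["192.0.2.11", "192.0.2.12", "192.0.2.13"],
    "node2": ["192.0.2.21"],
    "node3": ["192.0.2.31"],
}
after = fail_over(before, "node1")
print(after)
```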

When the offline node is brought back online, SmartConnect automatically rebalances the NFS and SMB3 clients across the entire cluster to ensure maximum storage and performance utilization. For periodic system maintenance and software updates, this functionality allows for per-node rolling upgrades, affording full availability throughout the duration of the maintenance window.

File filtering

OneFS file filtering can be used across NFS and SMB clients to allow or disallow writes to an export, share, or access zone. This feature allows certain file extensions to be blocked, for example for files that might cause security problems, productivity disruptions, throughput issues, or storage clutter. Filtering can be configured with either an exclusion list, which blocks explicit file extensions, or an inclusion list, which explicitly allows writes of only certain file types.
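The difference between the two list types can be sketched as a simple extension check. The function name and the `"exclude"` and `"include"` mode strings are hypothetical and are not OneFS configuration values; the sketch only illustrates the allow and deny logic described above.

```python
from pathlib import PurePosixPath


def write_allowed(filename: str, extensions: set, mode: str) -> bool:
    """Decide whether a write is permitted under an exclusion or inclusion list."""
    # Compare extensions case-insensitively, e.g. ".MP3" matches ".mp3".
    suffix = PurePosixPath(filename).suffix.lower()
    listed = suffix in {ext.lower() for ext in extensions}
    if mode == "exclude":
        return not listed   # block only the listed extensions
    if mode == "include":
        return listed       # allow only the listed extensions
    raise ValueError(f"unknown filter mode: {mode!r}")


blocked_media = {".mp3", ".avi"}
print(write_allowed("song.MP3", blocked_media, "exclude"))
print(write_allowed("report.docx", blocked_media, "exclude"))

office_only = {".docx", ".xlsx"}
print(write_allowed("report.docx", office_only, "include"))
print(write_allowed("song.mp3", office_only, "include"))
```

Note that in this sketch a file with no extension passes an exclusion list but fails an inclusion list, since its empty suffix is not in either set.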