
Linux setup and configuration


Oracle databases are commonly deployed on Linux operating systems. The following subsections describe best practices when working with Dell Unity storage systems on Linux operating systems.

Discovering and identifying Dell Unity LUNs on a host

After the LUNs are created and host access is enabled on the Dell Unity system, the host operating system must scan for them before they can be used. On Linux, install the sg3_utils and lsscsi rpm packages, which provide useful utilities to discover and identify LUNs.

Identifying LUN IDs on Dell Unity storage

When a LUN is created without a specified Host LUN ID, Dell Unity assigns LUN IDs automatically, starting from 0 and incrementing by 1. LUN 0 typically represents the first LUN granted access to a host.

Perform the following in Unisphere to view the LUN ID information:

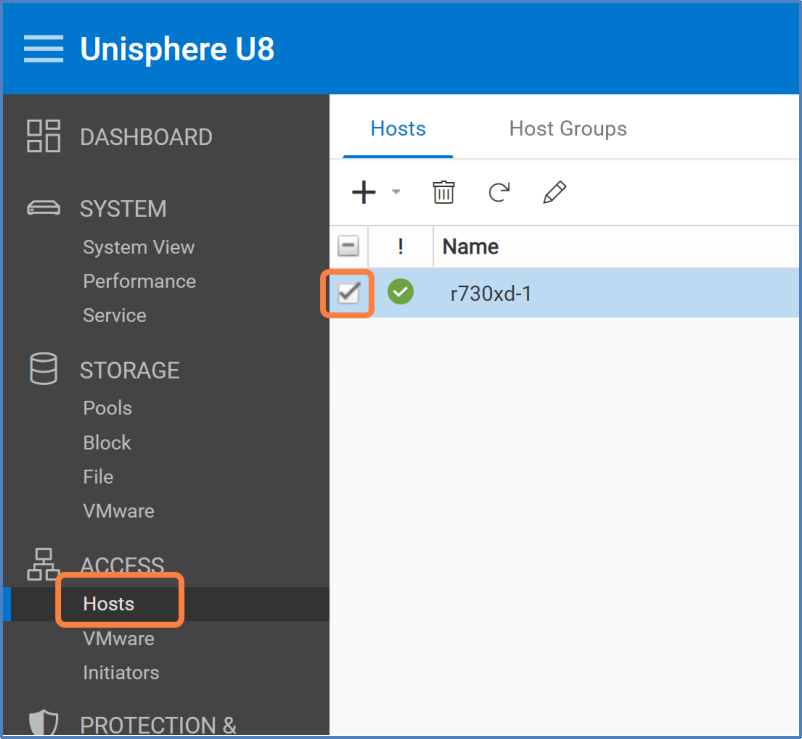

- In Unisphere’s navigation menu under ACCESS, click Hosts.

- Click the checkbox associated with the name of the host.

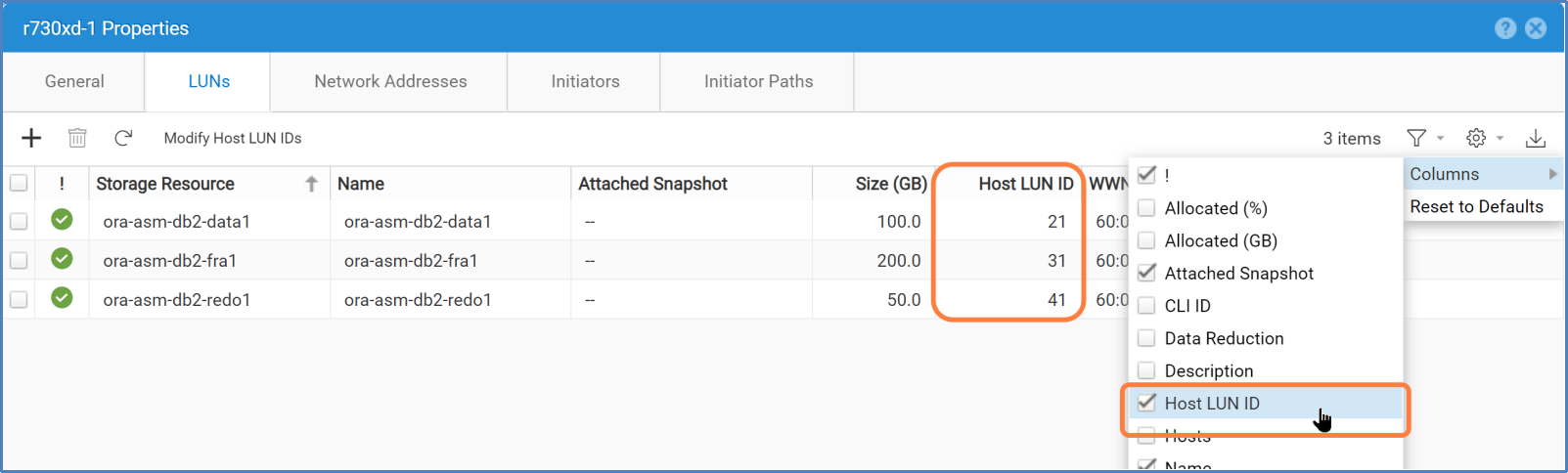

- Click Host Properties (pencil icon) > LUNs tab.

- If the Host LUN ID column is hidden from the default view, click the column filter (gear icon) > Columns > Host LUN ID.

Scanning for LUNs

LUNZ is a placeholder, not a real LUN. The Dell Unity system presents LUNZ to a Linux host so that the array is visible to the host when no LUNs are assigned to it. LUNZ always occupies LUN ID 0 on the host, and any I/O sent down the LUNZ paths results in errors.

To see if there are any LUNZ on the system, run the lsscsi command.

# lsscsi | grep DGC
[13:0:0:0] disk DGC LUNZ 4201 /dev/sde
[13:0:1:0] disk DGC LUNZ 4201 /dev/sdaf
[14:0:0:0] disk DGC LUNZ 4201 /dev/sdd
[14:0:1:0] disk DGC LUNZ 4201 /dev/sdag
[snipped]

If the host detects both LUN ID 0 and LUNZ, run rescan-scsi-bus.sh with the --forcerescan option. This removes LUNZ and allows the real LUN 0 to appear on the host. For example:

# /usr/bin/rescan-scsi-bus.sh --forcerescan
# lsscsi | grep DGC
[13:0:0:0] disk DGC VRAID 4201 /dev/sde
[13:0:1:0] disk DGC VRAID 4201 /dev/sdaf
[14:0:0:0] disk DGC VRAID 4201 /dev/sdd
[14:0:1:0] disk DGC VRAID 4201 /dev/sdag
[snipped]

When the Dell Unity LUNs have nonzero IDs, use the -a option instead.

# /usr/bin/rescan-scsi-bus.sh -a

Note: Omitting the --forcerescan option might prevent the operating system from discovering LUN 0 because of the LUNZ conflict.
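The choice between the two rescan options can be sketched as a small shell helper. This is a minimal sketch, not a Dell-provided tool: the function names lunz_devices and suggest_rescan are hypothetical, the helper only prints the suggested command instead of running it, and it assumes lsscsi's default column layout (vendor in the third field, model in the fourth, device file in the last).

```bash
#!/usr/bin/env bash
# Hypothetical helpers: read lsscsi-style text on stdin and suggest which
# rescan-scsi-bus.sh option to use. Nothing is rescanned here.

# Print the device file (last field) of every Dell Unity (DGC) LUNZ entry.
lunz_devices() {
  awk '$3 == "DGC" && $4 == "LUNZ" { print $NF }'
}

# $1: "yes" if Unisphere shows a LUN with Host LUN ID 0 for this host.
# Suggests --forcerescan when LUN 0 is assigned but LUNZ is still visible;
# otherwise suggests the -a form used for LUNs with nonzero IDs.
suggest_rescan() {
  if [ "$1" = "yes" ] && [ -n "$(lunz_devices)" ]; then
    echo "/usr/bin/rescan-scsi-bus.sh --forcerescan"
  else
    echo "/usr/bin/rescan-scsi-bus.sh -a"
  fi
}
```

For example, lsscsi | suggest_rescan yes prints the command to run on a host where LUN 0 has been assigned. Review the suggestion before running it, because the helper cannot see the Unisphere configuration itself.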

Identifying LUNs by WWNs

The most accurate way to identify a LUN on the host operating system is by its WWN. The Dell Unity system assigns a unique WWN to each LUN. To find a LUN's WWN in Unisphere, follow the steps in Identifying LUN IDs on Dell Unity storage, but display the WWN column instead of the Host LUN ID column.

Querying WWNs using scsi_id command

To query the WWN on a Linux operating system, run the following command against the device file:

# /usr/lib/udev/scsi_id --page=0x83 --whitelisted --device=<device>

Where <device> can be one of the following:

- Single path device (/dev/sde)

- Linux multipath device (/dev/mapper/mpathe)

- Dell PowerPath device (/dev/emcpowerc)

The string returned by the scsi_id command is the WWN of the Dell Unity LUN prefixed with the digit 3:

36006016010e0420093a88859586140a5
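When comparing host output with Unisphere, it can help to convert between the two notations. The sketch below uses hypothetical function names and assumes that Unisphere displays the WWN as uppercase hex byte pairs separated by colons; adjust the formatting if your Unisphere version displays it differently.

```bash
#!/usr/bin/env bash
# Hypothetical helpers for matching host-side and Unisphere WWNs.
# Assumption: Unisphere shows the WWN as uppercase hex pairs separated by colons.

# scsi_id output (36006016...a5) -> Unisphere-style WWN (60:06:01:60:...:A5)
scsi_id_to_unisphere_wwn() {
  local hex=${1#3}                                   # drop the leading 3
  hex=$(printf '%s' "$hex" | tr '[:lower:]' '[:upper:]')
  printf '%s' "$hex" | sed 's/../&:/g; s/:$//'      # colon after each byte
}

# Unisphere-style WWN -> scsi_id / multipath wwid form (3 + lowercase hex)
unisphere_wwn_to_scsi_id() {
  printf '3%s' "$(printf '%s' "$1" | tr -d ':' | tr '[:upper:]' '[:lower:]')"
}
```

For example, scsi_id_to_unisphere_wwn "$(/usr/lib/udev/scsi_id --page=0x83 --whitelisted --device=/dev/sde)" prints the value to look for in the WWN column.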

Querying WWNs using multipath command

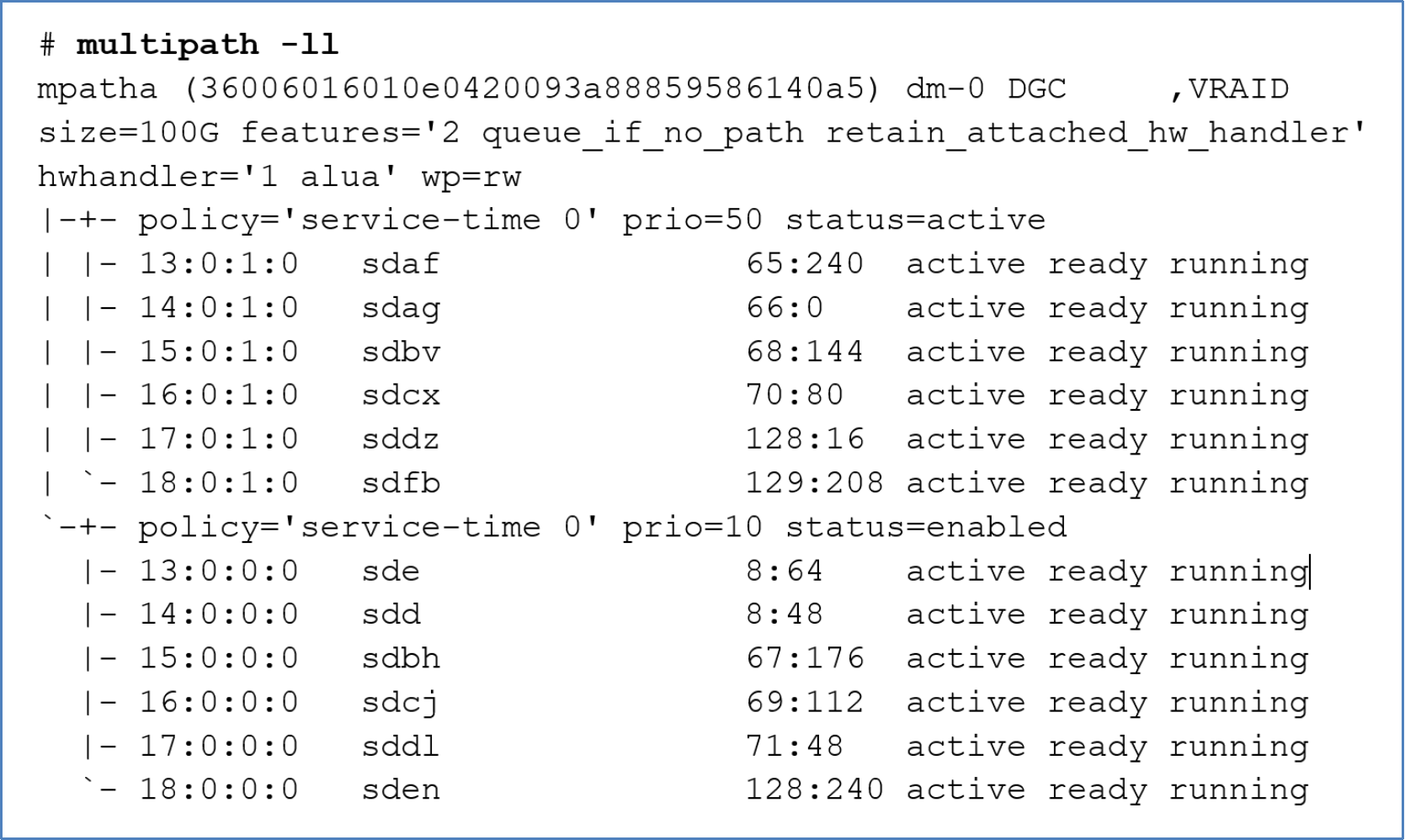

If the system has the Linux device-mapper-multipath software enabled, the multipath -ll command displays the multipath device properties, including the WWN. For example:

Figure 2. multipath -ll

Querying WWNs using powermt command

Similarly, when PowerPath is enabled on the system, the powermt command displays the multipath device properties, including the WWN.

# powermt display dev=all

Multipathing

Multipathing is a software solution implemented at the host operating system level. While multipathing is optional, it provides path redundancy, failover, and performance-enhancing capabilities. Deploy it in production environments and in any environment where availability and performance are critical.

Main benefits of using an MPIO solution

- Increase database availability by providing automatic path failover and failback

- Enhance database I/O performance by automatically balancing I/O across multiple parallel paths

- Ease administration by providing persistent user-friendly names for the storage devices across cluster nodes

Multipath software solutions

Several multipath software solutions are available, and the administrator can choose the one best suited to the environment. Common choices include the following:

- Native Linux multipath (device-mapper-multipath)

- Dell PowerPath

- Symantec Veritas Dynamic Multipathing (VxDMP)

The native Linux multipath solution is supported and bundled with most popular Linux distributions in use today. Because the software is widely available at no additional cost, many administrators prefer it over third-party solutions.

Unlike the native Linux multipath solution, both Dell PowerPath and Symantec VxDMP provide extended capabilities for some storage platforms and software integrations. Both solutions also offer support for numerous operating systems in addition to Linux.

Only one multipath software solution should be enabled on the host, and the same solution should be deployed in a cluster on all cluster hosts.

See the vendor's multipath solution documentation for more information. For information about operating systems supported by Dell PowerPath, see Support for PowerPath | Overview | Dell US.

Connectivity guidelines

The following list summarizes array-to-host connectivity best practices. For details, review the documents Configuring Hosts to Access Fibre Channel (FC) or iSCSI Storage and Dell Unity: High Availability.

- Have at least two FC/iSCSI HBAs or ports to provide path redundancy.

- Connect the same port on both Dell Unity storage processors (SPs) to the same switch, because the Dell Unity system matches the physical port assignment on both SPs.

- Use multiple switches to provide switch redundancy.

Configuration file

To ease deployment, native Linux multipath comes with a set of default settings that cover storage models from different vendors, including the Dell Unity system. These defaults allow the software to work with the Dell Unity system without additional configuration. However, they might not be optimal for all situations and should be reviewed and modified if necessary.

The multipath daemon configuration file must be created on newly installed systems. As a starting point, copy the basic template from /usr/share/doc/device-mapper-multipath-<version>/multipath.conf to /etc/multipath.conf. Any setting that is not defined explicitly in /etc/multipath.conf takes its default value. The following command lists the full set of settings, both explicitly set and default; search the output for DGC to find the Dell Unity settings. The default settings generally work without any issues.

# multipathd -k"show config"

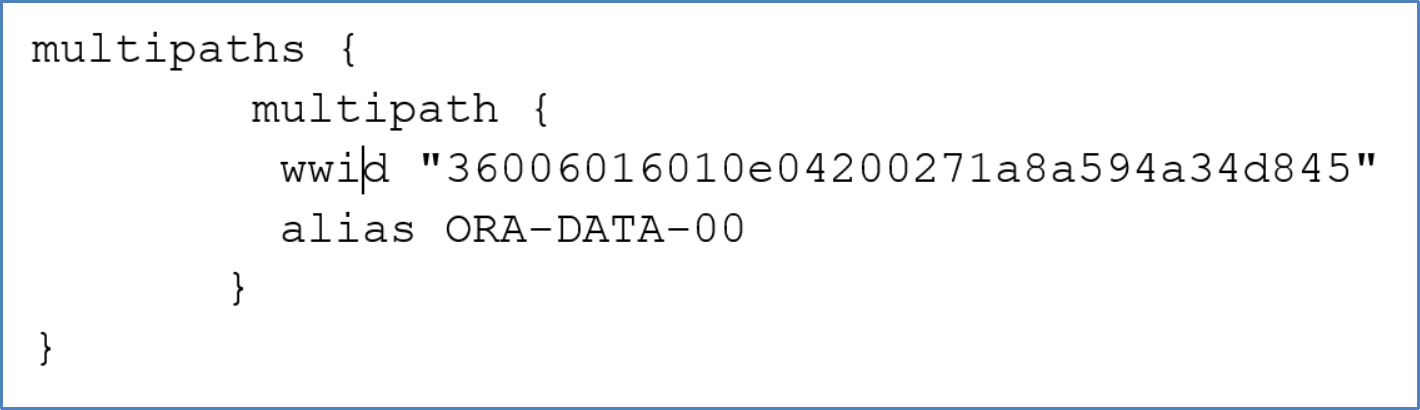
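Rather than searching the full output by hand, the Dell Unity entries can be extracted with a short filter. This is a minimal sketch with a hypothetical function name, dgc_stanzas; it assumes each device entry in the show config output is a flat device { ... } block with a vendor "DGC" line, as in typical output.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print every "device { ... }" stanza whose vendor is
# "DGC" from multipathd "show config" output read on stdin.
# Assumes flat device stanzas (no nested braces inside a device block).
dgc_stanzas() {
  awk '
    /^[ \t]*device[ \t]*[{]/ { inblk = 1; buf = "" }   # start of a device block
    inblk { buf = buf $0 "\n" }                        # collect its lines
    inblk && /^[ \t]*[}]/ {                            # end of the block
      if (buf ~ /vendor[ \t]+"DGC"/) printf "%s", buf
      inblk = 0
    }
  '
}
```

Usage: multipathd -k"show config" | dgc_stanzas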

Creating aliases

Assigning meaningful names (aliases) to multipath devices is not mandatory, but it is generally a good idea. For example, create aliases based on the application type and its environment. The following snippet in the multipaths section assigns the alias ORA-DATA-00 to the Dell Unity LUN with WWN 36006016010e04200271a8a594a34d845.

Figure 3. Multipath device configuration

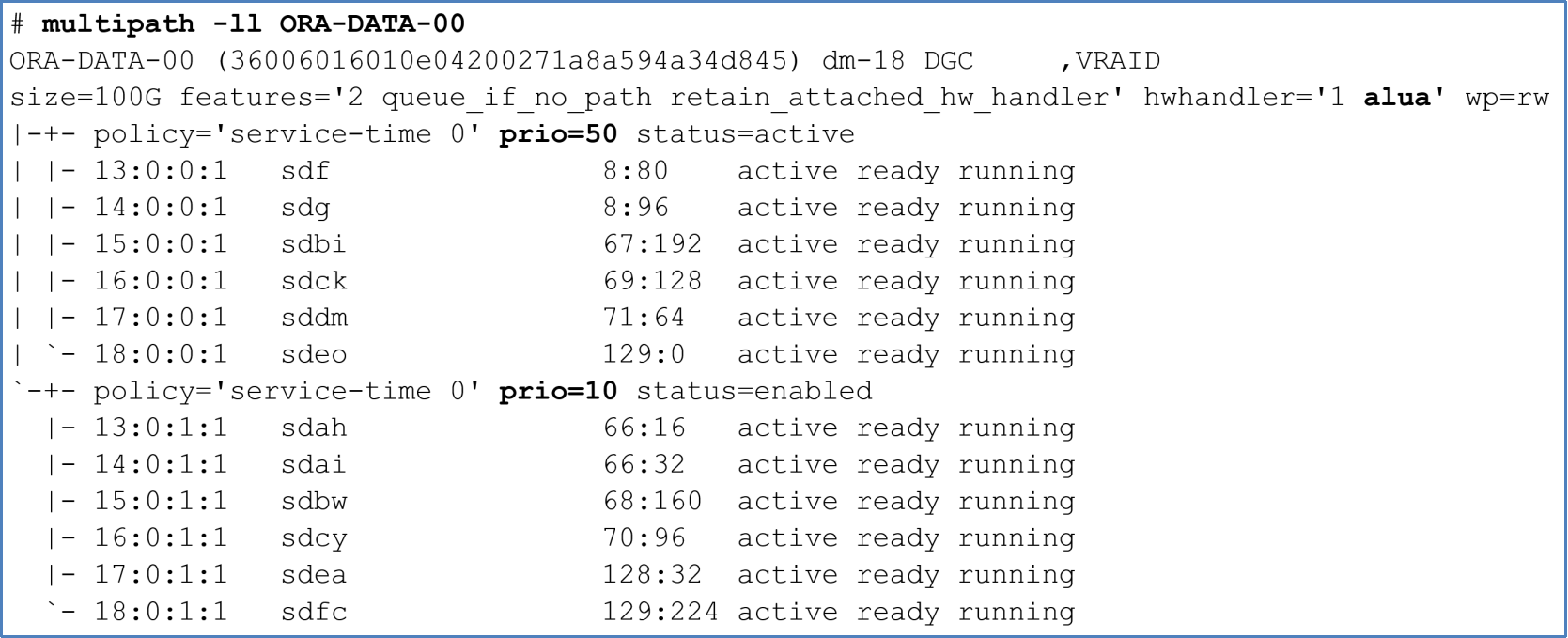
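When many LUNs need aliases, the multipaths entry can be generated instead of typed. This is a minimal sketch: alias_stanza is a hypothetical function, and its output is meant to be merged by hand into the multipaths section of /etc/multipath.conf. After editing the file, reload the configuration, for example with multipathd -k"reconfigure".

```bash
#!/usr/bin/env bash
# Hypothetical helper: print a multipaths stanza that assigns alias $1 to the
# multipath device with WWID $2 (the scsi_id-style value that starts with 3).
alias_stanza() {
  local alias=$1 wwid=$2
  cat <<EOF
multipaths {
    multipath {
        wwid  $wwid
        alias $alias
    }
}
EOF
}
```

Usage: alias_stanza ORA-DATA-00 36006016010e04200271a8a594a34d845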

Asymmetric Logical Unit Access (ALUA)

Dell Unity systems support ALUA for host access. ALUA allows the host operating system to distinguish optimized paths from nonoptimized paths. Optimized paths are those connected to the SP that owns the LUN, and they are assigned a higher priority. The default multipath settings reflect ALUA support on Dell Unity storage. The following example shows LUN ORA-DATA-00 with 12 paths divided into two groups. The optimized paths have a priority of 50, and the nonoptimized paths have a priority of 10.

Figure 4. Optimized and nonoptimized multipath paths

I/Os are sent down the optimized paths when possible. If I/Os are sent down the nonoptimized paths, the peer SP redirects the I/Os to the primary SP through the internal bus. When the Dell Unity system senses large amounts of nonoptimized I/Os, it automatically trespasses the LUN from the primary SP to the peer SP to optimize the data paths.
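To confirm the priority split on a host, the path-group lines of multipath -ll output can be summarized. This sketch assumes the common device-mapper-multipath output layout, in which each path-group line contains prio=N and each path line contains a host:channel:target:lun address; the function name summarize_path_groups is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: summarize multipath -ll output read on stdin as one line
# per path group: its ALUA priority and the number of paths in the group.
# Assumes path-group lines contain "prio=N" and path lines contain an
# H:C:T:L address such as 13:0:0:1.
summarize_path_groups() {
  awk '
    /prio=[0-9]+/ {
      if (groups) printf "prio=%s paths=%d\n", prio, paths   # close previous group
      match($0, /prio=[0-9]+/)
      prio = substr($0, RSTART + 5, RLENGTH - 5)             # digits after "prio="
      groups++; paths = 0; next
    }
    groups && / [0-9]+:[0-9]+:[0-9]+:[0-9]+ / { paths++ }    # a path line
    END { if (groups) printf "prio=%s paths=%d\n", prio, paths }
  '
}
```

Usage: multipath -ll ORA-DATA-00 | summarize_path_groups. For the LUN described above, two groups would be expected, one with priority 50 and one with priority 10.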

LUN partition

A LUN can be used whole, or it can be divided into multiple partitions. Certain applications, such as Oracle ASMLib, recommend partitioned LUNs rather than whole LUNs. Dell Technologies recommends using whole LUNs without partitions wherever appropriate because this offers the most flexibility for configuring and managing the underlying storage.

See the sections Oracle Automatic Storage Management and File systems for guidance on choosing a strategy to grow storage space.

Partition alignment

When partitioning a LUN, align the partition on a 1 MB boundary. Either fdisk or parted can be used to create the partition. Partitions of 2 TB or larger require a GPT label, which older fdisk versions do not support, so use parted in that case.

Creating partition using parted

Before creating a partition, label the device as GPT. Then, specify the partition offset at sector 2048, which is a 1 MB offset from the beginning of a device with 512-byte logical sectors. The following command labels device /dev/mapper/orabin-std and creates a single partition that consumes the entire LUN. Once the partition is created, use the partition device, in this example /dev/mapper/orabin-std1, to create a file system or ASMLib volume.

parted --script /dev/mapper/orabin-std mklabel gpt mkpart primary 2048s 100% quit ;

Note: parted might issue a misalignment warning, which can be safely ignored.
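The 1 MB offset follows from simple arithmetic: with 512-byte logical sectors, 2048 sectors × 512 bytes = 1,048,576 bytes (1 MiB). The sketch below, a hypothetical is_1mib_aligned function, applies the same check to any start sector and sector size. The start sector can be read with parted <device> unit s print and the logical sector size with blockdev --getss <device>; parted also offers its own check, parted <device> align-check optimal 1.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if a partition starting at sector $1 begins on
# a 1 MiB (1048576-byte) boundary. $2 is the logical sector size in bytes
# (defaults to 512, the size assumed by the 2048s offset above).
is_1mib_aligned() {
  local start=$1 sector_size=${2:-512}
  (( start * sector_size % 1048576 == 0 ))
}
```

Usage: is_1mib_aligned 2048 "$(blockdev --getss /dev/mapper/orabin-std)" && echo aligned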

Partitioned devices and filesystems

Create file systems on a properly aligned partition device. For example:

# mkfs.ext4 /dev/mapper/orabin-std1

I/O scheduler for Oracle ASM devices

The function of the disk I/O scheduler is to order, delay, or merge I/O requests submitted to a storage device to improve throughput and latency. Linux offers several I/O schedulers, each with a different method for managing I/O requests. The recommended I/O scheduler depends on several factors, including the operating system version, UEK version, Oracle version, storage media used in the storage array, and type of database processing.

Oracle Linux 8 has four different I/O schedulers:

none: Implements a first-in-first-out (FIFO) scheduling algorithm. The 'none' scheduler is recommended when high-performance SSDs are used or for CPU-bound systems with fast storage. 'none' is the default I/O scheduler.

mq-deadline: Sorts queued I/O requests into read or write batches. Batches are then performed in increasing logical block address (LBA) order so that writes are organized by their location on storage. mq-deadline is suitable for most use cases, but it is best suited for traditional hard drive storage with a SCSI interface and where write operations are mostly asynchronous.

kyber: Tunes itself by analyzing each I/O request to obtain the least amount of latency for each request. kyber is recommended, as an alternative to the 'none' scheduler, for high-performance SSDs or CPU-bound systems with fast storage.

bfq: Prioritizes low latency over maximum throughput. bfq is recommended for copying large files, for systems with traditional hard drives with a SCSI interface, and for desktop or interactive activities.

For additional use cases, see Red Hat Enterprise Linux 8, Optimizing system throughput, latency, and power consumption.

Earlier Linux versions implemented the noop, deadline, and cfq I/O schedulers.

Before changing the default I/O scheduler in production, review the latest Oracle documentation and consult Oracle Support for recommended values. Then change the scheduler in a test environment, simulate production activity, and monitor performance before changing the value in production.

To verify the I/O scheduler, use the following command. The active scheduler for each device is shown in brackets:

# grep . /sys/block/sd*/queue/scheduler
/sys/block/sdaa/queue/scheduler:[none] mq-deadline kyber bfq
/sys/block/sdab/queue/scheduler:[none] mq-deadline kyber bfq
/sys/block/sdac/queue/scheduler:[none] mq-deadline kyber bfq
[snipped]
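On hosts with many devices, this output can be filtered to list only the devices whose active scheduler differs from the intended one. This is a minimal sketch with a hypothetical function name, schedulers_not; it assumes the path:line format that grep prints when it reads multiple files.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read "grep . /sys/block/sd*/queue/scheduler" output on
# stdin and print "<device> <active scheduler>" for every device whose active
# (bracketed) scheduler is not $1.
schedulers_not() {
  awk -F: -v want="$1" '{
    n = split($1, p, "/")                  # /sys/block/<dev>/queue/scheduler
    dev = (n >= 4) ? p[4] : $1
    s = $2; i = index(s, "["); j = index(s, "]")
    active = (i && j > i) ? substr(s, i + 1, j - i - 1) : "unknown"
    if (active != want) print dev, active
  }'
}
```

Usage: grep . /sys/block/sd*/queue/scheduler | schedulers_not mq-deadline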

To set the I/O scheduler persistently, create a udev rule that updates the devices. See the section Linux dynamic device management (udev) for more information about using udev to set persistent ownership and permissions.

The following example sets the mq-deadline I/O scheduler on all /dev/sd* devices. The rule is appended to the /etc/udev/rules.d/99-oracle-asmdevices.rules file, and the rules are then reloaded and applied.

# cat /etc/udev/rules.d/99-oracle-asmdevices.rules
ACTION=="add|change", KERNEL=="sd*", RUN+="/bin/sh -c '/bin/echo mq-deadline > /sys$env{DEVPATH}/queue/scheduler'"
# udevadm control --reload-rules
# udevadm trigger
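Because the rule is appended to an existing rules file, rerunning a setup script could add duplicate lines. The sketch below, using a hypothetical add_scheduler_rule function, appends the rule shown above only when an identical line is not already present. Run udevadm control --reload-rules and udevadm trigger afterward, as shown.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append the mq-deadline udev rule to the rules file $1
# (for example /etc/udev/rules.d/99-oracle-asmdevices.rules) only if an
# identical line is not already there, so rerunning it is harmless.
add_scheduler_rule() {
  local file=$1
  local rule='ACTION=="add|change", KERNEL=="sd*", RUN+="/bin/sh -c '\''/bin/echo mq-deadline > /sys$env{DEVPATH}/queue/scheduler'\''"'
  touch "$file"
  grep -qxF -- "$rule" "$file" || printf '%s\n' "$rule" >> "$file"
}
```

Usage: add_scheduler_rule /etc/udev/rules.d/99-oracle-asmdevices.rules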