Throughput efficiency analysis with 2 second TTFT constraint



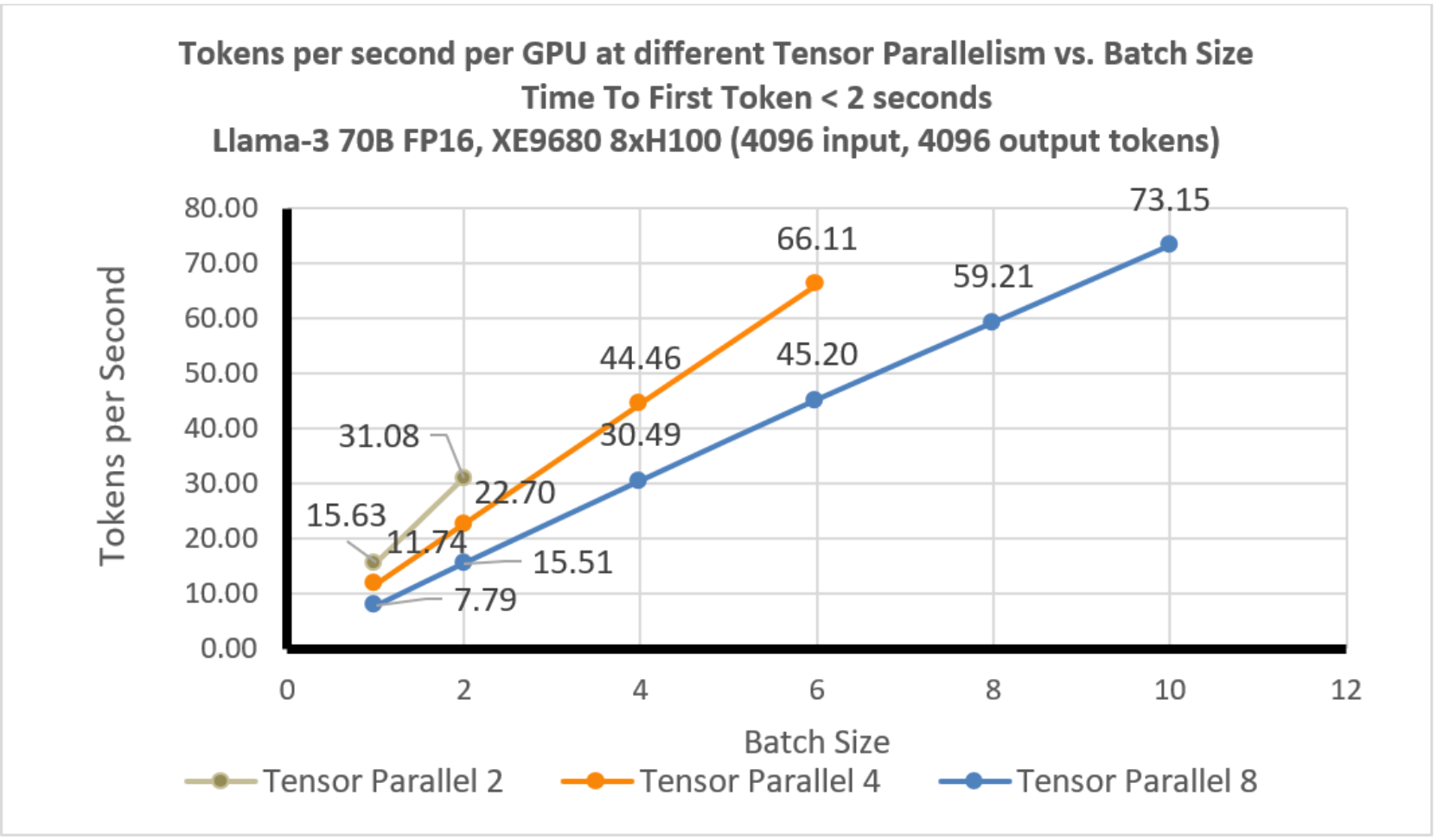

In this scenario, we plot the per-GPU throughput efficiency for different tensor parallelism degrees at the full 8k-token context length (4k input and 4k output), keeping only configurations whose time to first token (TTFT) stays under 2 seconds.

Figure 3. Llama-3 70B: Tensor Parallelism Efficiency TPS vs Batch Size: TTFT < 2 seconds

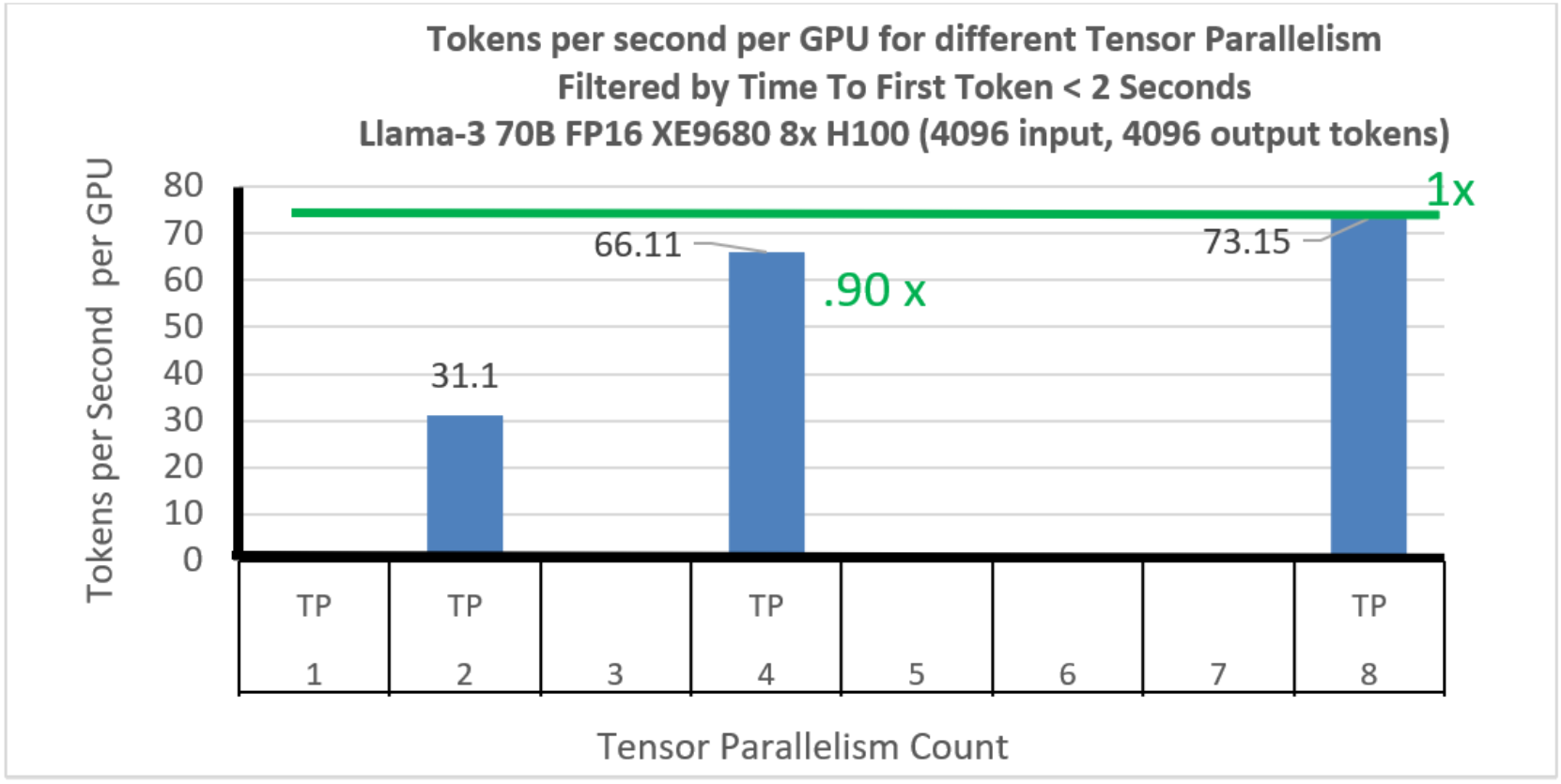
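The per-GPU efficiency metric plotted above can be sketched in a few lines of Python. This is a minimal illustration, not the benchmark harness used for the paper: the `Run` record, the helper names, and every number in `runs` are hypothetical placeholders. It also assumes that the "best" batch size for a tensor parallelism degree is the one with the highest per-GPU throughput among runs that satisfy the TTFT limit.

```python
from dataclasses import dataclass

TTFT_LIMIT_S = 2.0  # constraint used in this section


@dataclass(frozen=True)
class Run:
    """One benchmark measurement (hypothetical record layout)."""
    tp: int              # tensor parallelism degree = GPUs per model instance
    batch_size: int
    tokens_per_s: float  # aggregate throughput of the whole instance
    ttft_s: float        # time to first token, in seconds


def per_gpu_tps(run: Run) -> float:
    """Throughput normalized by the number of GPUs the instance occupies."""
    return run.tokens_per_s / run.tp


def best_per_tp(runs, ttft_limit_s=TTFT_LIMIT_S):
    """For each TP degree, keep the run with the highest per-GPU throughput
    among the runs whose TTFT is below the limit."""
    best = {}
    for run in runs:
        if run.ttft_s >= ttft_limit_s:
            continue  # violates the TTFT constraint
        current = best.get(run.tp)
        if current is None or per_gpu_tps(run) > per_gpu_tps(current):
            best[run.tp] = run
    return best


def relative_efficiency(best):
    """Per-GPU throughput of each TP degree as a fraction of the best one."""
    peak = max(per_gpu_tps(run) for run in best.values())
    return {tp: per_gpu_tps(run) / peak for tp, run in best.items()}


# Placeholder measurements for illustration only; NOT results from the paper.
runs = [
    Run(tp=2, batch_size=1, tokens_per_s=100.0, ttft_s=1.5),
    Run(tp=4, batch_size=8, tokens_per_s=900.0, ttft_s=1.2),
    Run(tp=4, batch_size=16, tokens_per_s=1200.0, ttft_s=2.4),  # too slow
    Run(tp=8, batch_size=16, tokens_per_s=2000.0, ttft_s=1.0),
    Run(tp=8, batch_size=32, tokens_per_s=2400.0, ttft_s=1.8),
]

best = best_per_tp(runs)
efficiency = relative_efficiency(best)
for tp in sorted(best):
    print(tp, best[tp].batch_size, round(efficiency[tp], 3))
```

Dividing by the TP degree is what makes the comparison fair: an 8-GPU instance must deliver more than twice the aggregate throughput of a 4-GPU instance to score higher on this metric.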

In Figure 4, we show the per-GPU throughput efficiency at the best batch size (the maximum batch size for each tensor parallelism degree). The TP2 case does not have enough pooled memory to be effective, but with TP4 the throughput per GPU is quite good (0.9x of the peak at TP8), and it continues to improve up to the peak efficiency seen at TP8.
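A rough back-of-the-envelope calculation shows why pooled memory limits the batch size at TP2. The sketch below is an upper bound under stated assumptions, not the paper's methodology: it assumes 16-bit weights and a 16-bit KV cache (the precision is not stated in this section), treats each H100 as 80 GiB, rounds the model to 70 billion parameters, and ignores activations and runtime overhead. The Llama-3 70B shape (80 layers, 8 KV heads, head dimension 128) comes from the published model configuration; the function names are hypothetical.

```python
GIB = 1024 ** 3


def kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem):
    """KV-cache bytes for one token: a key and a value vector per layer."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def max_sequences(tp, gpu_mem_gib, n_params, weight_bytes,
                  kv_per_token, context_tokens):
    """Upper bound on concurrent full-context sequences that fit in the
    memory pooled across `tp` GPUs after the weights are loaded."""
    pooled = tp * gpu_mem_gib * GIB
    free = pooled - n_params * weight_bytes
    if free <= 0:
        return 0  # the weights alone do not fit
    return free // (kv_per_token * context_tokens)


# Assumed configuration (see the lead-in): Llama-3 70B, 16-bit everywhere.
KV_PER_TOKEN = kv_bytes_per_token(n_layers=80, n_kv_heads=8,
                                  head_dim=128, bytes_per_elem=2)
CONTEXT_TOKENS = 8192  # 4k input + 4k output

for tp in (2, 4, 8):
    limit = max_sequences(tp=tp, gpu_mem_gib=80,
                          n_params=70_000_000_000, weight_bytes=2,
                          kv_per_token=KV_PER_TOKEN,
                          context_tokens=CONTEXT_TOKENS)
    print(f"TP{tp}: at most {limit} sequences at full context")
```

Under these assumptions the weights consume most of the memory available at TP2, leaving room for only a handful of full-context sequences, while TP4 and TP8 leave far more space for the KV cache and therefore for larger batches.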