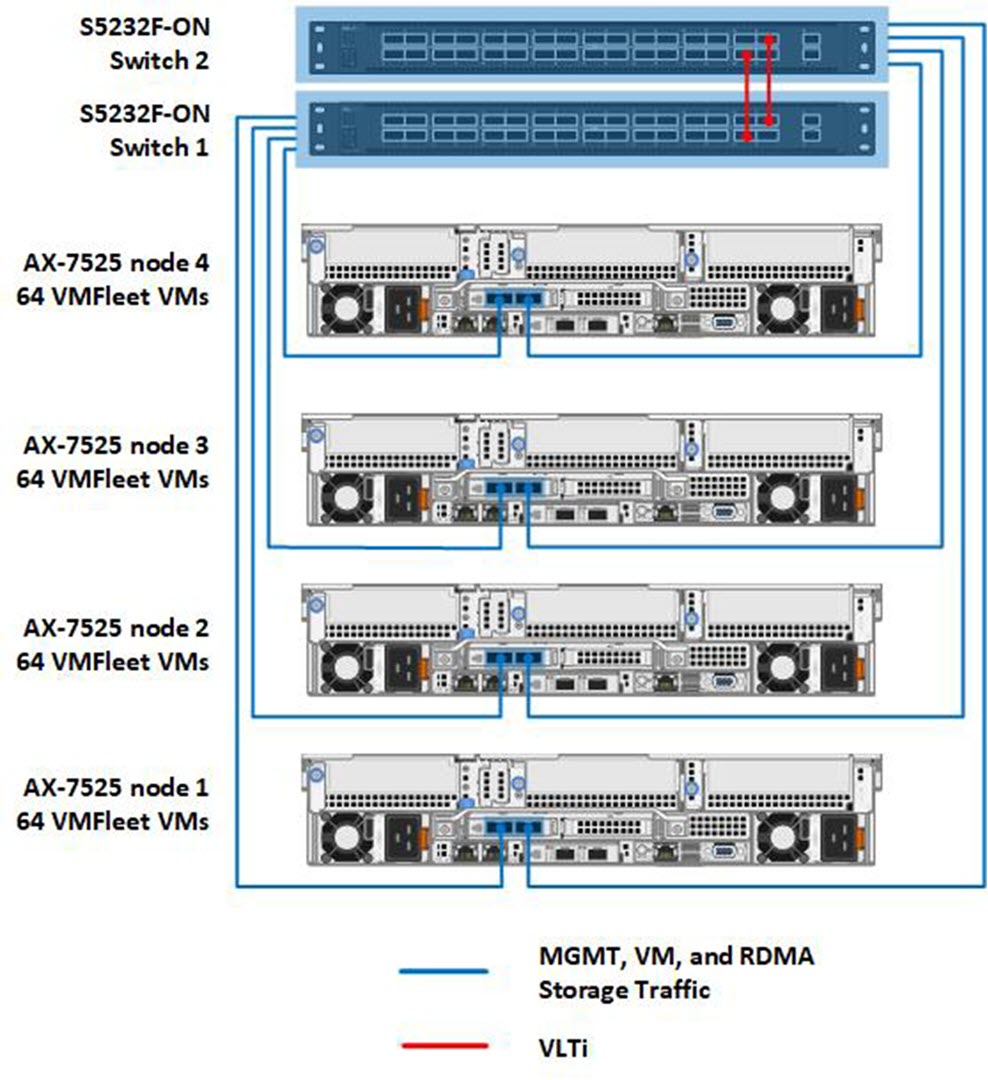

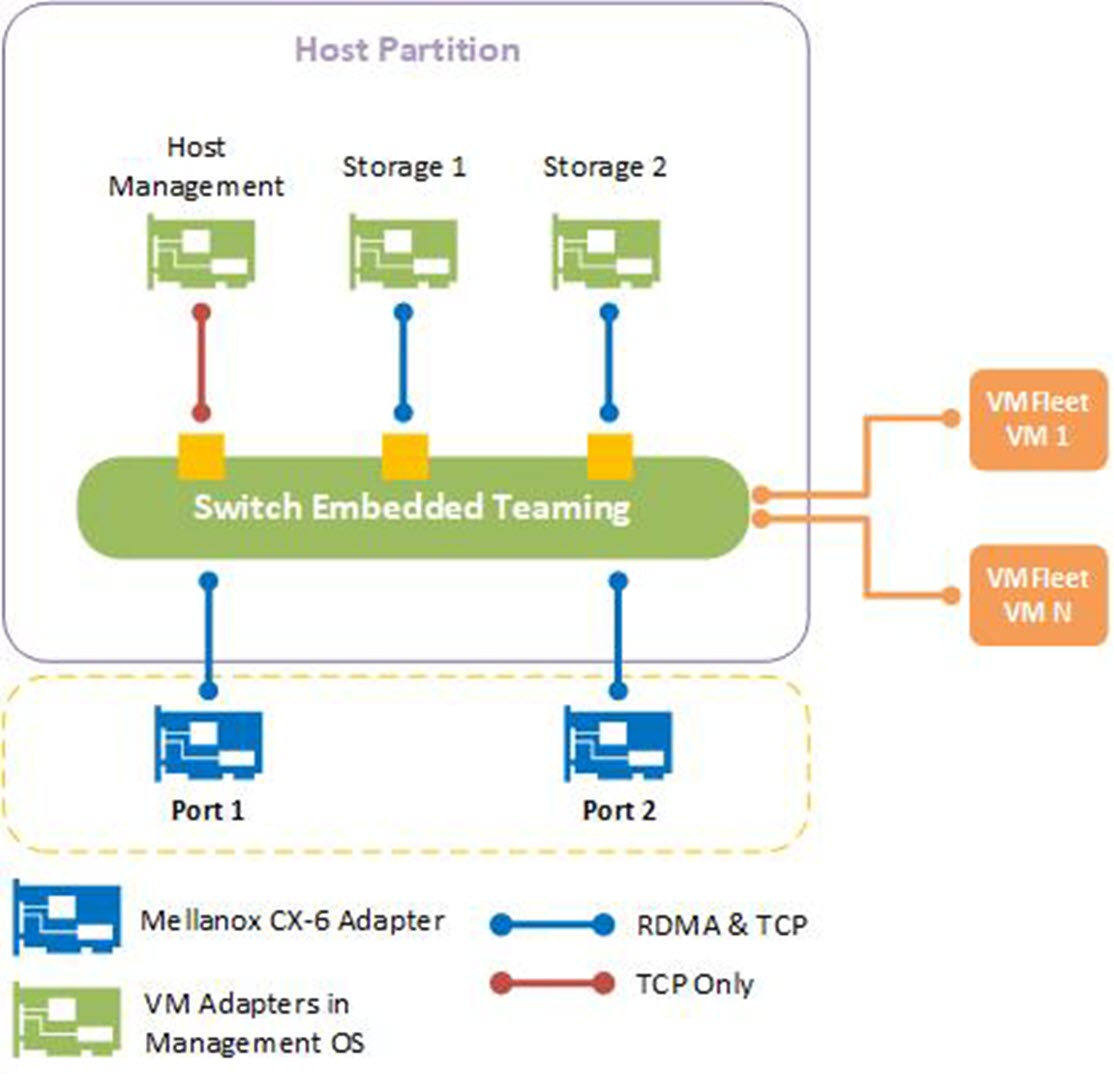

Figure 4 shows the cluster's physical networking as built in the lab. We set up a scalable, fully converged network topology in which management, VM, and RDMA storage traffic all traverse the two 100 GbE ports of a Mellanox ConnectX-6 adapter. Because all three traffic classes share the same ports, this topology required Data Center Bridging (DCB) configuration on the S5232F-ON ToR switches and Quality of Service (QoS) configuration in the host operating system. Figure 5 shows the virtual network configuration on the host operating system using Switch Embedded Teaming (SET).

Ancillary services that are required for cluster operations, such as Active Directory, DNS, and a file server or cloud-based witness, are outside the scope of this white paper. For all the steps required to deploy this cluster, see our Deployment Guide and our Network Integration and Host Network Configuration Options Guide.

The following two tables provide additional detail about the cluster configuration and AX-7525 specifications as built in the lab. We chose the three-way mirror resiliency type for the volumes we created with VMFleet because of its superior performance compared to erasure coding options in Storage Spaces Direct. The raw storage capacity of the cluster was a little over 150 TB. This resulted in just under 50 TB of usable storage capacity due to three-way mirroring's capacity efficiency of 33 percent. Microsoft and Dell Technologies also recommend leaving reserve capacity equivalent to the capacity of one drive per server.
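The capacity arithmetic above can be sketched in a few lines of Python. This is an illustrative calculation only, not a sizing tool: the function name `usable_capacity_tb` is hypothetical, the raw figure is rounded to 150 TB, and the per-drive size is an assumption derived by dividing that raw figure evenly across the 4 x 24 NVMe drives listed in the tables.

```python
def usable_capacity_tb(raw_tb: float, copies: int = 3, reserve_tb: float = 0.0) -> float:
    """Return usable capacity of a mirrored pool after setting aside reserve capacity.

    A three-way mirror keeps three copies of the data, so its capacity
    efficiency is 1/3 (about 33 percent).
    """
    if copies < 1:
        raise ValueError("copies must be at least 1")
    if not 0.0 <= reserve_tb <= raw_tb:
        raise ValueError("reserve_tb must be between 0 and raw_tb")
    return (raw_tb - reserve_tb) / copies


# Figures taken from the text and tables; raw capacity is rounded.
RAW_TB = 150.0
NODES = 4
DRIVES_PER_NODE = 24

# Assumption: all drives are the same size, so one drive is raw / drive count.
drive_tb = RAW_TB / (NODES * DRIVES_PER_NODE)

# Recommended reserve: the capacity of one drive per server.
reserve_tb = NODES * drive_tb

print(f"Without reserve: {usable_capacity_tb(RAW_TB):.1f} TB usable")
print(f"With reserve:    {usable_capacity_tb(RAW_TB, reserve_tb=reserve_tb):.1f} TB usable")
```

Subtracting the reserve before dividing by the number of copies treats the reserve as raw capacity left unallocated in the pool, which is one reasonable reading of the recommendation.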

| Cluster design elements | Description |
| --- | --- |
| Cluster node model | AX-7525 |
| Number of cluster nodes | 4 |
| Network switch model | Dell PowerSwitch S5232F-ON featuring 32 x 100 GbE QSFP28 ports |
| Number of ToR network switches | 2 |
| Network topology | Fully converged network configuration |
| Volume resiliency | Three-way mirror |
| Usable storage capacity | Approximately 50 TB |

| Resources per cluster node | Description |
| --- | --- |
| CPU | Dual-socket AMD EPYC 7742 64-Core Processor |
| Memory | 1 TB 3200 MHz DDR4 RAM |
| Storage controller for operating system | BOSS-S1 adapter card |
| Physical drives for operating system | 2 x M.2 480 GB SATA drives configured as RAID 1 |
| Physical drives for Storage Spaces Direct | 24 NVMe drives (PCIe Gen 4) |
| Network adapter for management, VM, and storage traffic | 1 x Mellanox ConnectX-6 DX Dual Port 40/100 GbE QSFP56 Adapter |
| Operating system | Microsoft Azure Stack HCI, version 20H2 |