
Implementation of best practices


This section describes how to implement the best practices for Dell EMC JetPack-automated and manual Red Hat OpenStack Platform deployments.

Red Hat OpenStack Platform can be deployed either manually or with the automation scripts provided in the JetPack automation toolkit. Manual deployment is typical but time-consuming, because installation and configuration are performed by hand[7]. The JetPack automation scripts simplify the deployment. Where applicable, the sections below give separate instructions for the suggested tunings in manual and automated deployments.

The best practices discussed below have been validated and implemented on Dell PowerEdge R740xd servers provided under Dell EMC Ready Architecture RHOSP 16.1 [8].

Relation between NIC and NUMA socket

NUMA (non-uniform memory access) is a hardware architecture that divides processor cores into one or more NUMA nodes. Each NUMA node has its own set of CPUs and its own local memory. Memory locality affects performance: a processor accessing memory on a neighboring NUMA node is slower than one using its own local memory. Performance can therefore improve when the NIC and the CPUs allocated to VMs belong to the same NUMA node. The relationship between the two components can be verified through the steps discussed below.

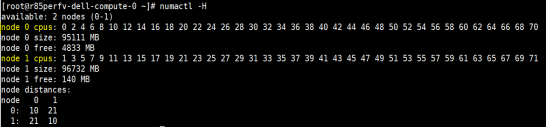

Logical cores are divided equally between the two NUMA nodes on each compute host. The following snapshot shows the vCPU distribution on each compute node for the current setup.

Figure 5. Distribution of cores across NUMA sockets

After RHOSP 16.1 installation, the NUMA socket of the DPDK-enabled interfaces can be verified using the commands below.

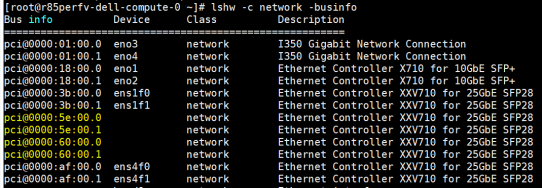

List all the interfaces on the compute node with their PCIe addresses:

# lshw -c network -businfo

Figure 6. List of interfaces with PCIe Addresses

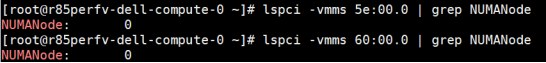

Find the NUMA socket for DPDK interfaces using their PCIe addresses.

# lspci -vmms 01:00.0 | grep NUMANode

The snapshot above confirms that the DPDK ports all belong to the same NUMA socket, socket 0.

Note: This step is valid for both manual and automated deployments.
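The same check can be scripted. The following is a minimal Python sketch, assuming a Linux compute host that exposes PCI devices under /sys/bus/pci/devices. The function name pci_numa_node and the address 0000:01:00.0 (the 01:00.0 address used above, written with the default PCI domain) are illustrative and not part of the original procedure.

```python
from pathlib import Path


def pci_numa_node(pci_addr, sysfs_root="/sys/bus/pci/devices"):
    """Return the NUMA node of a PCI device, or None if it cannot be read.

    The kernel reports -1 when no NUMA affinity is known for the device.
    """
    node_file = Path(sysfs_root) / pci_addr / "numa_node"
    try:
        return int(node_file.read_text().strip())
    except (OSError, ValueError):
        return None


if __name__ == "__main__":
    # Assumed address: 01:00.0 in PCI domain 0000.
    print(pci_numa_node("0000:01:00.0"))
```

Running the function for every DPDK port and comparing the results gives a quick confirmation that all ports share one NUMA node.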

Isolated CPU allocation for PMD and guest VMs

The current setup (see Appendix A) isolates the CPUs for DPDK Poll Mode Drivers (PMDs) and guest VMs from the CPUs allocated to host processes. Of the 72 cores on a host, four are dedicated to host processes with the HostCpusList parameter, and ten are assigned as PMD cores (eight from NUMA socket 0, two from NUMA socket 1) with the NeutronDpdkCoreList parameter. The default convention is to allocate one PMD thread per NUMA node. Guest VMs use the remaining cores through the NovaVcpuPinSet parameter.

These updated parameters are set in a template file: neutron-ovs-dpdk.yaml for automated deployments and network-environment.yaml for manual deployments. References for the CPU parameters [9], the DPDK parameters for network environment files[10], and the OVS-DPDK parameters template [11] give further detail.
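The split between host, PMD, and guest cores can be sanity-checked before deployment. The sketch below is a hedged illustration: the helper names are hypothetical, and the core IDs are example values chosen to match the counts above (4 host cores and 10 PMD cores out of 72), not the lists from Appendix A.

```python
def parse_cpu_list(spec):
    """Expand a CPU list such as "0-1,36-37" into a set of core IDs."""
    cores = set()
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            first, last = part.split("-")
            cores.update(range(int(first), int(last) + 1))
        else:
            cores.add(int(part))
    return cores


def format_cpu_list(cores):
    """Collapse a set of core IDs into a compact range string."""
    ordered = sorted(cores)
    ranges = []
    i = 0
    while i < len(ordered):
        start = end = ordered[i]
        # Extend the current range while the next core ID is consecutive.
        while i + 1 < len(ordered) and ordered[i + 1] == end + 1:
            i += 1
            end = ordered[i]
        ranges.append(str(start) if start == end else f"{start}-{end}")
        i += 1
    return ",".join(ranges)


def remaining_cores(total, host_spec, pmd_spec):
    """Return the cores left for guest VMs; reject overlapping lists."""
    host = parse_cpu_list(host_spec)
    pmd = parse_cpu_list(pmd_spec)
    if host & pmd:
        raise ValueError(f"host and PMD lists overlap: {sorted(host & pmd)}")
    return set(range(total)) - host - pmd


# Example values only (assumptions, not the Appendix A configuration).
HOST_CPUS = "0-1,36-37"
PMD_CPUS = "2,12,21-22,32,38,48,57-58,68"

guest = remaining_cores(72, HOST_CPUS, PMD_CPUS)
print(len(guest), format_cpu_list(guest))
```

The resulting range string has the same form as the value passed to NovaVcpuPinSet.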

DPDK socket memory

Socket memory is the packet buffer memory used by OVS-DPDK. Allocating an appropriately sized socket memory on compute hosts reduces packet drops.

Note: This is also a pre-deployment optimization that needs to be verified for the compute host(s).

Based on the guidance in [12], for a quad-port Intel Ethernet Network Adapter XXV710 NIC with an MTU of 9,000 bytes on each port, the following socket memory calculations apply:

1. Round up 9,000 bytes to the nearest multiple of 1,024 bytes, giving 9,216 bytes.

2. Add padding of 800 bytes to the previous result and multiply by (4,096 × 64) to obtain 2,625,634,304 bytes. The 800 bytes are the overhead value, and 4,096 × 64 is the number of packets in the mempool.

3. Repeat steps 1 and 2 for each DPDK port connected to socket 0.

4. Calculate the total amount of memory required and include a 512 MB buffer. In this case the total is (4 × 2,625,634,304 bytes) + 536,870,912 bytes, or 11,039,408,128 bytes.

5. Convert from bytes to megabytes, in this case 10,528 MB.

6. Round the previous result up to the nearest multiple of 1,024 MB, giving 11,264 MB.

7. Repeat steps 1 through 6 for socket 1. Thus, for a NUMA-balanced architecture with two quad-port Intel Ethernet Network Adapter XXV710 NICs and an MTU of 9,000 bytes, the result is 11,264 MB for socket 0 and 11,264 MB for socket 1.

Note: Allocate at least 1,024 MB to the unused socket if there are no DPDK ports attached to socket 1.
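The calculation above can be reproduced with a short script. This is a sketch under the stated assumptions (800 bytes of overhead, 4,096 × 64 packets in the mempool, a 512 MB buffer, and a 1,024 MB minimum for a socket with no DPDK ports); the function names are hypothetical and are not the JetPack get_socket_memory implementation.

```python
MIB = 1024 * 1024


def round_up(value, multiple):
    """Round value up to the next multiple of `multiple`."""
    return -(-value // multiple) * multiple


def socket_memory_mb(mtu, ports, overhead=800,
                     mempool_packets=4096 * 64, buffer_mb=512):
    """Socket memory in MB for one NUMA socket with `ports` DPDK ports."""
    if ports == 0:
        # Unused socket: allocate the 1,024 MB minimum.
        return 1024
    frame_bytes = round_up(mtu, 1024) + overhead       # steps 1 and 2
    per_port_bytes = frame_bytes * mempool_packets     # step 2
    total_bytes = ports * per_port_bytes + buffer_mb * MIB  # steps 3 and 4
    total_mb = -(-total_bytes // MIB)                  # step 5
    return round_up(total_mb, 1024)                    # step 6


# Four ports with an MTU of 9,000 bytes on one socket.
print(socket_memory_mb(9000, 4))  # 11264
```

Calling the function once per socket and joining the results with a comma gives a value in the per-socket form used for socket memory, for example "11264,11264" for the balanced case described above.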

Manual deployment

Compute hosts allocate DPDK socket memory from hugepage memory on each NUMA node. Update the network-environment.yaml file and pass it as a template file when deploying OVS-DPDK. Socket memory is set with the OvsDpdkSocketMemory parameter in this file. The socket memory required for each NIC depends on the MTU: the larger the MTU, the larger the socket memory. The previous section describes the calculation in detail.

The reference network-environment.yaml template sets the DPDK socket memory along with the other parameters mentioned[13].

Automated deployment

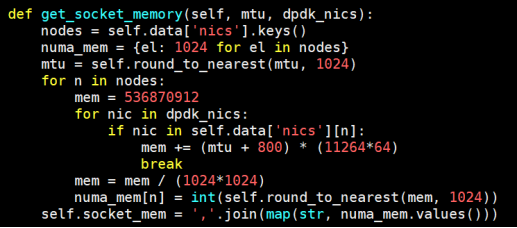

Update the socket memory for an RHOSP 16 deployment with the JetPack automation toolkit as follows:

- Manually calculate the DPDK socket memory for an MTU of 9000 bytes as described in the previous section, and call that value X. This value is for a single NIC. Calculate it likewise for the other NICs, as this setup uses four NICs. Use the aggregated value for the four NICs in the packet pool term of the get_socket_memory formula to produce 12288 MB of resultant socket memory.

- Clone the JetPack automation toolkit.

- Go to the directory: JetPack/src/pilot

- Edit the file nfv_parameters.py and update the function get_socket_memory, replacing 4096*64 with the value X calculated in the previous steps, as (4X*64). This populates the parameters in the neutron-ovs-dpdk.yaml file at runtime.

Figure 7. Socket memory function

Multiple RX queues on DPDK physical ports

Usually, a NIC has a single Rx queue processing packets between the hardware and the kernel. However, a single Rx queue performs well only at larger packet sizes. With multiple Rx queues, the NIC steers traffic to an Rx queue by hashing the source/destination IP address or the source/destination port. Multiple Rx queues give better performance because each Rx queue has a separate CPU pinned to it.

Initially, the current setup used a single Rx queue and experienced packet drops. We then enabled multiple Rx queues on the physical DPDK ports of the compute hosts. IP stepping was enabled in the traffic generator so that the traffic hashes across multiple Rx queues, in effect employing Receive Side Scaling (RSS). This yielded a significant improvement and ultimately helped achieve zero packet loss (ZPL) at 100% line rate.
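To illustrate why IP stepping matters, the following simplified Python sketch maps flows to Rx queues by hashing the flow fields. It is an assumption-laden model: real NICs typically use a Toeplitz hash with an indirection table, whereas this sketch substitutes CRC32 modulo the queue count, and the addresses, ports, and function names are made up for the example.

```python
import ipaddress
import struct
import zlib


def rx_queue_for_flow(src_ip, dst_ip, src_port, dst_port, n_queues):
    """Pick an Rx queue from a hash of the flow fields (CRC32 stand-in)."""
    key = struct.pack(
        "!HH4s4s",
        src_port,
        dst_port,
        ipaddress.IPv4Address(src_ip).packed,
        ipaddress.IPv4Address(dst_ip).packed,
    )
    return zlib.crc32(key) % n_queues


def queues_used(flows, n_queues):
    """Return the set of Rx queues hit by a list of flows."""
    return {rx_queue_for_flow(*flow, n_queues) for flow in flows}


# Without IP stepping: every packet belongs to the same flow.
single_flow = [("10.0.0.1", "10.0.1.0", 1024, 2048)] * 256

# With IP stepping: the destination address is incremented per flow.
base = ipaddress.IPv4Address("10.0.1.0")
stepped_flows = [("10.0.0.1", str(base + i), 1024, 2048) for i in range(256)]

print("single flow ->", sorted(queues_used(single_flow, 2)))
print("stepped IPs ->", sorted(queues_used(stepped_flows, 2)))
```

A single flow always lands on one queue no matter how many queues exist, while stepped addresses spread across the available queues, which is the effect the traffic generator setting relies on.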

We now discuss how to configure these parameters in a manual and automated RHOSP deployment.

Manual deployment

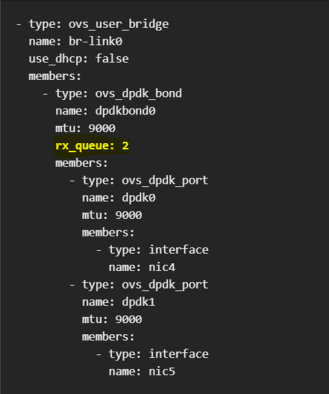

Enable multiple Rx queues in the compute.yaml template file before deployment. A reference compute template showing how to scale Rx queues is given in [14]. In the Red Hat documentation, the parameter that sets the number of Rx queues is rx_queue.

Figure 8. Rx Queue parameter in compute template

Automated deployment

In the JetPack toolkit, edit the file dellcompute_dpdk.yaml, which contains the detailed parameters of the compute host and is located at the path:

JetPack/src/pilot/templates/nic-configs/OVS-dpdk_9_port/dellcompute_dpdk.yaml

To scale the Rx queues, update the rx_queue parameter in the file. This parameter takes positive integer values.

NumDpdkInterfaceRxQueues:
  description: Number of Rx Queues required for DPDK bond or DPDK ports
  default: 2
  type: number

----------

- type: ovs_dpdk_bond
  name: dpdkbond0
  rx_queue:
    get_param: NumDpdkInterfaceRxQueues

Note: The latest deployment used two Rx queues per DPDK port and ran with zero packet loss.

Hugepages backing for VM profile

Hugepages must be enabled on compute hosts for OVS-DPDK deployment in Red Hat OpenStack Platform 16.1; this is a pre-deployment requirement. The recommended hugepage size is 1 GiB. Virtual machines spun up on compute hosts are allocated the desired number of hugepages from the host node.

DPDK memory channels

Multi-channel memory architecture provides multiple communication channels, increasing the data transfer rate between the DRAM memory modules and the memory controller. The hardware must support multiple memory channels.

Memory channels are the wire traces between the memory unit and the CPU that carry data. More memory channels provide more paths for data, allowing faster transfers. For OVS-DPDK, the OvsDpdkMemoryChannels parameter holds the number of actively used channels. The default is four memory channels per NUMA node, but the number may vary with the platform. The number of memory channels can be configured before or after deployment.

Before deployment, expose the parameter "OvsDpdkMemoryChannels" in the network-environment.yaml file. After deployment, the number of memory channels can be scaled at runtime on the compute node. The following commands scale the memory channels from the default to the desired number.

List the default values:

# ovs-vsctl get Open_vSwitch . other_config

Scale the memory channels to six.

# ovs-vsctl set Open_vSwitch . other_config:dpdk-extra="-n 6"

Listing other_config again shows the updated value:

{dpdk-extra="-n 6", dpdk-init="true", dpdk-lcore-mask="3000000003", dpdk-socket-mem="8977,1024", pmd-cpu-mask="100601004100601004"}
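The dpdk-lcore-mask and pmd-cpu-mask values in this output are hexadecimal bitmasks in which bit N stands for core N. A small Python sketch (the function names are hypothetical) decodes them into core lists, which makes it easier to compare the running configuration with the intended CPU allocation:

```python
def cores_from_mask(hex_mask):
    """Decode a hexadecimal CPU mask into a sorted list of core IDs."""
    value = int(hex_mask, 16)
    return [bit for bit in range(value.bit_length()) if (value >> bit) & 1]


def mask_from_cores(cores):
    """Encode a collection of core IDs as a lowercase hexadecimal mask."""
    value = 0
    for core in cores:
        value |= 1 << core
    return format(value, "x")


# Masks copied from the other_config output above.
print(cores_from_mask("3000000003"))
# [0, 1, 36, 37]
print(cores_from_mask("100601004100601004"))
# [2, 12, 21, 22, 32, 38, 48, 57, 58, 68]
```

The decoded masks contain four and ten cores respectively, consistent with the four host cores and ten PMD cores described earlier in this section.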

DPDK Tx and Rx descriptor size

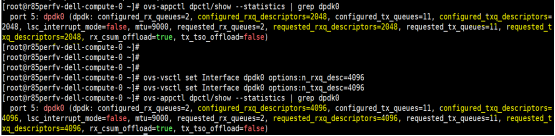

The descriptor size defines the Tx/Rx queue size for each port. On each compute node, both the Tx and Rx descriptor sizes were changed from the default of 2048 to 4096. The steps to configure this are as follows:

Check the default statistics for the DPDK port.

# ovs-appctl dpctl/show --statistics | grep dpdk0

Scale the Rx and Tx descriptor sizes for each DPDK port.

# ovs-vsctl set Interface dpdk0 options:n_rxq_desc=4096

# ovs-vsctl set Interface dpdk0 options:n_txq_desc=4096
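When several DPDK ports need the same change, the commands can be generated rather than typed one by one. The sketch below only builds the command lines and does not run them. The port name dpdk1 is hypothetical, and the power-of-two check reflects the usual constraint on DPDK descriptor counts, stated here as an assumption rather than a documented rule of this setup.

```python
def descriptor_commands(ports, size=4096):
    """Build ovs-vsctl argument lists that set Rx and Tx descriptor sizes."""
    # Assumption: descriptor counts are expected to be powers of two.
    if size <= 0 or size & (size - 1):
        raise ValueError(f"descriptor size {size} is not a power of two")
    return [
        [
            "ovs-vsctl", "set", "Interface", port,
            f"options:n_rxq_desc={size}",
            f"options:n_txq_desc={size}",
        ]
        for port in ports
    ]


# dpdk0 is the port used above; dpdk1 is an assumed second port.
for cmd in descriptor_commands(["dpdk0", "dpdk1"]):
    print(" ".join(cmd))
```

Each generated line sets both options in a single ovs-vsctl call, which is equivalent to the two separate commands shown above.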

Figure 9. DPDK port statistics

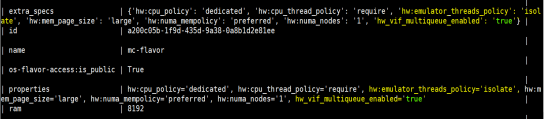

Emulator thread policy, multiple Rx queues on VirtIO

Achieve emulator thread pinning by assigning an extra flavor spec. It is recommended to set the emulator threads policy to isolate in addition to the dedicated CPU policy on the flavor[15].

Enabling multiple Rx queues on virtual interfaces also requires assigning an extra flavor spec. The following command sets these properties on the flavor used for instance creation[16].

# openstack flavor set <flavor name> --property hw:emulator_threads_policy=isolate --property hw:cpu_policy=dedicated --property hw_vif_multiqueue_enabled=true

Figure 10. VM flavor properties

IsolCPUs

Kernel threads and interrupts run in isolation from the CPUs dedicated to guest VMs. The isolcpus parameter is configured in the GRUB kernel command line. The CPU lists for the nohz_full and rcu_nocbs parameters are identical to the isolcpus list. The configuration is given in Appendix A under the "Other configurations" section.