
NVMe/TCP configuration


The following sections describe the configuration of NVMe/TCP initiators on compute nodes.

Prerequisites

Meet the following requirements to configure NVMe/TCP on bare metal nodes:

- Compute nodes run SLES 15 SP5 with kernel 5.14.21-150500.55.52 or later. This is the minimum SLES kernel version that PowerFlex supports for NVMe/TCP.

- The PowerFlex Storage Data Target (SDT) component is installed on each storage node.
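To check the kernel requirement before continuing, the following minimal sketch compares a kernel release string with the minimum version listed above. The function name kernel_at_least is hypothetical, and the sketch assumes GNU coreutils sort -V for version ordering and that any flavour suffix (such as -default) sits at the end of the release string.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): check a kernel release string against
# the minimum SLES 15 SP5 kernel listed for PowerFlex NVMe/TCP.
# Assumes GNU coreutils "sort -V" (version sort) is available.

MIN_KERNEL="5.14.21-150500.55.52"

# kernel_at_least RELEASE: exit 0 if RELEASE is equal to or newer than MIN_KERNEL.
kernel_at_least() {
    # Strip a trailing flavour suffix such as "-default" so only the
    # numeric version part is compared.
    release=$(printf '%s\n' "$1" | sed 's/-[a-z][a-z0-9_]*$//')
    # Version-sort both strings; if MIN_KERNEL sorts first (or they are
    # equal), the release meets the minimum.
    lowest=$(printf '%s\n%s\n' "$MIN_KERNEL" "$release" | sort -V | head -n 1)
    [ "$lowest" = "$MIN_KERNEL" ]
}
```

On a compute node, it could be called as kernel_at_least "$(uname -r)" && echo "kernel OK".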

Configuring NVMe/TCP initiators on the compute nodes

Perform the following steps to configure the NVMe/TCP initiator on the compute nodes:

- Log in to the compute node as the root user.

- Check whether the NVMe modules are loaded:

$ lsmod | grep nvme

nvme_tcp 40960 0

nvme_fabrics 28672 0 nvme_tcp

nvme_core 163840 2 nvme_tcp,nvme_fabrics

nvme_common 24576 1 nvme_core

t10_pi 16384 2 sd_mod,nvme_core

- Load the NVMe modules:

$ modprobe nvme_tcp

$ modprobe nvme_fabrics

- Run the following command again to confirm that the NVMe modules are loaded:

$ lsmod | grep nvme

nvme_tcp 40960 0

nvme_fabrics 28672 1 nvme_tcp

nvme_core 163840 2 nvme_tcp,nvme_fabrics

nvme_common 24576 1 nvme_core

t10_pi 16384 2 sd_mod,nvme_core
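The same check can be scripted so that it reports only what is missing. This minimal sketch reads lsmod output on standard input and prints any of the two modules loaded above that do not appear; the function name missing_nvme_modules is hypothetical.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): report which of the NVMe/TCP modules
# loaded in this section are missing from lsmod output read on stdin.

REQUIRED_MODULES="nvme_tcp nvme_fabrics"

# missing_nvme_modules: print each required module that does not appear in
# the first column of stdin; return 1 if any module is missing.
missing_nvme_modules() {
    # Keep only module names, skipping the "Module Size Used by" header.
    loaded=$(awk '$1 != "Module" { print $1 }')
    status=0
    for mod in $REQUIRED_MODULES; do
        if ! printf '%s\n' "$loaded" | grep -qx "$mod"; then
            echo "$mod"
            status=1
        fi
    done
    return "$status"
}
```

For example, lsmod | missing_nvme_modules && echo "NVMe modules loaded" prints nothing but the confirmation when both modules are present.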

- Configure the NVMe modules to load automatically at boot:

$ echo nvme_tcp >> /etc/modules-load.d/nvme_tcp.conf

$ echo nvme_fabrics >> /etc/modules-load.d/nvme_tcp.conf
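Running the two echo commands more than once appends duplicate lines to the file. The following minimal sketch adds each module name only if it is not already present. The helper name ensure_module_entry and the CONF_FILE override are assumptions for illustration; by default it writes the same file as the commands above.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): write modules-load.d entries
# idempotently. CONF_FILE defaults to the path used in this section; the
# ability to override it (for example, for a dry run) is an assumption.

CONF_FILE="${CONF_FILE:-/etc/modules-load.d/nvme_tcp.conf}"

# ensure_module_entry MODULE: append MODULE to CONF_FILE only if it is not
# already present as a whole line.
ensure_module_entry() {
    touch "$CONF_FILE" || return 1
    grep -qx "$1" "$CONF_FILE" || printf '%s\n' "$1" >> "$CONF_FILE"
}
```

As root, for m in nvme_tcp nvme_fabrics; do ensure_module_entry "$m"; done produces one line per module no matter how often it runs.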

- Install the NVMe CLI if it is not installed:

$ zypper install nvme-cli

- Check that the NVMe CLI is installed:

$ nvme

nvme-2.4

usage: nvme <command> [<device>] [<args>]

- Verify that the host NQN and host ID are available:

$ nvme show-hostnqn

nqn.2014-08.org.nvmexpress:uuid:4c4c4544-0039-5110-8037-b9c04f445632

$ cat /etc/nvme/hostid

4c4c4544-0039-5110-8037-b9c04f445632

The NVMe modules are now loaded and the NVMe/TCP initiator is configured on the compute node.
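Because the host NQN is entered by hand in PowerFlex Manager, it can help to confirm its format first. In the example output above, the UUID in the host NQN matches /etc/nvme/hostid. The following minimal sketch checks both; the function name is hypothetical, and treating a match with the host ID as desirable is a convenience check, not a stated PowerFlex requirement.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): confirm that a UUID-based host NQN is
# well formed and embeds the same UUID as the host ID.

# hostnqn_matches_hostid NQN HOSTID: exit 0 if NQN has the form
# nqn.2014-08.org.nvmexpress:uuid:<UUID> and <UUID> equals HOSTID.
hostnqn_matches_hostid() {
    prefix="nqn.2014-08.org.nvmexpress:uuid:"
    case "$1" in
        "$prefix"*) uuid=${1#"$prefix"} ;;
        *) return 1 ;;
    esac
    # A UUID is 8-4-4-4-12 hexadecimal digits.
    printf '%s\n' "$uuid" | grep -Eqx '[0-9a-fA-F]{8}(-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}' || return 1
    [ "$uuid" = "$2" ]
}
```

On the compute node: hostnqn_matches_hostid "$(nvme show-hostnqn)" "$(cat /etc/nvme/hostid)" && echo "host NQN OK".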

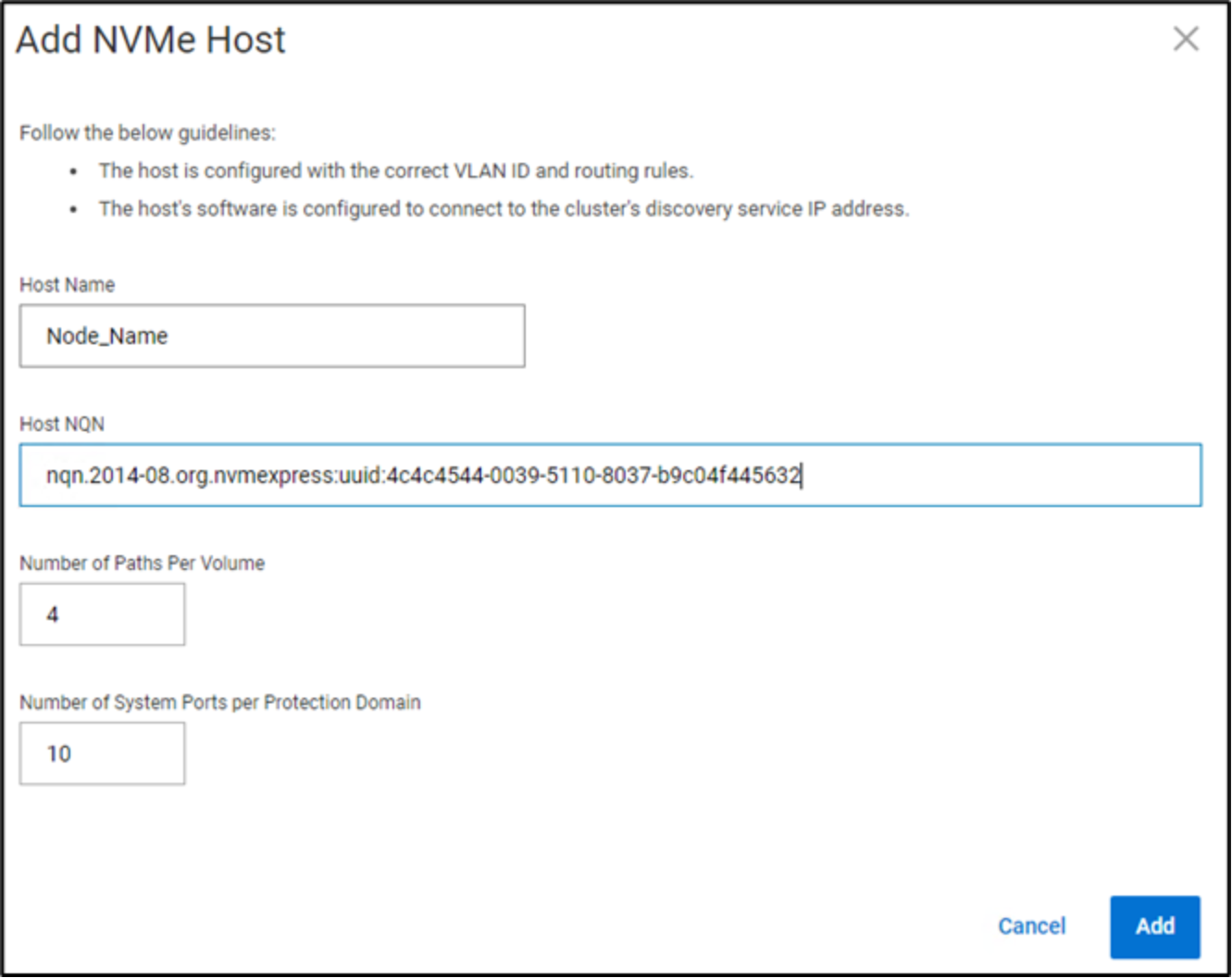

Adding NVMe hosts to PowerFlex

Perform the following steps to add the bare metal compute servers as NVMe hosts in the PowerFlex system so that they can consume storage:

- Log in to PowerFlex Manager.

- To add NVMe hosts to the PowerFlex system, click Block > Hosts.

- Click Add Hosts.

- In the Add Host window, enter the host name and the host NQN obtained in the preceding section (the output of nvme show-hostnqn).

The default number of paths is 4.

- Click Add. The added hosts are listed under Block > Hosts.

Figure 7. Adding NVMe nodes

Mapping volumes to NVMe hosts

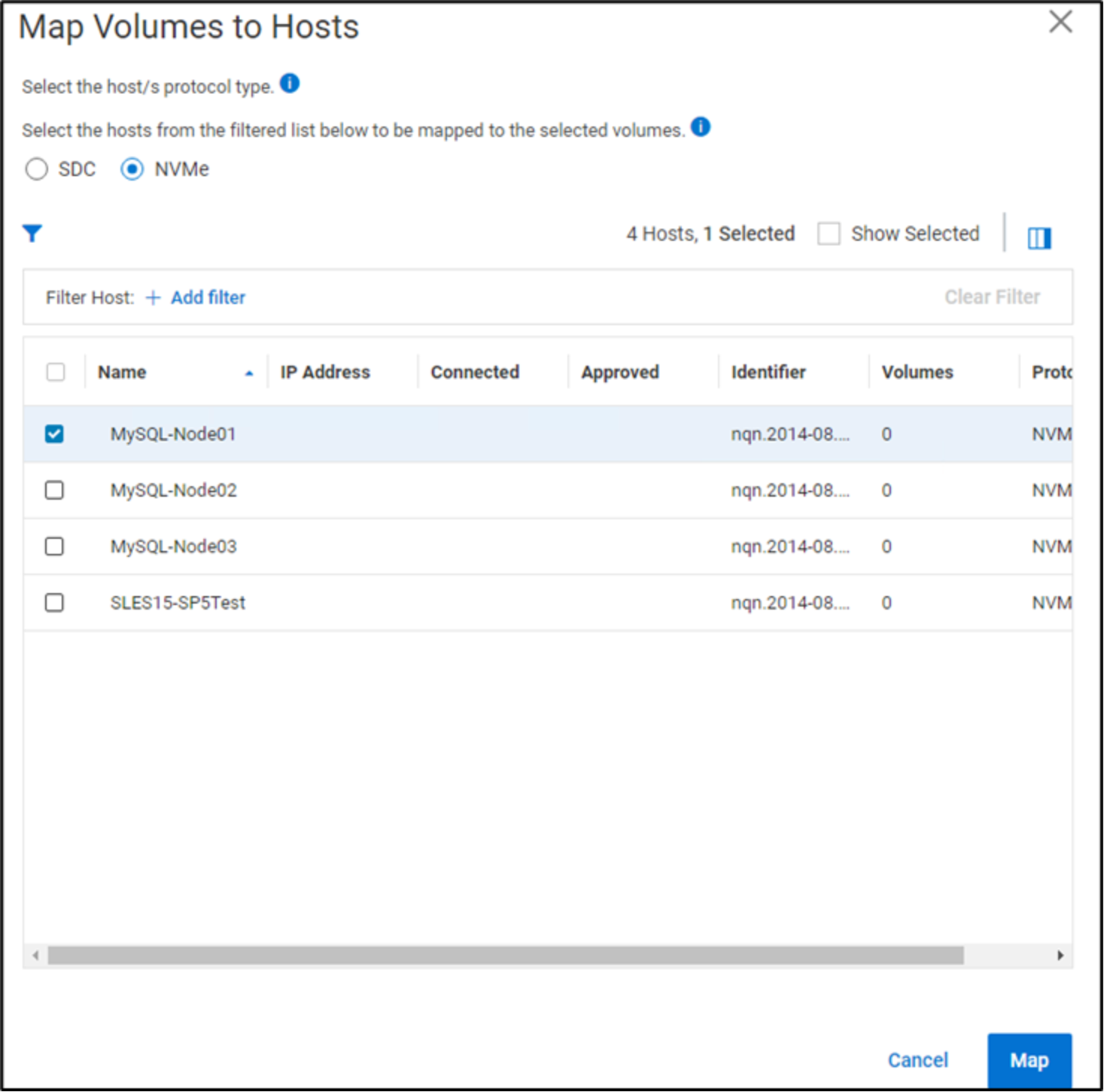

Perform the following steps to map PowerFlex volumes to the NVMe hosts:

- To add a volume to the NVMe host, click Block > Volumes. If no volumes exist yet, create them as follows:

- Click Create Volume.

- Provide a name for the volume.

- Specify the size of the volumes in multiples of 8 GB.

- Click Create.

- To map the volumes, click Block > Volumes and select the checkbox next to each volume that must be mapped to the host added in Adding NVMe hosts to PowerFlex.

- Click Mapping, and then select NVMe.

- Select the checkbox next to the name of the hosts to which the volumes must be mapped.

- Click Map to map the selected volumes to the host, as shown in the following figure:

Figure 8. Adding volumes to the NVMe node

Discovering volumes on NVMe host

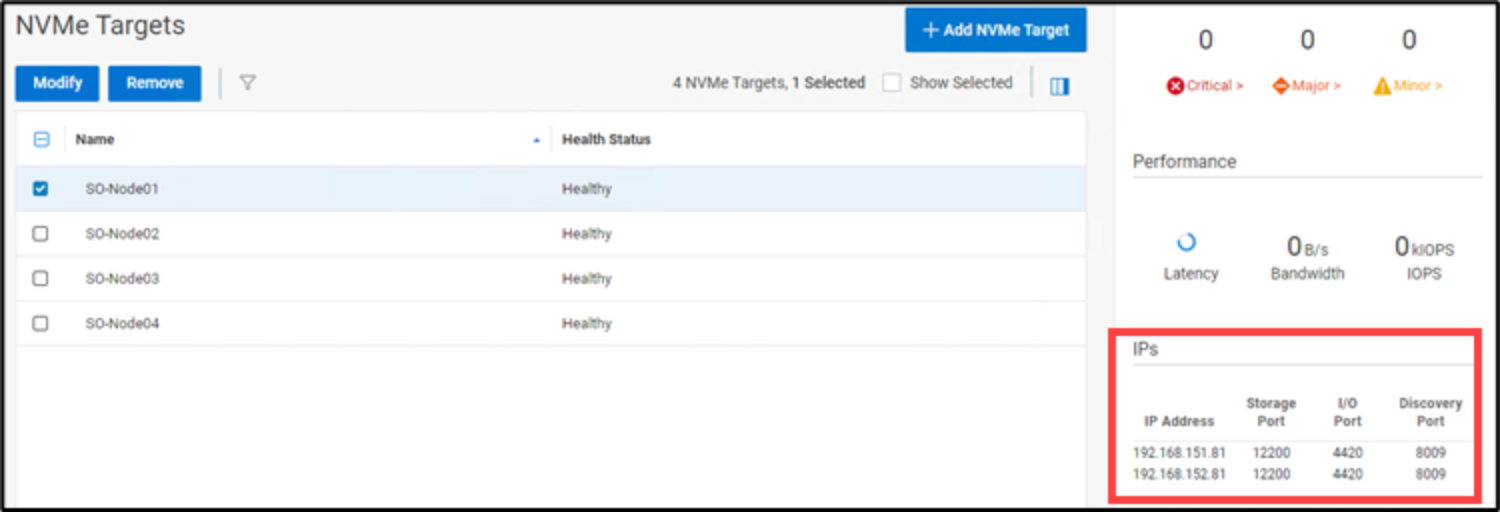

Perform the following steps to discover the mapped NVMe volumes on the compute nodes and to make the connections persistent across reboots:

- Log in to PowerFlex Manager.

- Click Block > NVMe Targets.

- Select the NVMe target nodes and note the IP addresses and discovery ports displayed at the bottom right of the screen, as shown in the following figure:

Figure 9. NVMe target node configuration

The IP address and port information is required to discover the NVMe targets on the compute nodes.

- Reboot the host and verify that the NVMe modules are automatically loaded:

$ lsmod | grep nvme

nvme_tcp 40960 0

nvme_fabrics 28672 1 nvme_tcp

nvme_core 163840 2 nvme_tcp,nvme_fabrics

nvme_common 24576 1 nvme_core

t10_pi 16384 2 sd_mod,nvme_core

- Discover NVMe SDT interfaces (NVMe/TCP targets) on the compute nodes:

$ nvme discover --transport=tcp --traddr=192.168.151.81 -s 4420

Discovery Log Number of Records 8, Generation counter 2

=====Discovery Log Entry 0======

trtype: tcp

adrfam: ipv4

subtype: nvme subsystem

treq: not specified, sq flow control disable supported

portid: 0

trsvcid: 4420

subnqn: nqn.1988-11.com.dell:powerflex:00:f54bea39e689d10f

traddr: 192.168.151.84

eflags: none

sectype: none

Here, 192.168.151.81 is the data network IP address of a storage node.

Note: Use the IP address and port noted under Block > NVMe Targets in PowerFlex Manager.
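To see at a glance which targets the discovery log returned, the following minimal sketch extracts one address:port pair per discovery log entry from the text output shown above. The function name is hypothetical, and the sketch assumes the entry banner and the traddr and trsvcid field labels appear as in this example.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): list "traddr:trsvcid" for each entry
# in "nvme discover" text output read on stdin.

list_discovered_targets() {
    awk '
        # Each entry starts with a "=====Discovery Log Entry N======" banner;
        # flush the previous entry when a new banner appears.
        /^=+Discovery Log Entry/ { if (addr != "") print addr ":" port; addr = ""; port = "" }
        $1 == "trsvcid:" { port = $2 }
        $1 == "traddr:"  { addr = $2 }
        END { if (addr != "") print addr ":" port }
    '
}
```

For example: nvme discover --transport=tcp --traddr=192.168.151.81 -s 4420 | list_discovered_targets.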

- Check that multipathing is enabled:

# cat /sys/module/nvme_core/parameters/multipath

Y
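For scripted health checks, the same parameter can be tested directly. This minimal sketch succeeds only when the parameter file reads Y; the function name and the optional path argument (used here so the check can be exercised against a test file) are assumptions.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): succeed only if native NVMe
# multipathing is enabled. The optional argument overrides the sysfs path.

nvme_multipath_enabled() {
    param_file="${1:-/sys/module/nvme_core/parameters/multipath}"
    # Fail if the parameter file is absent (for example, nvme_core not loaded).
    [ -r "$param_file" ] || return 1
    [ "$(cat "$param_file")" = "Y" ]
}
```

On the compute node, nvme_multipath_enabled && echo "multipath enabled" performs the same check as the cat command above.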

- Connect all the paths to the PowerFlex NVMe targets:

$ nvme connect-all --transport=tcp --traddr=192.168.151.81 -s 4420

- Verify path connectivity:

$ nvme list-subsys

nvme-subsys9 - NQN=nqn.2014-08.org.nvmexpress.discovery

hostnqn=nqn.2014-08.org.nvmexpress:uuid:4c4c4544-0039-5110-8037-b9c04f445632

iopolicy=numa

\

+- nvme9 tcp traddr=192.168.151.82,trsvcid=4420 live

.

.

.

.

+- nvme1 tcp traddr=192.168.151.84,trsvcid=4420 live

+- nvme2 tcp traddr=192.168.152.84,trsvcid=4420 live

+- nvme3 tcp traddr=192.168.151.81,trsvcid=4420 live

+- nvme4 tcp traddr=192.168.152.81,trsvcid=4420 live

+- nvme5 tcp traddr=192.168.151.83,trsvcid=4420 live

+- nvme6 tcp traddr=192.168.152.83,trsvcid=4420 live

+- nvme7 tcp traddr=192.168.151.82,trsvcid=4420 live

+- nvme8 tcp traddr=192.168.152.82,trsvcid=4420 live

nvme-subsys0 - NQN=nqn.2014-08.org.nvmexpress.discovery

hostnqn=nqn.2014-08.org.nvmexpress:uuid:4c4c4544-0039-5110-8036-b9c04f445632

iopolicy=numa

\

+- nvme0 tcp traddr=192.168.151.81,trsvcid=4420 live

Note: This command lists all the paths that are available for connectivity.
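With many paths, counting them is easier than reading the tree. The following minimal sketch reads nvme list-subsys text output, prints any path whose last field is not live, and reports how many paths are live. The function name is hypothetical, and the sketch assumes path lines begin with "+-" and end with the path state, as in the output above.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): summarize "nvme list-subsys" output
# read on stdin; exit 0 only if at least one path exists and all are live.

check_nvme_paths() {
    awk '
        # Path lines look like: +- nvme3 tcp traddr=192.168.151.81,trsvcid=4420 live
        $1 == "+-" {
            total++
            if ($NF == "live") live++
            else print "not live: " $0
        }
        END {
            printf "%d of %d paths live\n", live, total
            exit !(live == total && total > 0)
        }
    '
}
```

For example, nvme list-subsys | check_nvme_paths; compare the reported total with the number of paths you expect from the discovery log.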

- Verify that the volumes are seen on the compute nodes:

$ lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

sda 8:0 0 160G 0 disk

├─sda1 8:1 0 512M 0 part /boot/efi

├─sda2 8:2 0 10G 0 part /

├─sda3 8:3 0 86.7G 0 part /home

└─sda4 8:4 0 62.8G 0 part [SWAP]

sr0 11:0 1 1024M 0 rom

nvme1n1 259:1 0 760G 0 disk

nvme1n2 259:3 0 760G 0 disk

nvme1n3 259:5 0 760G 0 disk
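To compare the volumes seen on the host with those mapped in PowerFlex Manager, the following minimal sketch filters lsblk output down to whole NVMe namespaces. The function name is hypothetical, and it expects input from lsblk -dn -o NAME,SIZE,TYPE (no partitions, no header).

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): print NAME and SIZE for NVMe
# namespaces (nvmeXnY disks) from "lsblk -dn -o NAME,SIZE,TYPE" on stdin.

list_nvme_disks() {
    # Match whole namespaces such as nvme1n1; partitions (nvme1n1p1) are excluded.
    awk '$1 ~ /^nvme[0-9]+n[0-9]+$/ && $3 == "disk" { print $1, $2 }'
}
```

For example, lsblk -dn -o NAME,SIZE,TYPE | list_nvme_disks | wc -l should equal the number of volumes mapped to this host.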

- Make the NVMe paths and storage persistent across reboots by adding a discovery entry to the discovery configuration file:

# echo "-t tcp -a <SDT IP ADDRESS> -s 4420 -p" | tee -a /etc/nvme/discovery.conf

Note: Replace <SDT IP ADDRESS> with the data network IP address of a storage node.
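Because tee -a appends on every run, repeating the command adds duplicate entries. The following minimal sketch adds an entry only if the identical line is not already present. The helper name add_discovery_entry and the DISCOVERY_CONF override are assumptions for illustration; the entry format matches the command above.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): add a discovery entry for one SDT
# address without duplicating it on rerun. DISCOVERY_CONF defaults to the
# file used above; overriding it is an assumption (useful for a dry run).

DISCOVERY_CONF="${DISCOVERY_CONF:-/etc/nvme/discovery.conf}"

# add_discovery_entry SDT_IP [PORT]: PORT defaults to 4420, as in this section.
add_discovery_entry() {
    entry="-t tcp -a $1 -s ${2:-4420} -p"
    touch "$DISCOVERY_CONF" || return 1
    # "--" stops grep from treating the leading "-t" as an option.
    grep -qxF -- "$entry" "$DISCOVERY_CONF" || printf '%s\n' "$entry" >> "$DISCOVERY_CONF"
}
```

As root, add_discovery_entry 192.168.151.81 writes the same line as the echo command above, once.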

- Enable and start the nvmf-autoconnect service:

# systemctl enable nvmf-autoconnect.service

# systemctl start nvmf-autoconnect.service

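- Check that the nvmf-autoconnect service is enabled and that its last run succeeded. The service runs nvme connect-all once and then exits, so an Active state of inactive (dead) together with status=0/SUCCESS is expected: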

# systemctl status nvmf-autoconnect.service

○ nvmf-autoconnect.service - Connect NVMe-oF subsystems automatically during boot

Loaded: loaded (/usr/lib/systemd/system/nvmf-autoconnect.service; enabled; vendor preset: disabled)

Active: inactive (dead) since Wed 2024-07-24 06:41:44 EDT; 5min ago

Process: 59572 ExecStartPre=/sbin/modprobe nvme-fabrics (code=exited, status=0/SUCCESS)

Process: 59573 ExecStart=/usr/sbin/nvme connect-all (code=exited, status=0/SUCCESS)

Main PID: 59573 (code=exited, status=0/SUCCESS)

Jul 24 06:41:44 MySQL-Node01 systemd[1]: Starting Connect NVMe-oF subsystems automatically during boot...

Jul 24 06:41:44 MySQL-Node01 systemd[1]: nvmf-autoconnect.service: Deactivated successfully.

Jul 24 06:41:44 MySQL-Node01 systemd[1]: Finished Connect NVMe-oF subsystems automatically during boot.
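For an unattended check, the status output can be reduced to two properties. This minimal sketch reads the KEY=VALUE lines printed by systemctl show nvmf-autoconnect.service -p UnitFileState -p Result and succeeds only if the unit is enabled and its last run ended with Result=success. The function name is hypothetical.

```shell
#!/bin/sh
# Minimal sketch (hypothetical helper): interpret
#   systemctl show nvmf-autoconnect.service -p UnitFileState -p Result
# output on stdin. The unit is inactive after connect-all exits, so the
# check looks at the enablement state and the last run result instead.

autoconnect_ok() {
    awk -F= '
        $1 == "UnitFileState" { state = $2 }
        $1 == "Result"        { result = $2 }
        END { exit !(state == "enabled" && result == "success") }
    '
}
```

For example: systemctl show nvmf-autoconnect.service -p UnitFileState -p Result | autoconnect_ok && echo "autoconnect OK".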

For more information about the NVMe configuration, see NVMe initiator configuration.