VxRail node connections

Workload domains combine ESXi hosts and network equipment, and can be set up with varying levels of hardware redundancy. Workload domains are connected to a network core that distributes data between them.

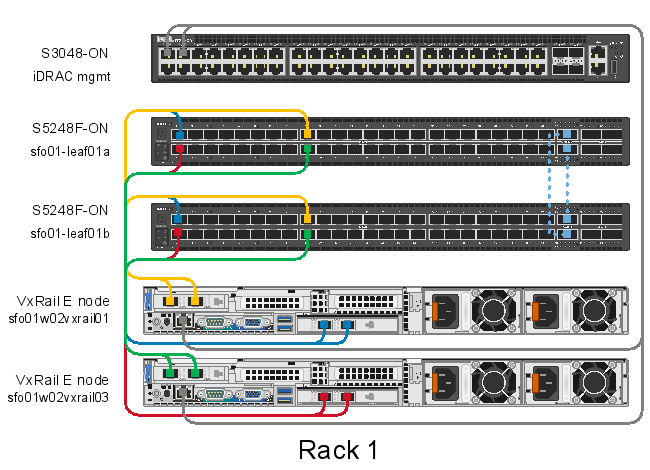

Figure 16 shows a physical view of Rack 1. On each VxRail node, the NDC links carry traditional VxRail network traffic such as management, vMotion, vSAN, and VxRail management traffic. The 2x 25 GbE PCIe adapter, shown here in slot 2, is dedicated to NSX-T overlay and NSX-T uplink traffic. Resiliency is provided by redundant leaf switches at the top of rack (ToR).
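The traffic placement described above can be written down as a small lookup table, which is sometimes handy when checking a cabling or port-group plan against the design. The following is a minimal sketch only: the dictionary keys and the `adapter_for` helper are hypothetical names chosen for illustration, not part of any VxRail or NSX-T tooling.

```python
# Traffic placement on a VxRail node, as described in the text.
# The adapter labels and the helper name are illustrative assumptions.
TRAFFIC_BY_ADAPTER = {
    "NDC": ["management", "vMotion", "vSAN", "VxRail management"],
    "PCIe slot 2 (2x 25 GbE)": ["NSX-T overlay", "NSX-T uplink"],
}


def adapter_for(traffic: str) -> str:
    """Return the adapter that carries the given traffic type."""
    for adapter, kinds in TRAFFIC_BY_ADAPTER.items():
        if traffic in kinds:
            return adapter
    raise KeyError(f"unknown traffic type: {traffic}")


if __name__ == "__main__":
    for kind in ("vSAN", "NSX-T overlay"):
        print(f"{kind} -> {adapter_for(kind)}")
```

Keeping the NSX-T traffic on its own adapter, separate from the NDC, means the two traffic classes never share a physical port in this design.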

Each VxRail node has an iDRAC connected to an S3048-ON out-of-band (OOB) management switch. This connection is used for the initial node configuration. The two S5248F-ON leaf switches are connected to each other with two QSFP28-DD 200 GbE direct attach cables (DACs), forming a VLT interconnect (VLTi) with an aggregate bandwidth of 400 Gb/s. Upstream connections to the spine switches are not shown; they use two QSFP28 100 GbE uplinks.

Figure 16. Dell EMC VxRail multirack Rack 1 physical connectivity
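The link counts and speeds given for Rack 1 translate into aggregate bandwidth figures with simple multiplication. The sketch below works through that arithmetic. The `LinkGroup` class and its field names are hypothetical, and the text does not say whether the two 100 GbE spine uplinks are counted per leaf switch or per rack, so the uplink figure is simply the sum of the two links mentioned.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkGroup:
    """A set of identical parallel links (illustrative helper, not a Dell API)."""
    name: str
    count: int
    gbps_per_link: int

    @property
    def aggregate_gbps(self) -> int:
        # Nominal aggregate bandwidth: number of links times per-link speed.
        return self.count * self.gbps_per_link


# Values taken from the section text.
vlti = LinkGroup("VLTi (QSFP28-DD DAC)", count=2, gbps_per_link=200)
spine_uplinks = LinkGroup("Spine uplinks (QSFP28)", count=2, gbps_per_link=100)
nsx_adapter = LinkGroup("NSX-T PCIe adapter, per node", count=2, gbps_per_link=25)

if __name__ == "__main__":
    for group in (vlti, spine_uplinks, nsx_adapter):
        print(
            f"{group.name}: {group.count} x {group.gbps_per_link} GbE "
            f"= {group.aggregate_gbps} Gb/s"
        )
```

These are nominal line rates; usable throughput depends on traffic patterns and on how flows hash across the member links.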