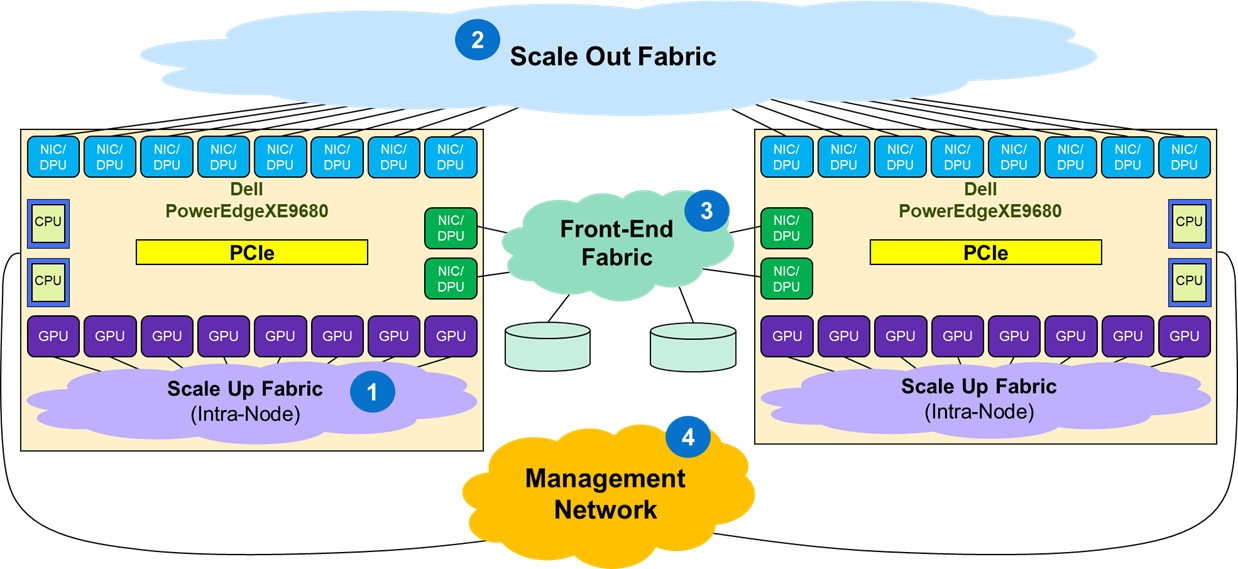

The eight GPUs within an XE9680 are interconnected by an Intra-Node Scale Up Fabric that creates a high-bandwidth GPU domain. Eight of the NICs/DPUs are coupled through PCIe with the eight GPUs and are used to exchange parameters among GPUs in different chassis through a Scale Out Fabric. The remaining two NICs/DPUs are connected to a front-end fabric, which is used to deploy storage, applications, and in-band cluster management.
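A minimal Python sketch of the per-node NIC/DPU roles described above can help when estimating how many connections each fabric must provide. The `Node` class, the `cluster_nic_counts` helper, and the NIC naming scheme are hypothetical. Pairing GPU *i* with scale-out NIC *i* is an assumption: the text only states that eight NICs/DPUs are coupled with the eight GPUs over PCIe.

```python
from dataclasses import dataclass, field

# Per-node counts taken from the description of one XE9680 chassis.
GPUS_PER_NODE = 8
SCALE_OUT_NICS_PER_NODE = 8   # coupled to the GPUs over PCIe
FRONT_END_NICS_PER_NODE = 2   # storage, applications, in-band management


@dataclass
class Node:
    """Hypothetical model of one XE9680 chassis and its NIC/DPU roles."""
    name: str
    gpu_to_nic: dict = field(init=False)
    front_end_nics: list = field(init=False)

    def __post_init__(self) -> None:
        # Assumption: GPU i uses scale-out NIC i (a 1:1 pairing).
        self.gpu_to_nic = {
            gpu: f"{self.name}-so{gpu}" for gpu in range(GPUS_PER_NODE)
        }
        # The two remaining NICs/DPUs attach to the front-end fabric.
        self.front_end_nics = [
            f"{self.name}-fe{i}" for i in range(FRONT_END_NICS_PER_NODE)
        ]


def cluster_nic_counts(num_nodes: int) -> dict:
    """Count the NICs/DPUs attached to each fabric for a cluster of nodes."""
    return {
        "scale_out": num_nodes * SCALE_OUT_NICS_PER_NODE,
        "front_end": num_nodes * FRONT_END_NICS_PER_NODE,
    }


if __name__ == "__main__":
    node = Node("xe9680-01")
    print(node.gpu_to_nic[3])        # xe9680-01-so3
    print(cluster_nic_counts(4))     # {'scale_out': 32, 'front_end': 8}
```

For example, a four-chassis cluster needs 32 scale-out connections and 8 front-end connections under this one-link-per-NIC assumption.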

Figure 9 shows the different fabrics that support the deployment of a typical AI workload:

1. Scale Up Fabric
   - This fabric interconnects the eight GPUs to form a high-bandwidth domain, usually through proprietary interconnect technologies (e.g., NVIDIA NVLink or AMD XGMI).

2. Scale Out Fabric
   - This fabric is used by the GPUs that make up a GPU cluster to exchange AI workload parameters.

3. Front-End Fabric
   - This fabric supports the deployment of the storage, application, and in-band cluster management components of the AI solution.

4. Management Network
   - This network supports management of the overall solution environment.
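The division of traffic across the four fabrics can be sketched as a simple classifier. This is an illustrative Python model, not a Dell or vendor API: `select_fabric` and the traffic-kind strings are hypothetical, and sending intra-chassis parameter exchange over the scale-up fabric is a simplifying assumption based on that fabric forming the high-bandwidth domain inside one chassis.

```python
from enum import Enum


class Fabric(Enum):
    SCALE_UP = "Scale Up Fabric"
    SCALE_OUT = "Scale Out Fabric"
    FRONT_END = "Front-End Fabric"
    MANAGEMENT = "Management Network"


# Hypothetical traffic categories derived from the fabric descriptions.
FRONT_END_TRAFFIC = {"storage", "application", "in-band-management"}


def select_fabric(kind: str, same_chassis: bool = False) -> Fabric:
    """Return the fabric expected to carry a kind of traffic (illustrative)."""
    if kind == "gpu-parameters":
        # Assumption: GPUs in one chassis use the scale-up domain;
        # GPUs in different chassis use the scale-out fabric.
        return Fabric.SCALE_UP if same_chassis else Fabric.SCALE_OUT
    if kind in FRONT_END_TRAFFIC:
        return Fabric.FRONT_END
    if kind == "environment-management":
        return Fabric.MANAGEMENT
    raise ValueError(f"unknown traffic kind: {kind!r}")


if __name__ == "__main__":
    print(select_fabric("gpu-parameters", same_chassis=True).value)
    print(select_fabric("gpu-parameters").value)
    print(select_fabric("storage").value)
```

Using an `Enum` keeps the set of fabrics closed, so a misspelled fabric name fails loudly instead of silently creating a new category.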

Other names used for these fabrics include:

- Scale Up Fabric
  - GPU Fabric, Back-End Fabric, Front-End Fabric.

- Scale Out Fabric
  - Host Fabric, East-West Fabric, Back-End Fabric, Front-End Fabric.

- Front-End Fabric
  - Storage/Access Fabric, North-South Fabric.